- About This Manual

- I Introduction

- II Managing Virtual Machines with

libvirt - III Hypervisor-Independent Features

- IV Managing Virtual Machines with Xen

- 19 Setting Up a Virtual Machine Host

- 20 Virtual Networking

- 21 Managing a Virtualization Environment

- 22 Block Devices in Xen

- 23 Virtualization: Configuration Options and Settings

- 24 Administrative Tasks

- 25 XenStore: Configuration Database Shared between Domains

- 26 Xen as a High-Availability Virtualization Host

- V Managing Virtual Machines with QEMU

- VI Managing Virtual Machines with LXC

- Glossary

- A Appendix

- B XM, XL Toolstacks and Libvirt framework

- C GNU Licenses

openSUSE Leap 15.2

Virtualization Guide

Describes virtualization technology in general, and introduces libvirt—the unified interface to virtualization—and detailed information on specific hypervisors.

- About This Manual

- I Introduction

- II Managing Virtual Machines with

libvirt - 6 Starting and Stopping

libvirtd - 7 Guest Installation

- 8 Basic VM Guest Management

- 9 Connecting and Authorizing

- 10 Managing Storage

- 11 Managing Networks

- 12 Configuring Virtual Machines with Virtual Machine Manager

- 12.1 Machine Setup

- 12.2 Storage

- 12.3 Controllers

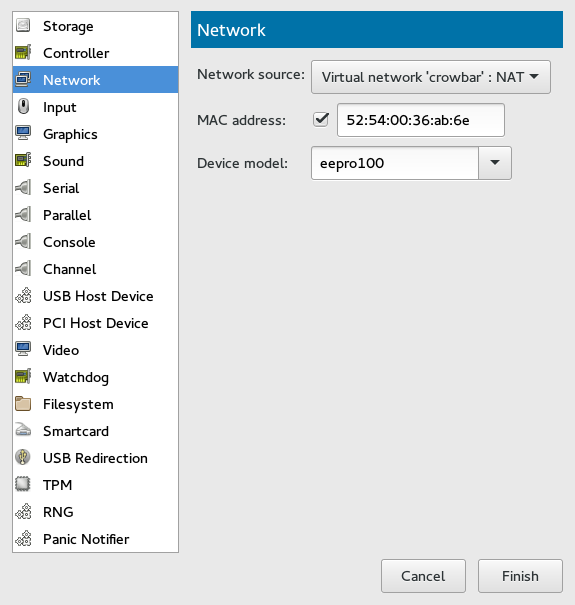

- 12.4 Networking

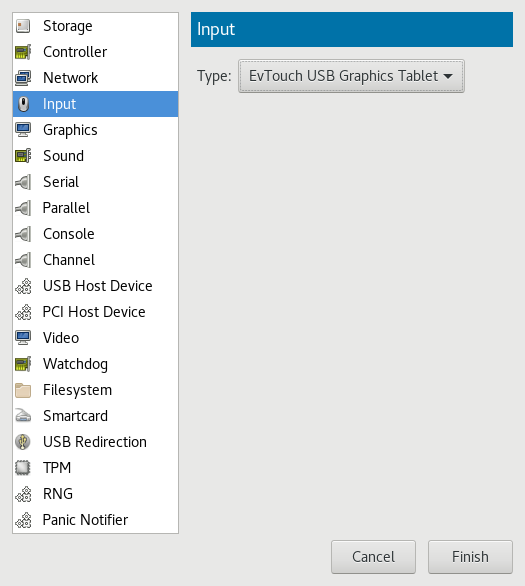

- 12.5 Input Devices

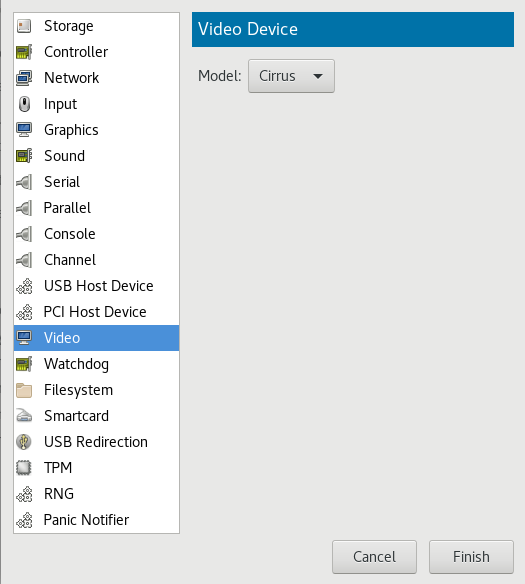

- 12.6 Video

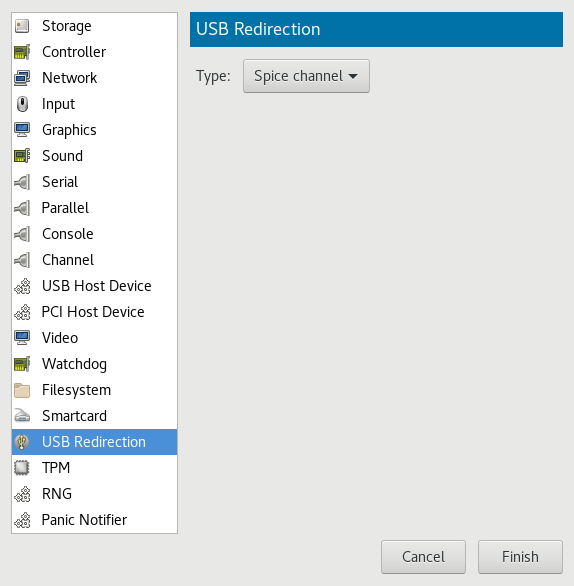

- 12.7 USB Redirectors

- 12.8 Miscellaneous

- 12.9 Adding a CD/DVD-ROM Device with Virtual Machine Manager

- 12.10 Adding a Floppy Device with Virtual Machine Manager

- 12.11 Ejecting and Changing Floppy or CD/DVD-ROM Media with Virtual Machine Manager

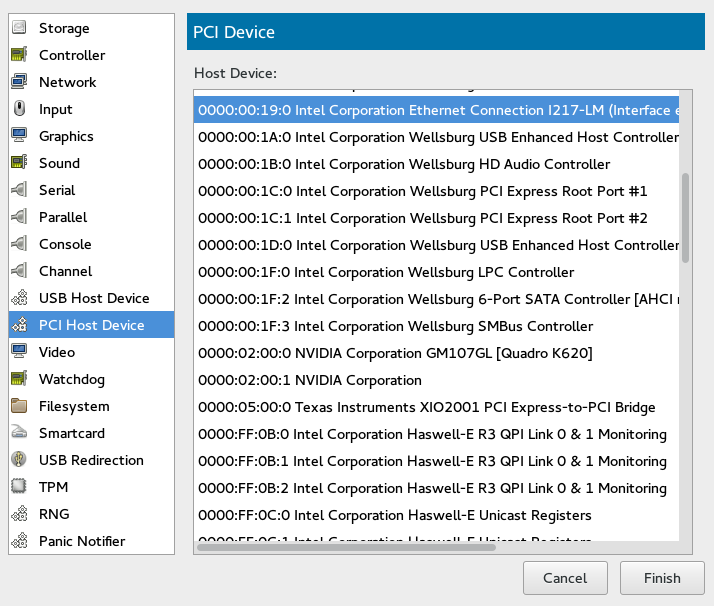

- 12.12 Assigning a Host PCI Device to a VM Guest

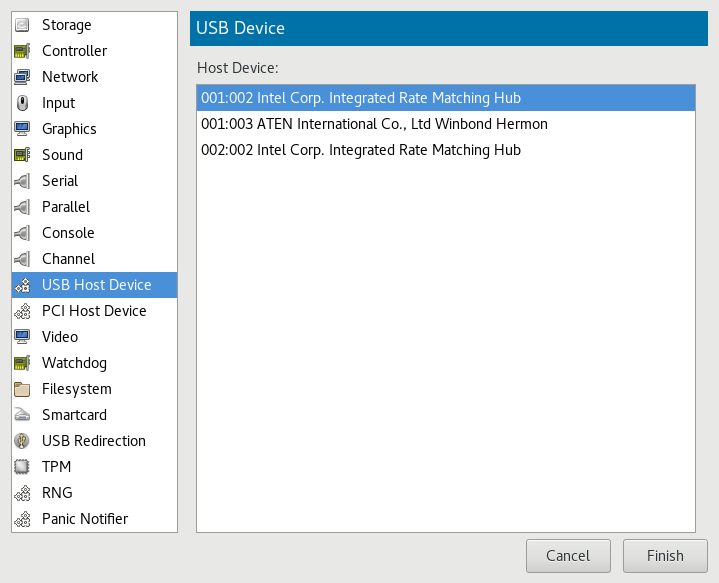

- 12.13 Assigning a Host USB Device to a VM Guest

- 13 Configuring Virtual Machines with

virsh - 13.1 Editing the VM Configuration

- 13.2 Changing the Machine Type

- 13.3 Configuring Hypervisor Features

- 13.4 Configuring CPU Allocation

- 13.5 Changing Boot Options

- 13.6 Configuring Memory Allocation

- 13.7 Adding a PCI Device

- 13.8 Adding a USB Device

- 13.9 Adding SR-IOV Devices

- 13.10 Listing Attached Devices

- 13.11 Configuring Storage Devices

- 13.12 Configuring Controller Devices

- 13.13 Configuring Video Devices

- 13.14 Configuring Network Devices

- 13.15 Using Macvtap to Share VM Host Server Network Interfaces

- 13.16 Disabling a Memory Balloon Device

- 13.17 Configuring Multiple Monitors (Dual Head)

- 14 Managing Virtual Machines with Vagrant

- 6 Starting and Stopping

- III Hypervisor-Independent Features

- IV Managing Virtual Machines with Xen

- 19 Setting Up a Virtual Machine Host

- 20 Virtual Networking

- 21 Managing a Virtualization Environment

- 22 Block Devices in Xen

- 23 Virtualization: Configuration Options and Settings

- 24 Administrative Tasks

- 25 XenStore: Configuration Database Shared between Domains

- 26 Xen as a High-Availability Virtualization Host

- V Managing Virtual Machines with QEMU

- 27 QEMU Overview

- 28 Setting Up a KVM VM Host Server

- 29 Guest Installation

- 30 Running Virtual Machines with qemu-system-ARCH

- 31 Virtual Machine Administration Using QEMU Monitor

- 31.1 Accessing Monitor Console

- 31.2 Getting Information about the Guest System

- 31.3 Changing VNC Password

- 31.4 Managing Devices

- 31.5 Controlling Keyboard and Mouse

- 31.6 Changing Available Memory

- 31.7 Dumping Virtual Machine Memory

- 31.8 Managing Virtual Machine Snapshots

- 31.9 Suspending and Resuming Virtual Machine Execution

- 31.10 Live Migration

- 31.11 QMP - QEMU Machine Protocol

- VI Managing Virtual Machines with LXC

- Glossary

- A Appendix

- B XM, XL Toolstacks and Libvirt framework

- C GNU Licenses

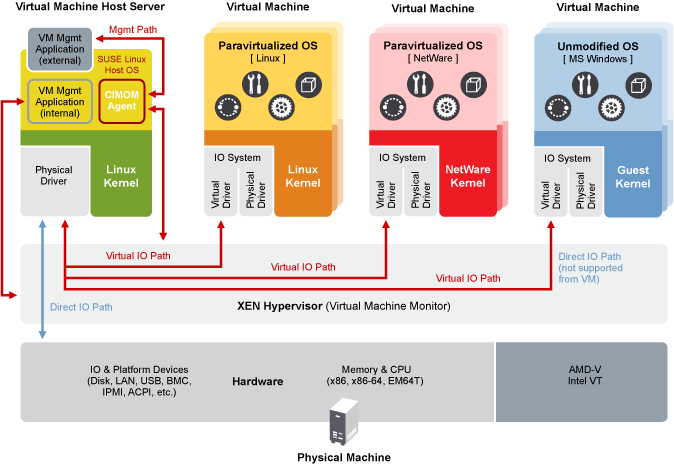

- 2.1 Xen Virtualization Architecture

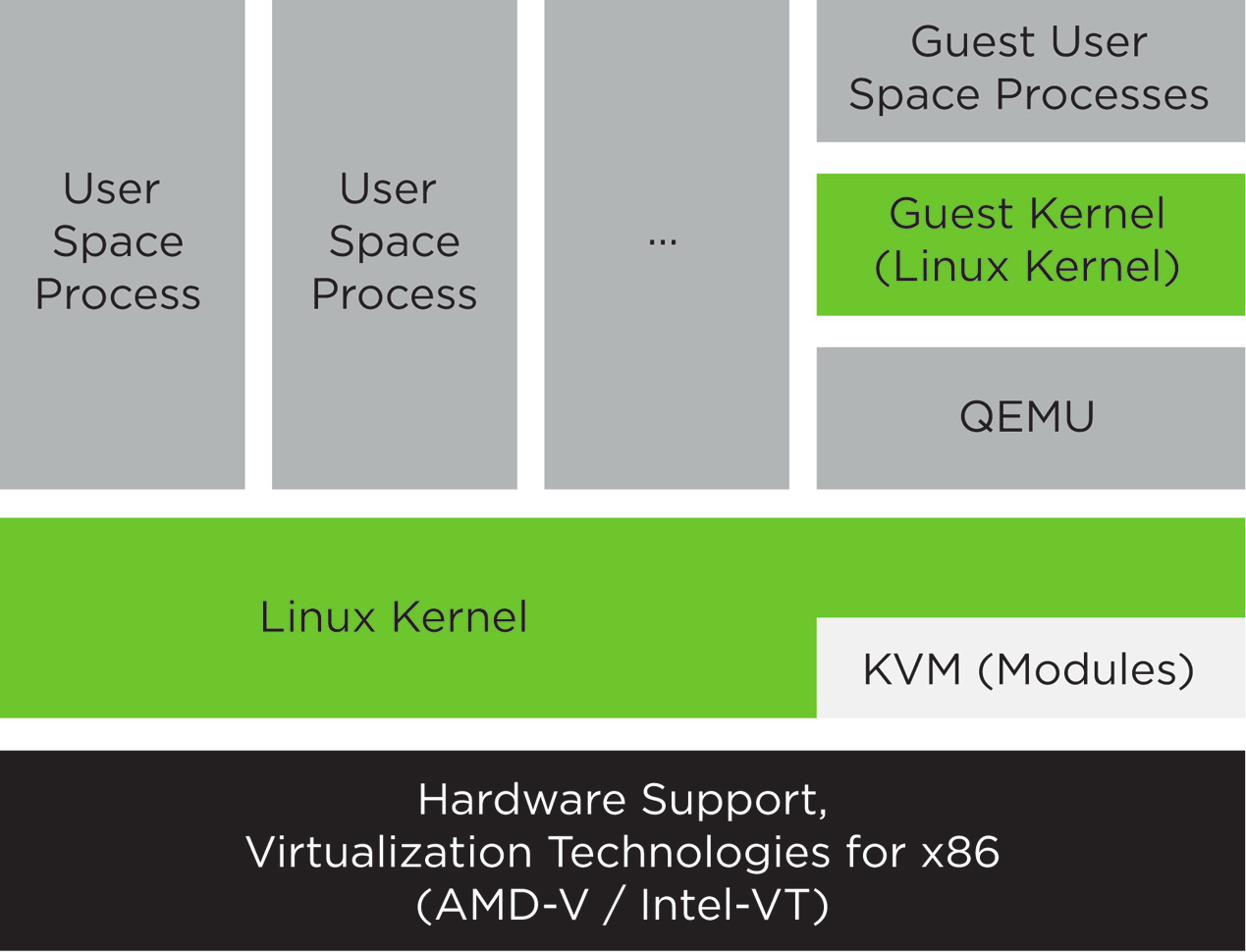

- 3.1 KVM Virtualization Architecture

- 11.1 Connection Details

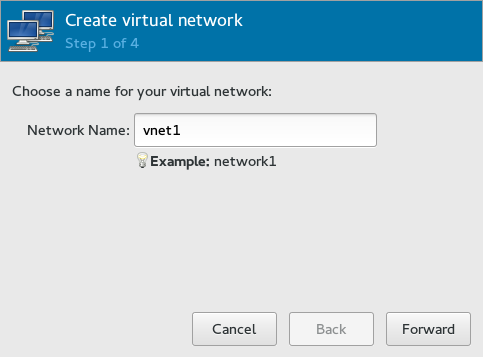

- 11.2 Create virtual network

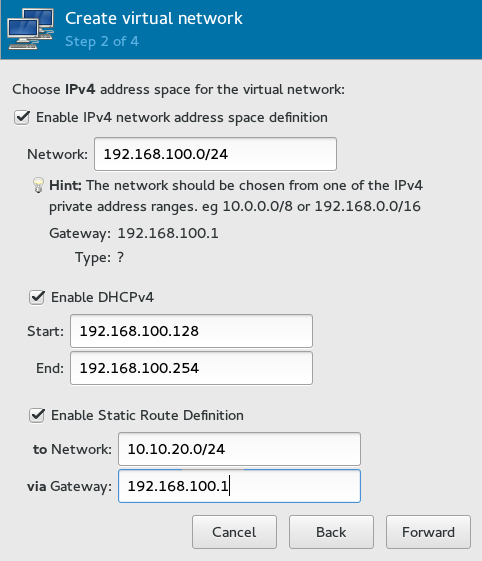

- 11.3 Create virtual network

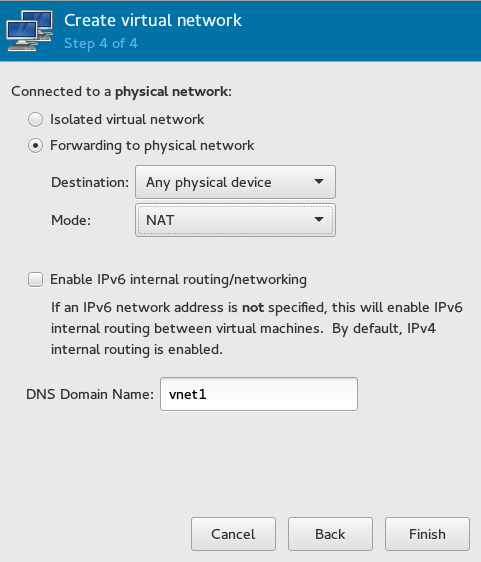

- 11.4 Create virtual network

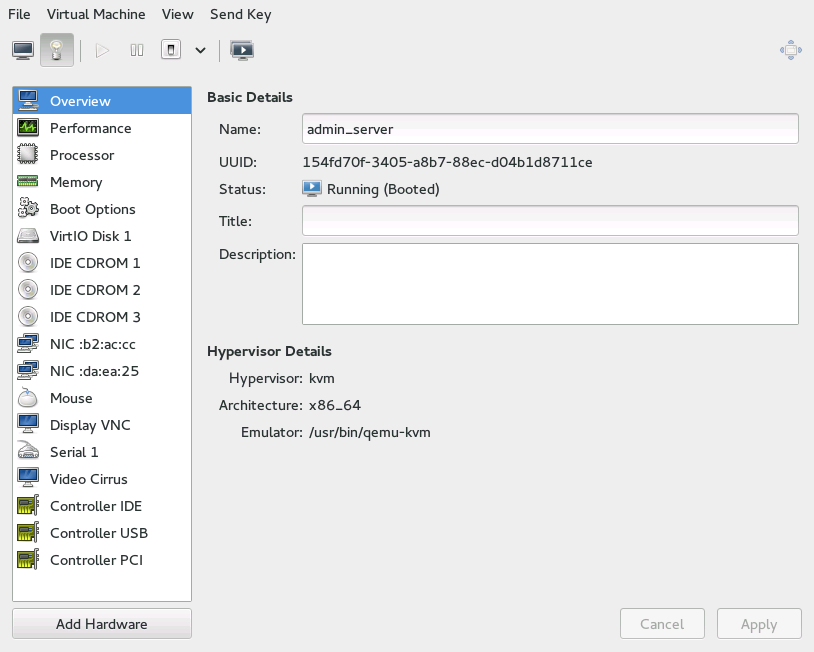

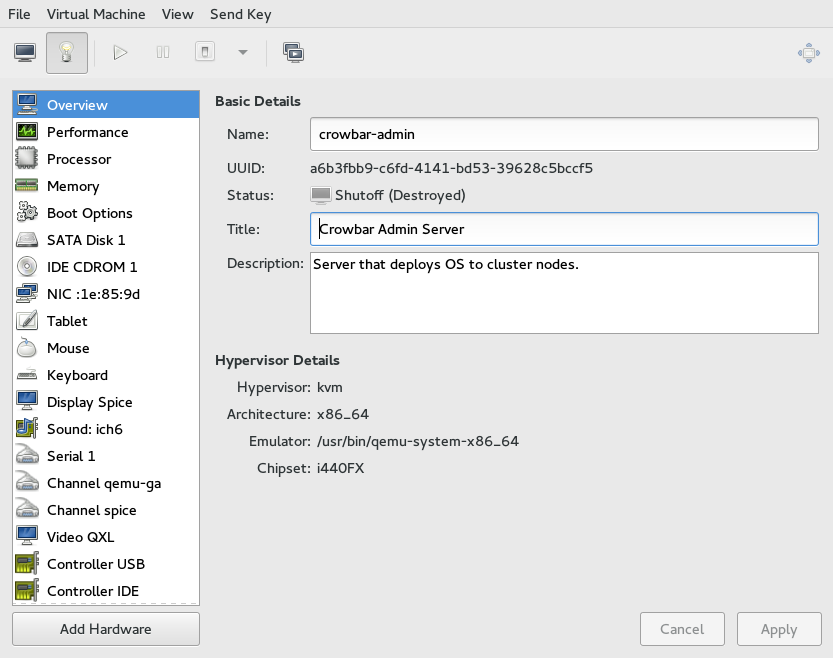

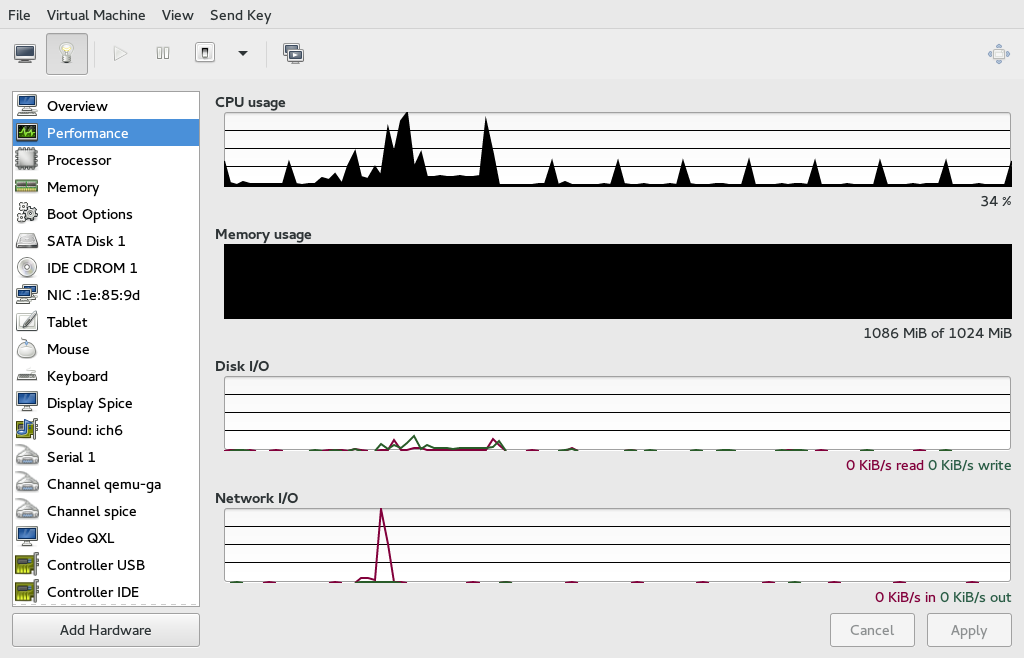

- 12.1 View of a VM Guest

- 12.2 Overview details

- 12.3 VM Guest Title and Description

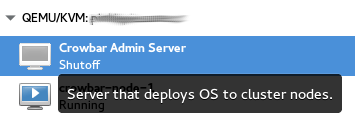

- 12.4 Performance

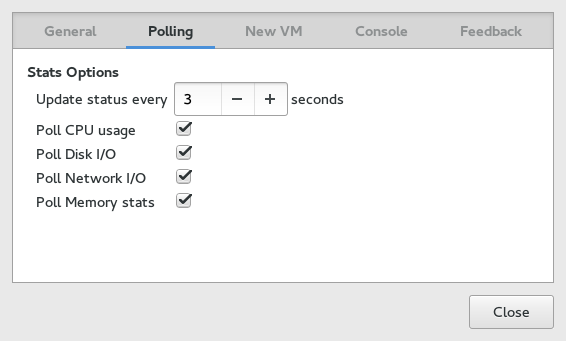

- 12.5 Statistics Charts

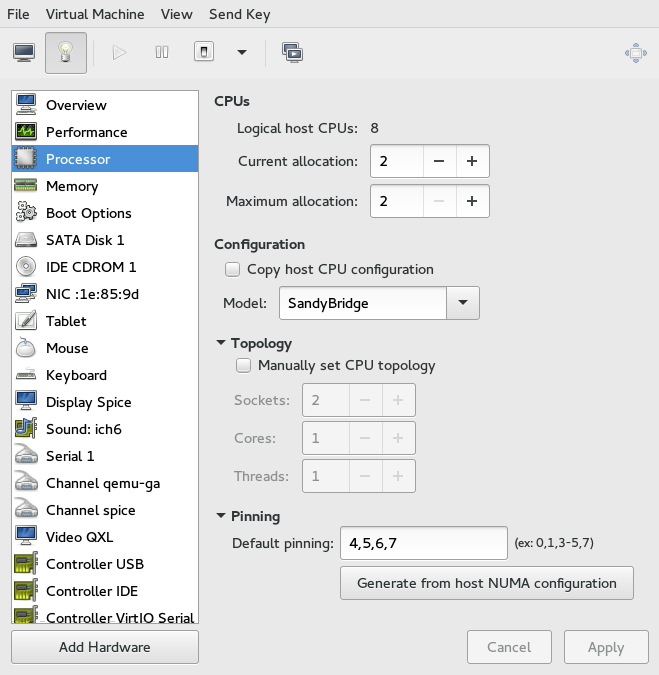

- 12.6 Processor View

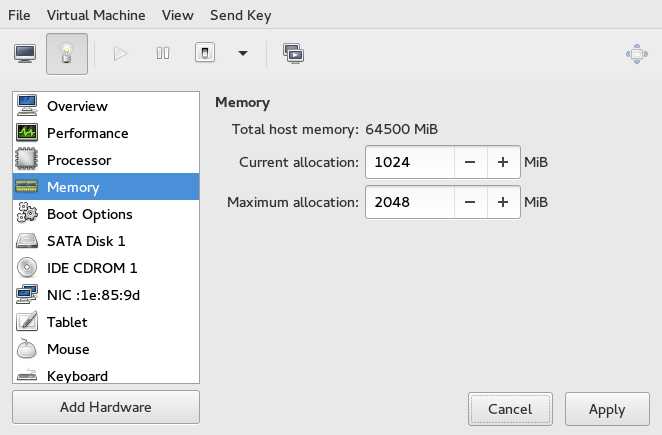

- 12.7 Memory View

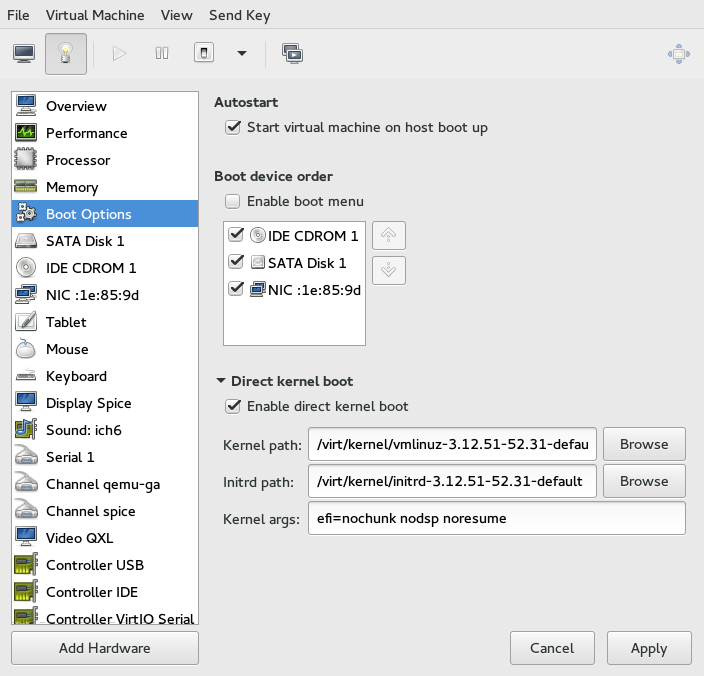

- 12.8 Boot Options

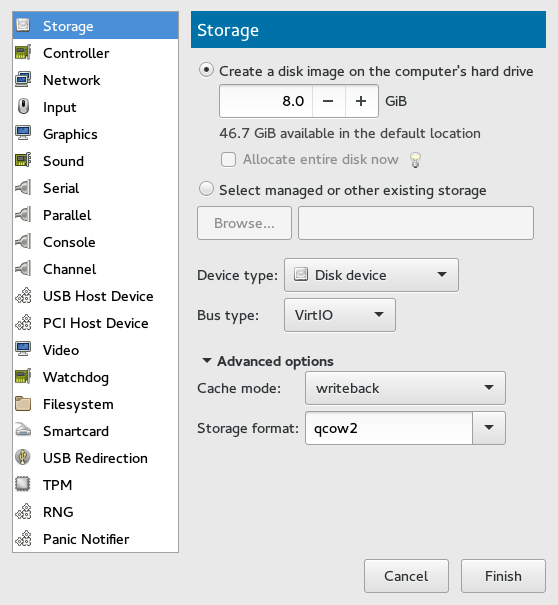

- 12.9 Add a New Storage

- 12.10 Add a New Controller

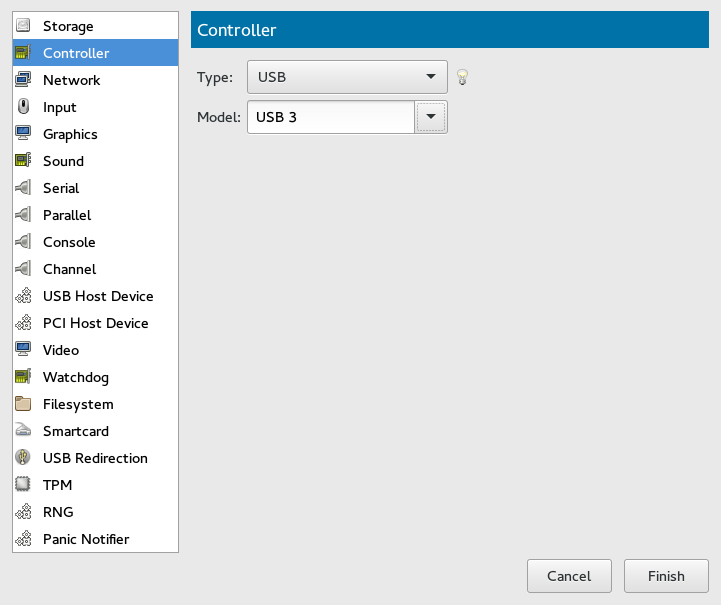

- 12.11 Add a New Controller

- 12.12 Add a New Input Device

- 12.13 Add a New Video Device

- 12.14 Add a New USB Redirector

- 12.15 Adding a PCI Device

- 12.16 Adding a USB Device

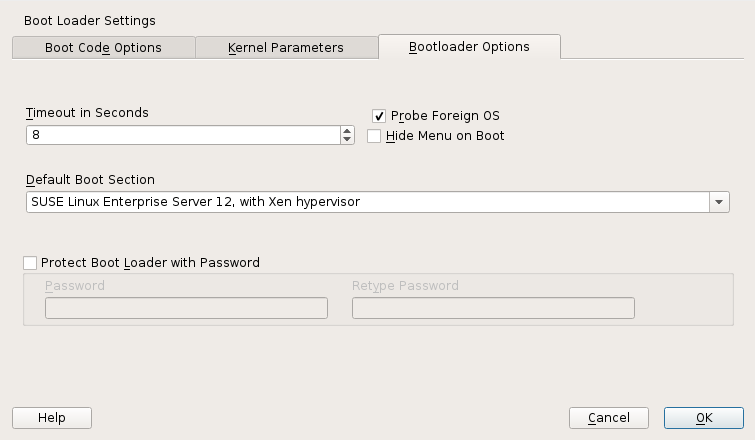

- 24.1 Boot Loader Settings

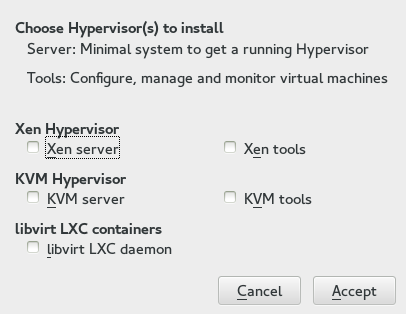

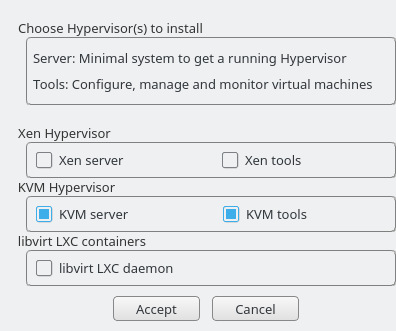

- 28.1 Installing the KVM Hypervisor and Tools

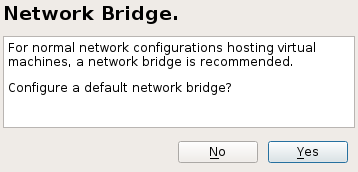

- 28.2 Network Bridge

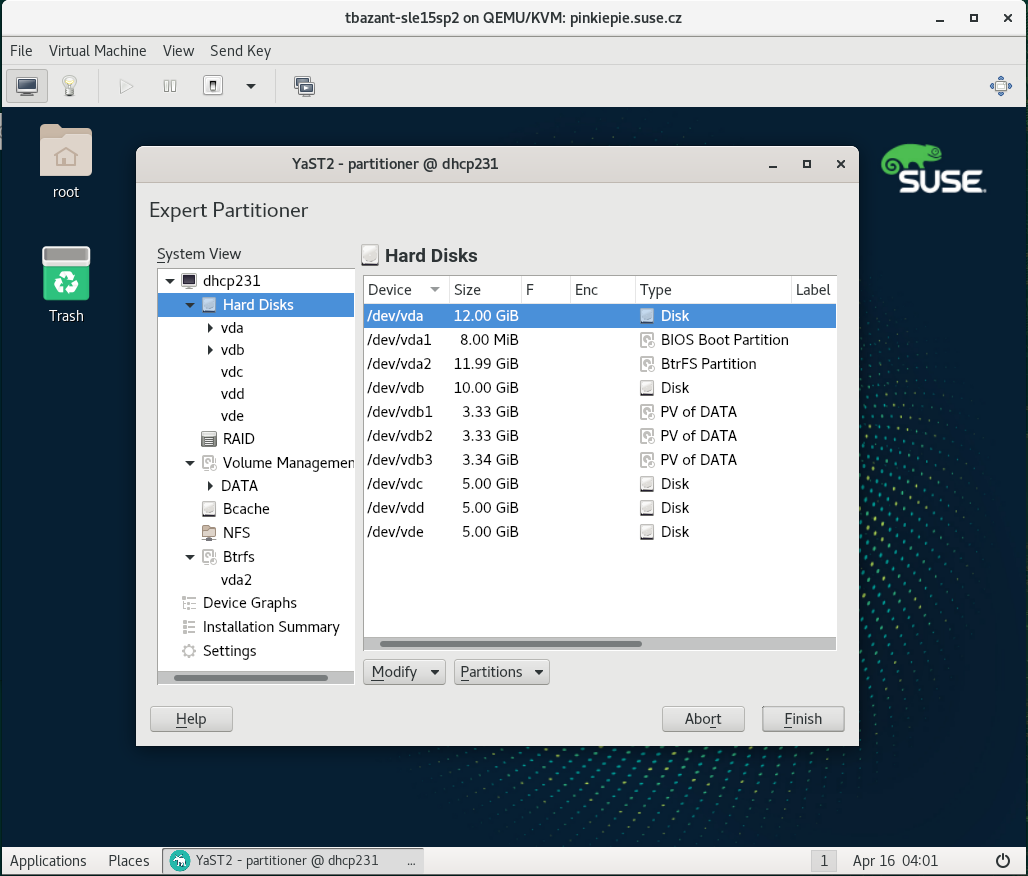

- 29.1 New 2 GB Partition in Guest YaST Partitioner

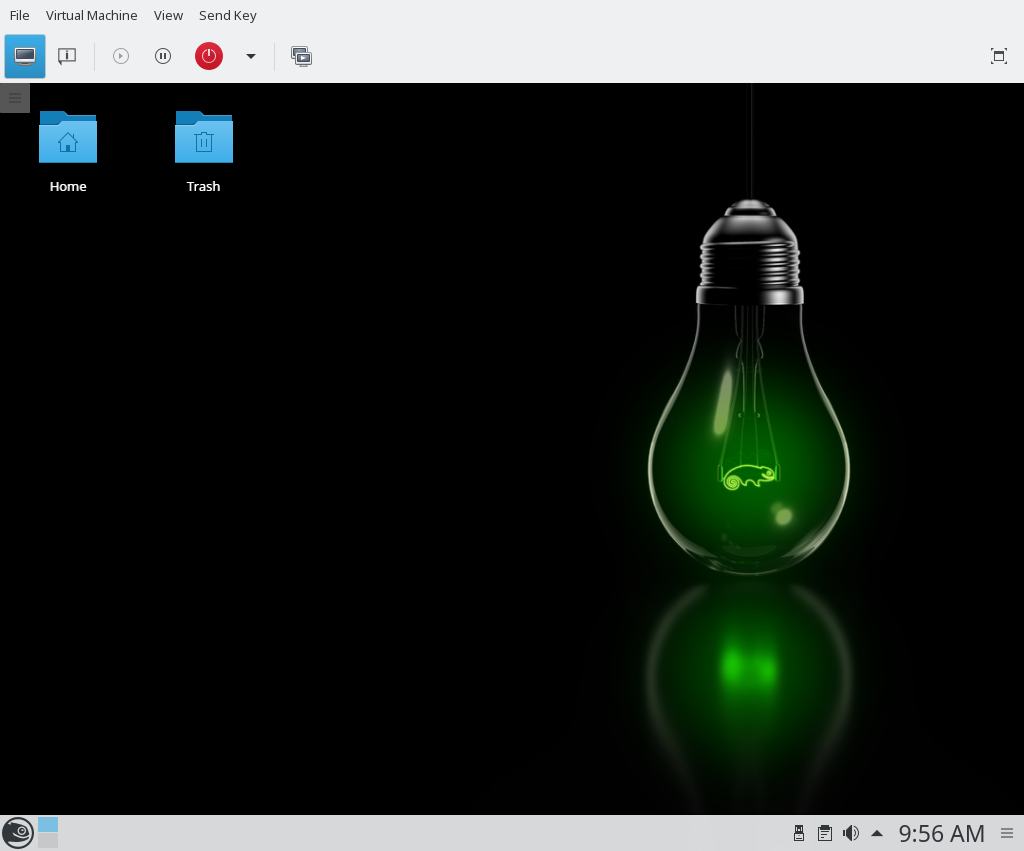

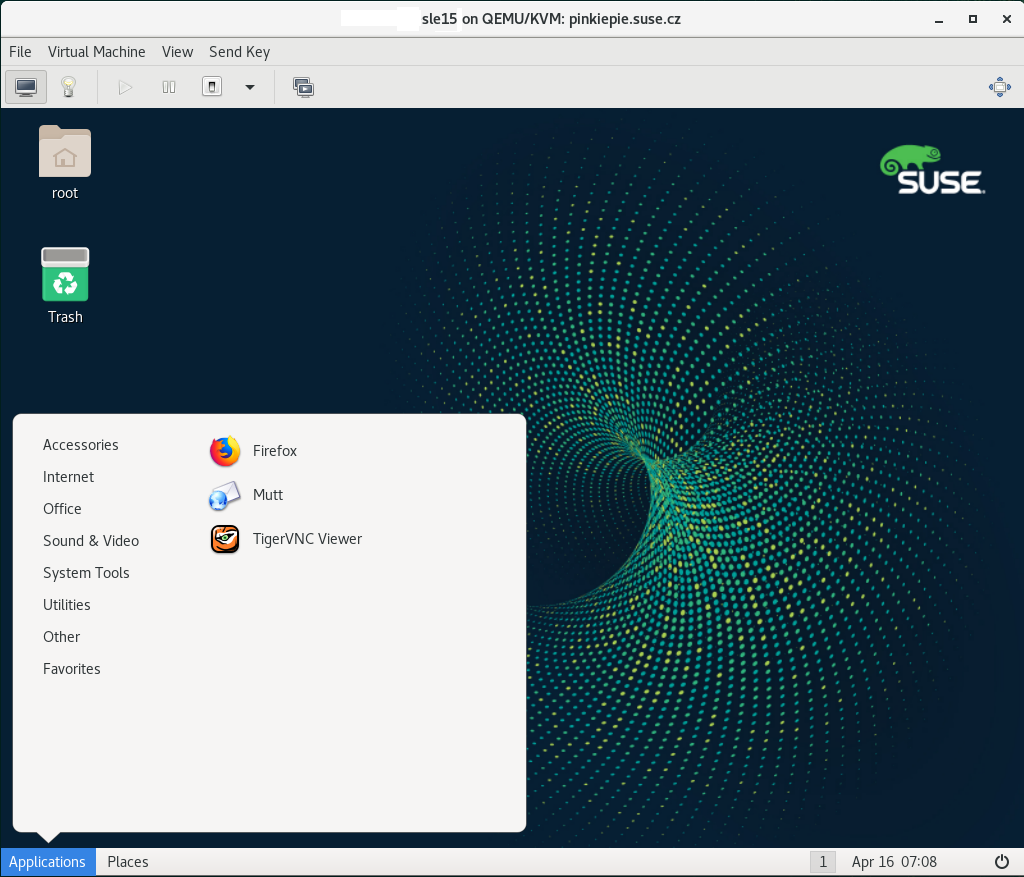

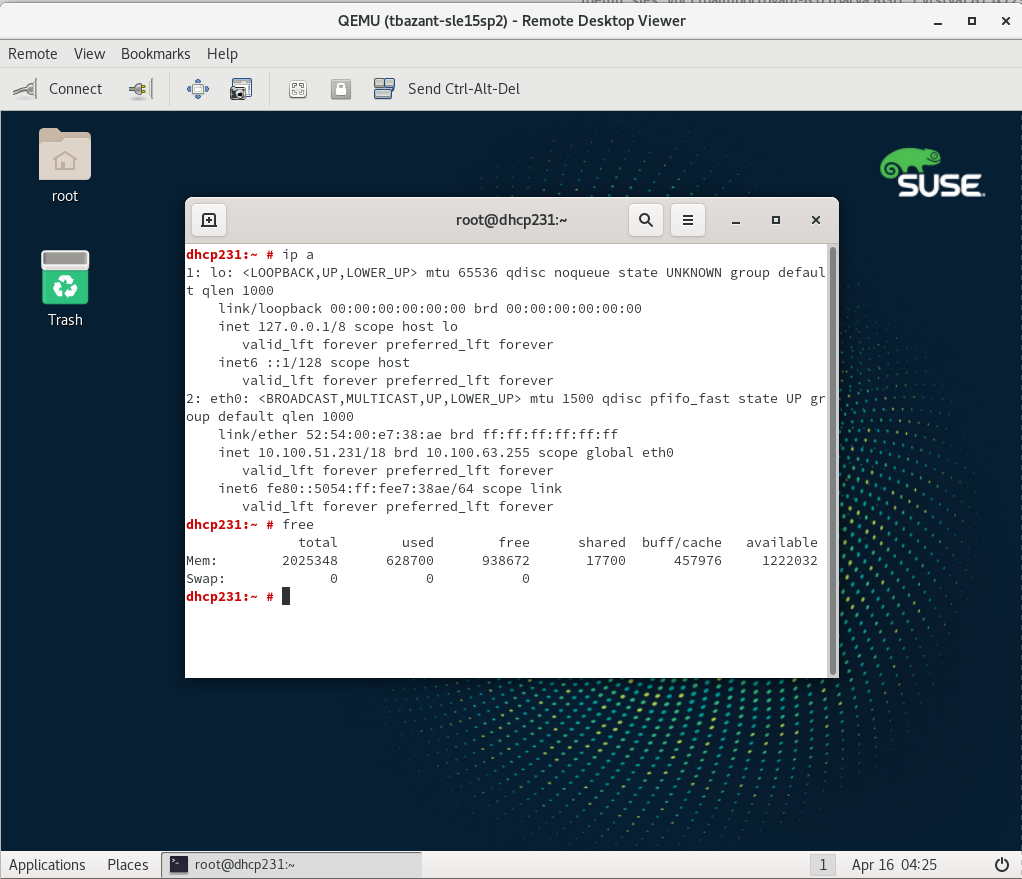

- 30.1 QEMU Window with SLES as VM Guest

- 30.2 QEMU VNC Session

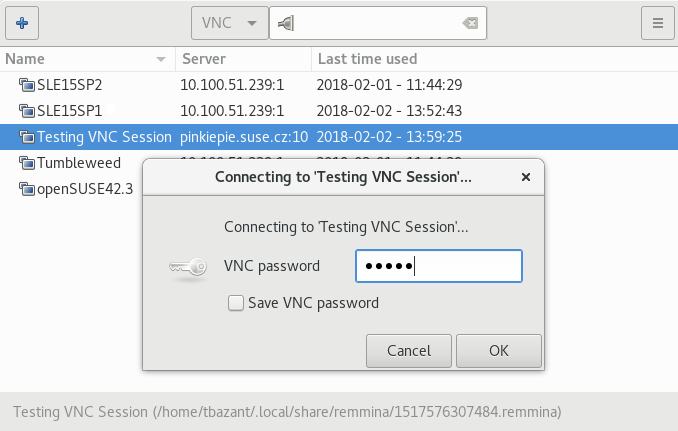

- 30.3 Authentication Dialog in Remmina

- 26.1 Xen Remote Storage

- B.1 Notation Conventions

- B.2 New Global Options

- B.3 Common Options

- B.4 Domain Management Removed Options

- B.5 USB Devices Management Removed Options

- B.6 CPU Management Removed options

- B.7 Other Options

- B.8

xlcreateChanged Options - B.9

xmcreateRemoved Options - B.10

xlcreateAdded Options - B.11

xlconsoleAdded Options - B.12

xminfoRemoved Options - B.13

xmdump-coreRemoved Options - B.14

xmlistRemoved Options - B.15

xllistAdded Options - B.16

xlmem-*Changed Options - B.17

xmmigrateRemoved Options - B.18

xlmigrateAdded Options - B.19

xmrebootRemoved Options - B.20

xlrebootAdded Options - B.21

xlsaveAdded Options - B.22

xlrestoreAdded Options - B.23

xmshutdownRemoved Options - B.24

xlshutdownAdded Options - B.25

xltriggerChanged Options - B.26

xmsched-creditRemoved Options - B.27

xlsched-creditAdded Options - B.28

xmsched-credit2Removed Options - B.29

xlsched-credit2Added Options - B.30

xmsched-sedfRemoved Options - B.31

xlsched-sedfAdded Options - B.32

xmcpupool-listRemoved Options - B.33

xmcpupool-createRemoved Options - B.34

xlpci-detachAdded Options - B.35

xmblock-listRemoved Options - B.36 Other Options

- B.37 Network Options

- B.38

xlnetwork-attachRemoved Options - B.39 New Options

- 7.1 Loading Kernel and Initrd from HTTP Server

- 7.2 Example of a

virt-installcommand line - 8.1 Typical Output of

kvm_stat - 11.1 NAT Based Network

- 11.2 Routed Network

- 11.3 Isolated Network

- 11.4 Using an Existing Bridge on VM Host Server

- 13.1 Example XML Configuration File

- 21.1 Guest Domain Configuration File for SLED 12:

/etc/xen/sled12.cfg - 28.1 Exporting Host's File System with VirtFS

- 30.1 Restricted User-mode Networking

- 30.2 User-mode Networking with Custom IP Range

- 30.3 User-mode Networking with Network-boot and TFTP

- 30.4 User-mode Networking with Host Port Forwarding

- 30.5 Password Authentication

- 30.6 x509 Certificate Authentication

- 30.7 x509 Certificate and Password Authentication

- 30.8 SASL Authentication

- B.1 Converting Xen Domain Configuration to

libvirt

Copyright © 2006– 2020 SUSE LLC and contributors. All rights reserved.

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.2 or (at your option) version 1.3; with the Invariant Section being this copyright notice and license. A copy of the license version 1.2 is included in the section entitled “GNU Free Documentation License”.

For SUSE trademarks, see https://www.suse.com/company/legal/. All other third-party trademarks are the property of their respective owners. Trademark symbols (®, ™ etc.) denote trademarks of SUSE and its affiliates. Asterisks (*) denote third-party trademarks.

All information found in this book has been compiled with utmost attention to detail. However, this does not guarantee complete accuracy. Neither SUSE LLC, its affiliates, the authors nor the translators shall be held liable for possible errors or the consequences thereof.

About This Manual #Edit source

This manual offers an introduction to setting up and managing

virtualization with KVM (Kernel-based Virtual Machine), Xen, and

Linux Containers (LXC) on openSUSE Leap. The first part introduces the

different virtualization solutions by describing their requirements, their

installations and SUSE's support status. The second part deals with

managing VM Guests and VM Host Servers with libvirt. The following

parts describe various administration tasks and practices and the last

three parts deal with hypervisor-specific topics.

1 Available Documentation #Edit source

Note: Online Documentation and Latest Updates

Documentation for our products is available at http://doc.opensuse.org/, where you can also find the latest updates, and browse or download the documentation in various formats. The latest documentation updates are usually available in the English version of the documentation.

The following documentation is available for this product:

- Book “Start-Up”

This manual will see you through your initial contact with openSUSE® Leap. Check out the various parts of this manual to learn how to install, use and enjoy your system.

- Book “Reference”

Covers system administration tasks like maintaining, monitoring and customizing an initially installed system.

- Virtualization Guide

Describes virtualization technology in general, and introduces libvirt—the unified interface to virtualization—and detailed information on specific hypervisors.

- Book “AutoYaST Guide”

AutoYaST is a system for unattended mass deployment of openSUSE Leap systems using an AutoYaST profile containing installation and configuration data. The manual guides you through the basic steps of auto-installation: preparation, installation, and configuration.

- Book “Security and Hardening Guide”

Introduces basic concepts of system security, covering both local and network security aspects. Shows how to use the product inherent security software like AppArmor, SELinux, or the auditing system that reliably collects information about any security-relevant events. Supports the administrator with security-related choices and decisions in installing and setting up a secure SUSE Linux Enterprise Server and additional processes to further secure and harden that installation.

- Book “System Analysis and Tuning Guide”

An administrator's guide for problem detection, resolution and optimization. Find how to inspect and optimize your system by means of monitoring tools and how to efficiently manage resources. Also contains an overview of common problems and solutions and of additional help and documentation resources.

- Book “GNOME User Guide”

Introduces the GNOME desktop of openSUSE Leap. It guides you through using and configuring the desktop and helps you perform key tasks. It is intended mainly for end users who want to make efficient use of GNOME as their default desktop.

The release notes for this product are available at https://www.suse.com/releasenotes/.

2 Giving Feedback #Edit source

Your feedback and contributions to this documentation are welcome! Several channels are available:

- Bug Reports

Report issues with the documentation at https://bugzilla.opensuse.org/. To simplify this process, you can use the links next to headlines in the HTML version of this document. These preselect the right product and category in Bugzilla and add a link to the current section. You can start typing your bug report right away. A Bugzilla account is required.

- Contributions

To contribute to this documentation, use the links next to headlines in the HTML version of this document. They take you to the source code on GitHub, where you can open a pull request. A GitHub account is required.

For more information about the documentation environment used for this documentation, see the repository's README.

Alternatively, you can report errors and send feedback concerning the documentation to <doc-team@suse.com>. Make sure to include the document title, the product version and the publication date of the documentation. Refer to the relevant section number and title (or include the URL) and provide a concise description of the problem.

- Help

If you need further help on openSUSE Leap, see https://en.opensuse.org/Portal:Support.

3 Documentation Conventions #Edit source

The following notices and typographical conventions are used in this documentation:

/etc/passwd: directory names and file namesPLACEHOLDER: replace PLACEHOLDER with the actual value

PATH: the environment variable PATHls,--help: commands, options, and parametersuser: users or groupspackage name : name of a package

Alt, Alt–F1: a key to press or a key combination; keys are shown in uppercase as on a keyboard

, › : menu items, buttons

Dancing Penguins (Chapter Penguins, ↑Another Manual): This is a reference to a chapter in another manual.

Commands that must be run with

rootprivileges. Often you can also prefix these commands with thesudocommand to run them as non-privileged user.root #commandtux >sudocommandCommands that can be run by non-privileged users.

tux >commandNotices

Warning: Warning Notice

Vital information you must be aware of before proceeding. Warns you about security issues, potential loss of data, damage to hardware, or physical hazards.

Important: Important Notice

Important information you should be aware of before proceeding.

Note: Note Notice

Additional information, for example about differences in software versions.

Tip: Tip Notice

Helpful information, like a guideline or a piece of practical advice.

Part I Introduction #Edit source

- 1 Virtualization Technology

Virtualization is a technology that provides a way for a machine (Host) to run another operating system (guest virtual machines) on top of the host operating system.

- 2 Introduction to Xen Virtualization

This chapter introduces and explains the components and technologies you need to understand to set up and manage a Xen-based virtualization environment.

- 3 Introduction to KVM Virtualization

- 4 Virtualization Tools

libvirtis a library that provides a common API for managing popular virtualization solutions, among them KVM, LXC, and Xen. The library provides a normalized management API for these virtualization solutions, allowing a stable, cross-hypervisor interface for higher-level management tools. The library also provides APIs for management of virtual networks and storage on the VM Host Server. The configuration of each VM Guest is stored in an XML file.With

libvirtyou can also manage your VM Guests remotely. It supports TLS encryption, x509 certificates and authentication with SASL. This enables managing VM Host Servers centrally from a single workstation, alleviating the need to access each VM Host Server individually.Using the

libvirt-based tools is the recommended way of managing VM Guests. Interoperability betweenlibvirtandlibvirt-based applications has been tested and is an essential part of SUSE's support stance.- 5 Installation of Virtualization Components

Depending on the scope of the installation, none of the virtualization tools may be installed on your system. They will be automatically installed when configuring the hypervisor with the YaST module Virtualization › Install Hypervisor and Tools. In case this module is not available in YaST, install…

1 Virtualization Technology #Edit source

Virtualization is a technology that provides a way for a machine (Host) to run another operating system (guest virtual machines) on top of the host operating system.

1.1 Overview #Edit source

openSUSE Leap includes the latest open source virtualization technologies, Xen and KVM. With these hypervisors, openSUSE Leap can be used to provision, de-provision, install, monitor and manage multiple virtual machines (VM Guests) on a single physical system (for more information see Hypervisor).

Out of the box, openSUSE Leap can create virtual machines running both modified, highly tuned, paravirtualized operating systems and fully virtualized unmodified operating systems. Full virtualization allows the guest OS to run unmodified and requires the presence of AMD64/Intel 64 processors which support either Intel* Virtualization Technology (Intel VT) or AMD* Virtualization (AMD-V).

The primary component of the operating system that enables virtualization is a hypervisor (or virtual machine manager), which is a layer of software that runs directly on server hardware. It controls platform resources, sharing them among multiple VM Guests and their operating systems by presenting virtualized hardware interfaces to each VM Guest.

openSUSE is a Linux server operating system that offers two types of hypervisors: Xen and KVM.

Both hypervisors support virtualization on the AMD64/Intel 64 architecture. For the POWER architecture, KVM is supported. Both Xen and KVM support full virtualization mode. In addition, Xen supports paravirtualized mode, and you can run both paravirtualized and fully virtualized guests on the same host.

openSUSE Leap with Xen or KVM acts as a virtualization host server (VHS) that supports VM Guests with its own guest operating systems. The SUSE VM Guest architecture consists of a hypervisor and management components that constitute the VHS, which runs many application-hosting VM Guests.

In Xen, the management components run in a privileged VM Guest often called Dom0. In KVM, where the Linux kernel acts as the hypervisor, the management components run directly on the VHS.

1.2 Virtualization Capabilities #Edit source

Virtualization design provides many capabilities to your organization. Virtualization of operating systems is used in many computing areas:

Server consolidation: Many servers can be replaced by one big physical server, so hardware is consolidated, and Guest Operating Systems are converted to virtual machine. It provides the ability to run legacy software on new hardware.

Isolation: guest operating system can be fully isolated from the Host running it. So if the virtual machine is corrupted, the Host system is not harmed.

Migration: A process to move a running virtual machine to another physical machine. Live migration is an extended feature that allows this move without disconnection of the client or the application.

Disaster recovery: Virtualized guests are less dependent on the hardware, and the Host server provides snapshot features to be able to restore a known running system without any corruption.

Dynamic load balancing: A migration feature that brings a simple way to load-balance your service across your infrastructure.

1.3 Virtualization Benefits #Edit source

Virtualization brings a lot of advantages while providing the same service as a hardware server.

First, it reduces the cost of your infrastructure. Servers are mainly used to provide a service to a customer, and a virtualized operating system can provide the same service, with:

Less hardware: You can run several operating system on one host, so all hardware maintenance will be reduced.

Less power/cooling: Less hardware means you do not need to invest more in electric power, backup power, and cooling if you need more service.

Save space: Your data center space will be saved because you do not need more hardware servers (less servers than service running).

Less management: Using a VM Guest simplifies the administration of your infrastructure.

Agility and productivity: Virtualization provides migration capabilities, live migration and snapshots. These features reduce downtime, and bring an easy way to move your service from one place to another without any service interruption.

1.4 Virtualization Modes #Edit source

Guest operating systems are hosted on virtual machines in either full virtualization (FV) mode or paravirtual (PV) mode. Each virtualization mode has advantages and disadvantages.

Full virtualization mode lets virtual machines run unmodified operating systems, such as Windows* Server 2003. It can use either Binary Translation or hardware-assisted virtualization technology, such as AMD* Virtualization or Intel* Virtualization Technology. Using hardware assistance allows for better performance on processors that support it.

To be able to run under paravirtual mode, guest operating systems usually need to be modified for the virtualization environment. However, operating systems running in paravirtual mode have better performance than those running under full virtualization.

Operating systems currently modified to run in paravirtual mode are called paravirtualized operating systems and include openSUSE Leap and NetWare® 6.5 SP8.

1.5 I/O Virtualization #Edit source

VM Guests not only share CPU and memory resources of the host system, but also the I/O subsystem. Because software I/O virtualization techniques deliver less performance than bare metal, hardware solutions that deliver almost “native” performance have been developed recently. openSUSE Leap supports the following I/O virtualization techniques:

- Full Virtualization

Fully Virtualized (FV) drivers emulate widely supported real devices, which can be used with an existing driver in the VM Guest. The guest is also called Hardware Virtual Machine (HVM). Since the physical device on the VM Host Server may differ from the emulated one, the hypervisor needs to process all I/O operations before handing them over to the physical device. Therefore all I/O operations need to traverse two software layers, a process that not only significantly impacts I/O performance, but also consumes CPU time.

- Paravirtualization

Paravirtualization (PV) allows direct communication between the hypervisor and the VM Guest. With less overhead involved, performance is much better than with full virtualization. However, paravirtualization requires either the guest operating system to be modified to support the paravirtualization API or paravirtualized drivers.

- PVHVM

This type of virtualization enhances HVM (see Full Virtualization) with paravirtualized (PV) drivers, and PV interrupt and timer handling.

- VFIO

VFIO stands for Virtual Function I/O and is a new user-level driver framework for Linux. It replaces the traditional KVM PCI Pass-Through device assignment. The VFIO driver exposes direct device access to user space in a secure memory (IOMMU) protected environment. With VFIO, a VM Guest can directly access hardware devices on the VM Host Server (pass-through), avoiding performance issues caused by emulation in performance critical paths. This method does not allow to share devices—each device can only be assigned to a single VM Guest. VFIO needs to be supported by the VM Host Server CPU, chipset and the BIOS/EFI.

Compared to the legacy KVM PCI device assignment, VFIO has the following advantages:

Resource access is compatible with secure boot.

Device is isolated and its memory access protected.

Offers a user space device driver with more flexible device ownership model.

Is independent of KVM technology, and not bound to x86 architecture only.

As of openSUSE 42.2, the USB and PCI Pass-through methods of device assignment are considered deprecated and were superseded by the VFIO model.

- SR-IOV

The latest I/O virtualization technique, Single Root I/O Virtualization SR-IOV combines the benefits of the aforementioned techniques—performance and the ability to share a device with several VM Guests. SR-IOV requires special I/O devices, that are capable of replicating resources so they appear as multiple separate devices. Each such “pseudo” device can be directly used by a single guest. However, for network cards for example the number of concurrent queues that can be used is limited, potentially reducing performance for the VM Guest compared to paravirtualized drivers. On the VM Host Server, SR-IOV must be supported by the I/O device, the CPU and chipset, the BIOS/EFI and the hypervisor—for setup instructions see Section 12.12, “Assigning a Host PCI Device to a VM Guest”.

Important: Requirements for VFIO and SR-IOV

To be able to use the VFIO and SR-IOV features, the VM Host Server needs to fulfill the following requirements:

IOMMU needs to be enabled in the BIOS/EFI.

For Intel CPUs, the kernel parameter

intel_iommu=onneeds to be provided on the kernel command line. For more information, see Book “Reference”, Chapter 12 “The Boot Loader GRUB 2”, Section 12.3.3.2 “ Tab”.The VFIO infrastructure needs to be available. This can be achieved by loading the kernel module

vfio_pci. For more information, see Book “Reference”, Chapter 10 “ThesystemdDaemon”, Section 10.6.4 “Loading Kernel Modules”.

2 Introduction to Xen Virtualization #Edit source

This chapter introduces and explains the components and technologies you need to understand to set up and manage a Xen-based virtualization environment.

2.1 Basic Components #Edit source

The basic components of a Xen-based virtualization environment are the Xen hypervisor, the Dom0, any number of other VM Guests, and the tools, commands, and configuration files that let you manage virtualization. Collectively, the physical computer running all these components is called a VM Host Server because together these components form a platform for hosting virtual machines.

- The Xen Hypervisor

The Xen hypervisor, sometimes simply called a virtual machine monitor, is an open source software program that coordinates the low-level interaction between virtual machines and physical hardware.

- The Dom0

The virtual machine host environment, also called Dom0 or controlling domain, is composed of several components, such as:

openSUSE Leap provides a graphical and a command line environment to manage the virtual machine host components and its virtual machines.

Note

The term “Dom0” refers to a special domain that provides the management environment. This may be run either in graphical or in command line mode.

The xl tool stack based on the xenlight library (libxl). Use it to manage Xen guest domains.

QEMU—an open source software that emulates a full computer system, including a processor and various peripherals. It provides the ability to host operating systems in both full virtualization or paravirtualization mode.

- Xen-Based Virtual Machines

A Xen-based virtual machine, also called a VM Guest or DomU, consists of the following components:

At least one virtual disk that contains a bootable operating system. The virtual disk can be based on a file, partition, volume, or other type of block device.

A configuration file for each guest domain. It is a text file following the syntax described in the manual page

man 5 xl.conf.Several network devices, connected to the virtual network provided by the controlling domain.

- Management Tools, Commands, and Configuration Files

There is a combination of GUI tools, commands, and configuration files to help you manage and customize your virtualization environment.

2.2 Xen Virtualization Architecture #Edit source

The following graphic depicts a virtual machine host with four virtual machines. The Xen hypervisor is shown as running directly on the physical hardware platform. Note that the controlling domain is also a virtual machine, although it has several additional management tasks compared to all the other virtual machines.

Figure 2.1: Xen Virtualization Architecture #

On the left, the virtual machine host’s Dom0 is shown running the openSUSE Leap operating system. The two virtual machines shown in the middle are running paravirtualized operating systems. The virtual machine on the right shows a fully virtual machine running an unmodified operating system, such as the latest version of Microsoft Windows/Server.

3 Introduction to KVM Virtualization #Edit source

3.1 Basic Components #Edit source

KVM is a full virtualization solution for the AMD64/Intel 64 architecture supporting hardware virtualization.

VM Guests (virtual machines), virtual storage, and virtual networks

can be managed with QEMU tools directly, or with the

libvirt-based stack. The QEMU tools include

qemu-system-ARCH, the QEMU monitor,

qemu-img, and qemu-ndb. A

libvirt-based stack includes libvirt itself, along with

libvirt-based applications such as virsh,

virt-manager, virt-install, and

virt-viewer.

3.2 KVM Virtualization Architecture #Edit source

This full virtualization solution consists of two main components:

A set of kernel modules (

kvm.ko,kvm-intel.ko, andkvm-amd.ko) that provides the core virtualization infrastructure and processor-specific drivers.A user space program (

qemu-system-ARCH) that provides emulation for virtual devices and control mechanisms to manage VM Guests (virtual machines).

The term KVM more properly refers to the kernel level virtualization functionality, but is in practice more commonly used to refer to the user space component.

Figure 3.1: KVM Virtualization Architecture #

Note: Hyper-V Emulation Support

QEMU can provide certain Hyper-V hypercalls for Windows* guests to partly emulate a Hyper-V environment. This can be used to achieve better behavior for Windows* guests that are Hyper-V enabled.

4 Virtualization Tools #Edit source

libvirt is a library that provides a common API for managing popular

virtualization solutions, among them KVM, LXC, and Xen. The library

provides a normalized management API for these virtualization solutions,

allowing a stable, cross-hypervisor interface for higher-level management

tools. The library also provides APIs for management of virtual networks

and storage on the VM Host Server. The configuration of each VM Guest is stored

in an XML file.

With libvirt you can also manage your VM Guests remotely. It supports

TLS encryption, x509 certificates and authentication with SASL. This

enables managing VM Host Servers centrally from a single workstation,

alleviating the need to access each VM Host Server individually.

Using the libvirt-based tools is the recommended way of managing

VM Guests. Interoperability between libvirt and libvirt-based

applications has been tested and is an essential part of SUSE's support

stance.

4.1 Virtualization Console Tools #Edit source

The following libvirt-based tools for the command line are available on openSUSE Leap:

virsh(Package: libvirt-client)A command line tool to manage VM Guests with similar functionality as the Virtual Machine Manager. Allows you to change a VM Guest's status (start, stop, pause, etc.), to set up new guests and devices, or to edit existing configurations.

virshis also useful to script VM Guest management operations.virshtakes the first argument as a command and further arguments as options to this command:virsh [-c URI] COMMAND DOMAIN-ID [OPTIONS]

Like

zypper,virshcan also be called without a command. In this case it starts a shell waiting for your commands. This mode is useful when having to run subsequent commands:~> virsh -c qemu+ssh://wilber@mercury.example.com/system Enter passphrase for key '/home/wilber/.ssh/id_rsa': Welcome to virsh, the virtualization interactive terminal. Type: 'help' for help with commands 'quit' to quit virsh # hostname mercury.example.comvirt-install(Package: virt-install)A command line tool for creating new VM Guests using the

libvirtlibrary. It supports graphical installations via VNC or SPICE protocols. Given suitable command line arguments,virt-installcan run completely unattended. This allows for easy automation of guest installs.virt-installis the default installation tool used by the Virtual Machine Manager.

4.2 Virtualization GUI Tools #Edit source

The following libvirt-based graphical tools are available on openSUSE Leap. All tools are provided by packages carrying the tool's name.

- Virtual Machine Manager (Package: virt-manager)

The Virtual Machine Manager is a desktop tool for managing VM Guests. It provides the ability to control the lifecycle of existing machines (start/shutdown, pause/resume, save/restore) and create new VM Guests. It allows managing various types of storage and virtual networks. It provides access to the graphical console of VM Guests with a built-in VNC viewer and can be used to view performance statistics.

virt-managersupports connecting to a locallibvirtd, managing a local VM Host Server, or a remotelibvirtdmanaging a remote VM Host Server.To start the Virtual Machine Manager, enter

virt-managerat the command prompt.

Note

To disable automatic USB device redirection for VM Guest using spice, either launch

virt-managerwith the--spice-disable-auto-usbredirparameter or run the following command to persistently change the default behavior:tux >dconf write /org/virt-manager/virt-manager/console/auto-redirect falsevirt-viewer(Package: virt-viewer)A viewer for the graphical console of a VM Guest. It uses SPICE (configured by default on the VM Guest) or VNC protocols and supports TLS and x509 certificates. VM Guests can be accessed by name, ID, or UUID. If the guest is not already running, the viewer can be told to wait until the guest starts, before attempting to connect to the console.

virt-vieweris not installed by default and is available after installing the packagevirt-viewer.

Note

To disable automatic USB device redirection for VM Guest using spice, add an empty filter using the

--spice-usbredir-auto-redirect-filter=''parameter.yast2 vm(Package: yast2-vm)A YaST module that simplifies the installation of virtualization tools and can set up a network bridge:

5 Installation of Virtualization Components #Edit source

Depending on the scope of the installation, none of the virtualization tools may be installed on your system. They will be automatically installed when configuring the hypervisor with the YaST module › . In case this module is not available in YaST, install the package yast2-vm.

5.1 Installing KVM #Edit source

To install KVM and KVM tools, proceed as follows:

Verify that the yast2-vm package is installed. This package is YaST's configuration tool that simplifies the installation of virtualization hypervisors.

Start YaST and choose › .

Select for a minimal installation of QEMU tools. Select if a

libvirt-based management stack is also desired. Confirm with .To enable normal networking for the VM Guest, using a network bridge is recommended. YaST offers to automatically configure a bridge on the VM Host Server. Agree to do so by choosing , otherwise choose .

After the setup has been finished, you can start setting up VM Guests. Rebooting the VM Host Server is not required.

5.2 Installing Xen #Edit source

To install Xen and Xen tools, proceed as follows:

Start YaST and choose › .

Select for a minimal installation of Xen tools. Select if a

libvirt-based management stack is also desired. Confirm with .To enable normal networking for the VM Guest, using a network bridge is recommended. YaST offers to automatically configure a bridge on the VM Host Server. Agree to do so by choosing , otherwise choose .

After the setup has been finished, you need to reboot the machine with the Xen kernel.

Tip: Default Boot Kernel

If everything works as expected, change the default boot kernel with YaST and make the Xen-enabled kernel the default. For more information about changing the default kernel, see Book “Reference”, Chapter 12 “The Boot Loader GRUB 2”, Section 12.3 “Configuring the Boot Loader with YaST”.

5.3 Installing Containers #Edit source

To install containers, proceed as follows:

Start YaST and choose › .

Select and confirm with .

5.4 Patterns #Edit source

It is possible using Zypper and patterns to install virtualization

packages. Run the command zypper in -t pattern

PATTERN. Available patterns are:

- KVM

kvm_server: sets up the KVM VM Host Server with QEMU tools for managementkvm_tools: installs thelibvirttools for managing and monitoring VM Guests

- Xen

xen_server: sets up the Xen VM Host Server with Xen tools for managementxen_tools: installs thelibvirttools for managing and monitoring VM Guests

- Containers

There is no pattern for containers; install the libvirt-daemon-lxc package.

5.5 Installing UEFI Support #Edit source

UEFI support is provided by OVMF (Open Virtual Machine Firmware). To enable UEFI boot, first install the qemu-ovmf-x86_64 or qemu-uefi-aarch64 package.

The firmware used by virtual machines is auto-selected. The auto-selection

is based on the *.json files in the

qemu-ovmf-ARCH package. The

libvirt QEMU driver parses those files when loading so it knows the

capabilities of the various types of firmware. Then when the user selects the type

of firmware and any desired features (for example, support for secure boot),

libvirt will be able to find a firmware that satisfies the user's

requirements.

For example, to specify EFI with secure boot, use the following configuration:

<os firmware='efi'> <loader secure='yes'/> </os>

The qemu-ovmf-ARCH packages contain the following files:

root #rpm -ql qemu-ovmf-x86_64[...] /usr/share/qemu/ovmf-x86_64-ms-code.bin /usr/share/qemu/ovmf-x86_64-ms-vars.bin /usr/ddshare/qemu/ovmf-x86_64-ms.bin /usr/share/qemu/ovmf-x86_64-suse-code.bin /usr/share/qemu/ovmf-x86_64-suse-vars.bin /usr/share/qemu/ovmf-x86_64-suse.bin [...]

The *-code.bin files are the UEFI firmware files.

The *-vars.bin files are corresponding variable

store images that can be used as a template for a per-VM non-volatile

store. libvirt copies the specified vars

template to a per-VM path under

/var/lib/libvirt/qemu/nvram/ when first

creating the VM. Files without code or

vars in the name can be used as a single UEFI

image. They are not as useful since no UEFI variables persist

across power cycles of the VM.

The *-ms*.bin files contain Microsoft keys as

found on real hardware. Therefore, they are configured as the default in

libvirt. Likewise, the *-suse*.bin files

contain preinstalled SUSE keys. There is also a set

of files with no preinstalled keys.

For details, see Using UEFI and Secure Boot and http://www.linux-kvm.org/downloads/lersek/ovmf-whitepaper-c770f8c.txt.

5.6 Enable Support for Nested Virtualization in KVM #Edit source

Important: Technology Preview

KVM's nested virtualization is still a technology preview. It is provided for testing purposes and is not supported.

Nested guests are KVM guests run in a KVM guest. When describing nested guests, we will use the following virtualization layers:

- L0

A bare metal host running KVM.

- L1

A virtual machine running on L0. Because it can run another KVM, it is called a guest hypervisor.

- L2

A virtual machine running on L1. It is called a nested guest.

Nested virtualization has many advantages. You can benefit from it in the following scenarios:

Manage your own virtual machines directly with your hypervisor of choice in cloud environments.

Enable the live migration of hypervisors and their guest virtual machines as a single entity.

Use it for software development and testing.

To enable nesting temporarily, remove the module and reload it with the

nested KVM module parameter:

For Intel CPUs, run:

tux >sudomodprobe -r kvm_intel && modprobe kvm_intel nested=1For AMD CPUs, run:

tux >sudomodprobe -r kvm_amd && modprobe kvm_amd nested=1

To enable nesting permanently, enable the nested KVM

module parameter in the /etc/modprobe.d/kvm_*.conf file,

depending on your CPU:

For Intel CPUs, edit

/etc/modprobe.d/kvm_intel.confand add the following line:options kvm_intel nested=Y

For AMD CPUs, edit

/etc/modprobe.d/kvm_amd.confand add the following line:options kvm_amd nested=Y

When your L0 host is capable of nesting, you will be able to start an L1 guest in one of the following ways:

Use the

-cpu hostQEMU command line option.Add the

vmx(for Intel CPUs) or thesvm(for AMD CPUs) CPU feature to the-cpuQEMU command line option, which enables virtualization for the virtual CPU.

Part II Managing Virtual Machines with libvirt #Edit source

- 6 Starting and Stopping

libvirtd The communication between the virtualization solutions (KVM, Xen, LXC) and the libvirt API is managed by the daemon libvirtd. It needs to run on the VM Host Server. libvirt client applications such as virt-manager, possibly running on a remote machine, communicate with libvirtd running on the VM Hos…

- 7 Guest Installation

A VM Guest consists of an image containing an operating system and data files and a configuration file describing the VM Guest's virtual hardware resources. VM Guests are hosted on and controlled by the VM Host Server. This section provides generalized instructions for installing a VM Guest.

- 8 Basic VM Guest Management

Most management tasks, such as starting or stopping a VM Guest, can either be done using the graphical application Virtual Machine Manager or on the command line using

virsh. Connecting to the graphical console via VNC is only possible from a graphical user interface.- 9 Connecting and Authorizing

Managing several VM Host Servers, each hosting multiple VM Guests, quickly becomes difficult. One benefit of

libvirtis the ability to connect to several VM Host Servers at once, providing a single interface to manage all VM Guests and to connect to their graphical console.- 10 Managing Storage

When managing a VM Guest on the VM Host Server itself, you can access the complete file system of the VM Host Server to attach or create virtual hard disks or to attach existing images to the VM Guest. However, this is not possible when managing VM Guests from a remote host. For this reason, libvirt…

- 11 Managing Networks

This chapter describes common network configurations for a VM Host Server, including those supported natively by the VM Host Server and

libvirt. The configurations are valid for all hypervisors supported by openSUSE Leap, such as KVM or Xen.- 12 Configuring Virtual Machines with Virtual Machine Manager

Virtual Machine Manager's view offers in-depth information about the VM Guest's complete configuration and hardware equipment. Using this view, you can also change the guest configuration or add and modify virtual hardware. To access this view, open the guest's console in Virtual Machine Manager and either choose › from the menu, or click in the toolbar.

- 13 Configuring Virtual Machines with

virsh You can use

virshto configure virtual machines (VM) on the command line as an alternative to using the Virtual Machine Manager. Withvirsh, you can control the state of a VM, edit the configuration of a VM or even migrate a VM to another host. The following sections describe how to manage VMs by usingvirsh.- 14 Managing Virtual Machines with Vagrant

Vagrant is a tool that provides a unified workflow for the creation, deployment and management of virtual development environments. The following sections describe how to manage virtual machines by using Vagrant.

6 Starting and Stopping libvirtd #Edit source

The communication between the virtualization solutions (KVM, Xen, LXC)

and the libvirt API is managed by the daemon libvirtd. It needs to run

on the VM Host Server. libvirt client applications such as virt-manager, possibly

running on a remote machine, communicate with libvirtd running on the

VM Host Server, which services the request using native hypervisor APIs. Use the

following commands to start and stop libvirtd or check its status:

tux >sudosystemctl start libvirtdtux >sudosystemctl status libvirtd libvirtd.service - Virtualization daemon Loaded: loaded (/usr/lib/systemd/system/libvirtd.service; enabled) Active: active (running) since Mon 2014-05-12 08:49:40 EDT; 2s ago [...]tux >sudosystemctl stop libvirtdtux >sudosystemctl status libvirtd [...] Active: inactive (dead) since Mon 2014-05-12 08:51:11 EDT; 4s ago [...]

To automatically start libvirtd at boot time, either activate it using the

YaST module or by entering the following

command:

tux >sudosystemctl enable libvirtd

Important: Conflicting Services: libvirtd

and xendomains

If libvirtd fails to start,

check if the service xendomains is

loaded:

tux > systemctl is-active xendomains

active

If the command returns active, you need to stop

xendomains before you can

start the libvirtd daemon. If

you want libvirtd to also start

after rebooting, additionally prevent xendomains from starting automatically. Disable

the service:

tux >sudosystemctl stop xendomainstux >sudosystemctl disable xendomainstux >sudosystemctl start libvirtd

xendomains and libvirtd provide the same service and when used

in parallel may interfere with one another. As an example, xendomains may attempt to start a domU already

started by libvirtd.

7 Guest Installation #Edit source

A VM Guest consists of an image containing an operating system and data files and a configuration file describing the VM Guest's virtual hardware resources. VM Guests are hosted on and controlled by the VM Host Server. This section provides generalized instructions for installing a VM Guest.

Virtual machines have few if any requirements above those required to run the operating system. If the operating system has not been optimized for the virtual machine host environment, it can only run on hardware-assisted virtualization computer hardware, in full virtualization mode, and requires specific device drivers to be loaded. The hardware that is presented to the VM Guest depends on the configuration of the host.

You should be aware of any licensing issues related to running a single licensed copy of an operating system on multiple virtual machines. Consult the operating system license agreement for more information.

7.1 GUI-Based Guest Installation #Edit source

The wizard helps you through the steps required to create a virtual machine and install its operating system. There are two ways to start it: Within Virtual Machine Manager, either click or choose › . Alternatively, start YaST and choose › .

Start the wizard either from YaST or Virtual Machine Manager.

Choose an installation source—either a locally available media or a network installation source. If you want to set up your VM Guest from an existing image, choose .

On a VM Host Server running the Xen hypervisor, you can choose whether to install a paravirtualized or a fully virtualized guest. The respective option is available under . Depending on this choice, not all installation options may be available.

Depending on your choice in the previous step, you need to provide the following data:

Specify the path on the VM Host Server to an ISO image containing the installation data. If it is available as a volume in a libvirt storage pool, you can also select it using . For more information, see Chapter 10, Managing Storage.

Alternatively, choose a physical CD-ROM or DVD inserted in the optical drive of the VM Host Server.

Provide the pointing to the installation source. Valid URL prefixes are, for example,

ftp://,http://,https://, andnfs://.Under , provide a path to an auto-installation file (AutoYaST or Kickstart, for example) and kernel parameters. Having provided a URL, the operating system should be automatically detected correctly. If this is not the case, deselect and manually select the and .

When booting via PXE, you only need to provide the and the .

To set up the VM Guest from an existing image, you need to specify the path on the VM Host Server to the image. If it is available as a volume in a libvirt storage pool, you can also select it using . For more information, see Chapter 10, Managing Storage.

Choose the memory size and number of CPUs for the new virtual machine.

This step is omitted when is chosen in the first step.

Set up a virtual hard disk for the VM Guest. Either create a new disk image or choose an existing one from a storage pool (for more information, see Chapter 10, Managing Storage). If you choose to create a disk, a

qcow2image will be created. By default, it is stored under/var/lib/libvirt/images.Setting up a disk is optional. If you are running a live system directly from CD or DVD, for example, you can omit this step by deactivating .

On the last screen of the wizard, specify the name for the virtual machine. To be offered the possibility to review and make changes to the virtualized hardware selection, activate . Find options to specify the network device under .

Click .

(Optional) If you kept the defaults in the previous step, the installation will now start. If you selected , a VM Guest configuration dialog opens. For more information about configuring VM Guests, see Chapter 12, Configuring Virtual Machines with Virtual Machine Manager.

When you are done configuring, click .

Tip: Passing Key Combinations to Virtual Machines

The installation starts in a Virtual Machine Manager console window. Some key combinations, such as Ctrl–Alt–F1, are recognized by the VM Host Server but are not passed to the virtual machine. To bypass the VM Host Server, Virtual Machine Manager provides the “sticky key” functionality. Pressing Ctrl, Alt, or Shift three times makes the key sticky, then you can press the remaining keys to pass the combination to the virtual machine.

For example, to pass Ctrl–Alt–F2 to a Linux virtual machine, press Ctrl three times, then press Alt–F2. You can also press Alt three times, then press Ctrl–F2.

The sticky key functionality is available in the Virtual Machine Manager during and after installing a VM Guest.

7.2 Installing from the Command Line with virt-install #Edit source

virt-install is a command line tool that helps you create

new virtual machines using the libvirt library. It is useful if you cannot

use the graphical user interface, or need to automatize the process of

creating virtual machines.

virt-install is a complex script with a lot of command

line switches. The following are required. For more information, see the man

page of virt-install (1).

- General Options

--name VM_GUEST_NAME: Specify the name of the new virtual machine. The name must be unique across all guests known to the hypervisor on the same connection. It is used to create and name the guest’s configuration file and you can access the guest with this name fromvirsh. Alphanumeric and_-.:+characters are allowed.--memory REQUIRED_MEMORY: Specify the amount of memory to allocate for the new virtual machine in megabytes.--vcpus NUMBER_OF_CPUS: Specify the number of virtual CPUs. For best performance, the number of virtual processors should be less than or equal to the number of physical processors.

- Virtualization Type

--paravirt: Set up a paravirtualized guest. This is the default if the VM Host Server supports paravirtualization and full virtualization.--hvm: Set up a fully virtualized guest.--virt-type HYPERVISOR: Specify the hypervisor. Supported values arekvm,xen, orlxc.

- Guest Storage

Specify one of

--disk,--filesystemor--nodisksthe type of the storage for the new virtual machine. For example,--disk size=10creates 10 GB disk in the default image location for the hypervisor and uses it for the VM Guest.--filesystem /export/path/on/vmhostspecifies the directory on the VM Host Server to be exported to the guest. And--nodiskssets up a VM Guest without a local storage (good for Live CDs).- Installation Method

Specify the installation method using one of

--location,--cdrom,--pxe,--import, or--boot.- Accessing the Installation

Use the

--graphics VALUEoption to specify how to access the installation. openSUSE Leap supports the valuesvncornone.If using VNC,

virt-installtries to launchvirt-viewer. If it is not installed or cannot be run, connect to the VM Guest manually with you preferred viewer. To explicitly preventvirt-installfrom launching the viewer use--noautoconsole. To define a password for accessing the VNC session, use the following syntax:--graphics vnc,password=PASSWORD.In case you are using

--graphics none, you can access the VM Guest through operating system supported services, such as SSH or VNC. Refer to the operating system installation manual on how to set up these services in the installation system.- Passing Kernel and Initrd Files

It is possible to directly specify the Kernel and Initrd of the installer, for example from a network source.

To pass additional boot parameters, use the

--extra-argsoption. This can be used to specify a network configuration. For details, see https://en.opensuse.org/SDB:Linuxrc.Example 7.1: Loading Kernel and Initrd from HTTP Server #

root #virt-install--location \ "http://download.opensuse.org/pub/opensuse/distribution/leap/15.0/repo/oss" \ --extra-args="textmode=1" --name "Leap15" --memory 2048 --virt-type kvm \ --connect qemu:///system --disk size=10 --graphics vnc --network \ network=vnet_nated- Enabling the Console

By default, the console is not enabled for new virtual machines installed using

virt-install. To enable it, use--extra-args="console=ttyS0 textmode=1"as in the following example:tux >virt-install --virt-type kvm --name sles12 --memory 1024 \ --disk /var/lib/libvirt/images/disk1.qcow2 --os-variant sles12 --extra-args="console=ttyS0 textmode=1" --graphics noneAfter the installation finishes, the

/etc/default/grubfile in the VM image will be updated with theconsole=ttyS0option on theGRUB_CMDLINE_LINUX_DEFAULTline.- Using UEFI and Secure Boot

Install OVMF as described in Section 5.5, “Installing UEFI Support”. Then add the

--boot uefioption to thevirt-installcommand.Secure boot will be used automatically when setting up a new VM with OVMF. To use a specific firmware, use

--boot loader=/usr/share/qemu/ovmf-VERSION.bin. Replace VERSION with the file you need.

Example 7.2: Example of a virt-install command line #

The following command line example creates a new SUSE Linux Enterprise Desktop 12 virtual machine with a virtio accelerated disk and network card. It creates a new 10 GB qcow2 disk image as a storage, the source installation media being the host CD-ROM drive. It will use VNC graphics, and it will auto-launch the graphical client.

- KVM

tux >virt-install --connect qemu:///system --virt-type kvm --name sled12 \ --memory 1024 --disk size=10 --cdrom /dev/cdrom --graphics vnc \ --os-variant sled12- Xen

tux >virt-install --connect xen:// --virt-type xen --name sled12 \ --memory 1024 --disk size=10 --cdrom /dev/cdrom --graphics vnc \ --os-variant sled12

7.3 Advanced Guest Installation Scenarios #Edit source

This section provides instructions for operations exceeding the scope of a normal installation, such as memory ballooning and installing add-on products.

7.3.1 Including Add-on Products in the Installation #Edit source

Some operating systems such as openSUSE Leap offer to include add-on products in the installation process. If the add-on product installation source is provided via SUSE Customer Center, no special VM Guest configuration is needed. If it is provided via CD/DVD or ISO image, it is necessary to provide the VM Guest installation system with both the standard installation medium image and the image of the add-on product.

If you are using the GUI-based installation, select in the last step of the wizard and add the add-on product ISO image via › . Specify the path to the image and set the to .

If you are installing from the command line, you need to set up the virtual

CD/DVD drives with the --disk parameter rather than with

--cdrom. The device that is specified first is used for

booting. The following example will install SUSE Linux Enterprise Server 15 together with SUSE

Enterprise Storage extension:

tux > virt-install --name sles15+storage --memory 2048 --disk size=10 \

--disk /path/to/SLE-15-SP2-Full-ARCH-GM-media1.iso-x86_64-GM-DVD1.iso,device=cdrom \

--disk /path/to/SUSE-Enterprise-Storage-VERSION-DVD-ARCH-Media1.iso,device=cdrom \

--graphics vnc --os-variant sles158 Basic VM Guest Management #Edit source

Most management tasks, such as starting or stopping a VM Guest, can either

be done using the graphical application Virtual Machine Manager or on the command line using

virsh. Connecting to the graphical console via VNC is only

possible from a graphical user interface.

Note: Managing VM Guests on a Remote VM Host Server

If started on a VM Host Server, the libvirt tools Virtual Machine Manager,

virsh, and virt-viewer can be used to

manage VM Guests on the host. However, it is also possible to manage

VM Guests on a remote VM Host Server. This requires configuring remote access for

libvirt on the host. For instructions, see

Chapter 9, Connecting and Authorizing.

To connect to such a remote host with Virtual Machine Manager, you need to set up a connection

as explained in Section 9.2.2, “Managing Connections with Virtual Machine Manager”. If

connecting to a remote host using virsh or

virt-viewer, you need to specify a connection URI with

the parameter -c (for example, virsh -c

qemu+tls://saturn.example.com/system or virsh -c

xen+ssh://). The form of connection URI depends on the connection

type and the hypervisor—see

Section 9.2, “Connecting to a VM Host Server” for details.

Examples in this chapter are all listed without a connection URI.

8.1 Listing VM Guests #Edit source

The VM Guest listing shows all VM Guests managed by libvirt on a

VM Host Server.

8.1.1 Listing VM Guests with Virtual Machine Manager #Edit source

The main window of the Virtual Machine Manager lists all VM Guests for each VM Host Server it is connected to. Each VM Guest entry contains the machine's name, its status (, , or ) displayed as an icon and literally, and a CPU usage bar.

8.1.2 Listing VM Guests with virsh #Edit source

Use the command virsh list to get a

list of VM Guests:

- List all running guests

tux >virsh list- List all running and inactive guests

tux >virsh list --all

For more information and further options, see virsh help

list or man 1 virsh.

8.2 Accessing the VM Guest via Console #Edit source

VM Guests can be accessed via a VNC connection (graphical console) or, if supported by the guest operating system, via a serial console.

8.2.1 Opening a Graphical Console #Edit source

Opening a graphical console to a VM Guest lets you interact with the machine like a physical host via a VNC connection. If accessing the VNC server requires authentication, you are prompted to enter a user name (if applicable) and a password.

When you click into the VNC console, the cursor is “grabbed” and cannot be used outside the console anymore. To release it, press Alt–Ctrl.

Tip: Seamless (Absolute) Cursor Movement

To prevent the console from grabbing the cursor and to enable seamless cursor movement, add a tablet input device to the VM Guest. See Section 12.5, “Input Devices” for more information.

Certain key combinations such as Ctrl–Alt–Del are

interpreted by the host system and are not passed to the VM Guest. To pass

such key combinations to a VM Guest, open the

menu from the VNC window and choose the desired key combination entry. The

menu is only available when using Virtual Machine Manager and

virt-viewer. With Virtual Machine Manager, you can alternatively use the

“sticky key” feature as explained in

Tip: Passing Key Combinations to Virtual Machines.

Note: Supported VNC Viewers

Principally all VNC viewers can connect to the console of a VM Guest.

However, if you are using SASL authentication and/or TLS/SSL connection to

access the guest, the options are limited. Common VNC viewers such as

tightvnc or tigervnc support neither

SASL authentication nor TLS/SSL. The only supported alternative to Virtual Machine Manager

and virt-viewer is Remmina (refer to

Book “Reference”, Chapter 4 “Remote Graphical Sessions with VNC”, Section 4.2 “Remmina: the Remote Desktop Client”).

8.2.1.1 Opening a Graphical Console with Virtual Machine Manager #Edit source

In the Virtual Machine Manager, right-click a VM Guest entry.

Choose from the pop-up menu.

8.2.1.2 Opening a Graphical Console with virt-viewer #Edit source

virt-viewer is a simple VNC viewer with added

functionality for displaying VM Guest consoles. For example, it can be

started in “wait” mode, where it waits for a VM Guest to

start before it connects. It also supports automatically reconnecting to a

VM Guest that is rebooted.

virt-viewer addresses VM Guests by name, by ID or by

UUID. Use virsh list --all to get this

data.

To connect to a guest that is running or paused, use either the ID, UUID, or name. VM Guests that are shut off do not have an ID—you can only connect to them by UUID or name.

- Connect to guest with the ID

8 tux >virt-viewer 8- Connect to the inactive guest named

sles12; the connection window will open once the guest starts tux >virt-viewer --wait sles12With the

--waitoption, the connection will be upheld even if the VM Guest is not running at the moment. When the guest starts, the viewer will be launched.

For more information, see virt-viewer

--help or man 1 virt-viewer.

Note: Password Input on Remote Connections with SSH

When using virt-viewer to open a connection to a

remote host via SSH, the SSH password needs to be entered twice. The

first time for authenticating with libvirt, the second time for

authenticating with the VNC server. The second password needs to be

provided on the command line where virt-viewer was started.

8.2.2 Opening a Serial Console #Edit source

Accessing the graphical console of a virtual machine requires a graphical

environment on the client accessing the VM Guest. As an alternative,

virtual machines managed with libvirt can also be accessed from the shell

via the serial console and virsh. To open a serial

console to a VM Guest named “sles12”, run the following

command:

tux > virsh console sles12

virsh console takes two optional flags:

--safe ensures exclusive access to the console,

--force disconnects any existing sessions before

connecting. Both features need to be supported by the guest operating

system.

Being able to connect to a VM Guest via serial console requires that the guest operating system supports serial console access and is properly supported. Refer to the guest operating system manual for more information.

Tip: Enabling Serial Console Access for SUSE Linux Enterprise and openSUSE Guests

Serial console access in SUSE Linux Enterprise and openSUSE is disabled by default. To enable it, proceed as follows:

- SLES 12 and Up/openSUSE

Launch the YaST Boot Loader module and switch to the tab. Add

console=ttyS0to the field .- SLES 11

Launch the YaST Boot Loader module and select the boot entry for which to activate serial console access. Choose and add

console=ttyS0to the field . Additionally, edit/etc/inittaband uncomment the line with the following content:#S0:12345:respawn:/sbin/agetty -L 9600 ttyS0 vt102

8.3 Changing a VM Guest's State: Start, Stop, Pause #Edit source

Starting, stopping or pausing a VM Guest can be done with either Virtual Machine Manager or

virsh. You can also configure a VM Guest to be

automatically started when booting the VM Host Server.

When shutting down a VM Guest, you may either shut it down gracefully, or force the shutdown. The latter is equivalent to pulling the power plug on a physical host and is only recommended if there are no alternatives. Forcing a shutdown may cause file system corruption and loss of data on the VM Guest.

Tip: Graceful Shutdown

To be able to perform a graceful shutdown, the VM Guest must be configured to support ACPI. If you have created the guest with the Virtual Machine Manager, ACPI should be available in the VM Guest.

Depending on the guest operating system, availability of ACPI may not be sufficient to perform a graceful shutdown. It is strongly recommended to test shutting down and rebooting a guest before using it in production. openSUSE or SUSE Linux Enterprise Desktop, for example, can require PolKit authorization for shutdown and reboot. Make sure this policy is turned off on all VM Guests.

If ACPI was enabled during a Windows XP/Windows Server 2003 guest installation, turning it on in the VM Guest configuration only is not sufficient. For more information, see:

Regardless of the VM Guest's configuration, a graceful shutdown is always possible from within the guest operating system.

8.3.1 Changing a VM Guest's State with Virtual Machine Manager #Edit source

Changing a VM Guest's state can be done either from Virtual Machine Manager's main window, or from a VNC window.

Procedure 8.1: State Change from the Virtual Machine Manager Window #

Right-click a VM Guest entry.

Choose , , or one of the from the pop-up menu.

Procedure 8.2: State change from the VNC Window #

Open a VNC Window as described in Section 8.2.1.1, “Opening a Graphical Console with Virtual Machine Manager”.

Choose , , or one of the options either from the toolbar or from the menu.

8.3.1.1 Automatically Starting a VM Guest #Edit source

You can automatically start a guest when the VM Host Server boots. This feature is not enabled by default and needs to be enabled for each VM Guest individually. There is no way to activate it globally.

Double-click the VM Guest entry in Virtual Machine Manager to open its console.

Choose › to open the VM Guest configuration window.

Choose and check .

Save the new configuration with .

8.3.2 Changing a VM Guest's State with virsh #Edit source

In the following examples, the state of a VM Guest named “sles12” is changed.

- Start

tux >virsh start sles12- Pause

tux >virsh suspend sles12- Resume (a Suspended VM Guest)

tux >virsh resume sles12- Reboot

tux >virsh reboot sles12- Graceful shutdown

tux >virsh shutdown sles12- Force shutdown

tux >virsh destroy sles12- Turn on automatic start

tux >virsh autostart sles12- Turn off automatic start

tux >virsh autostart --disable sles12

8.4 Saving and Restoring the State of a VM Guest #Edit source

Saving a VM Guest preserves the exact state of the guest’s memory. The operation is similar to hibernating a computer. A saved VM Guest can be quickly restored to its previously saved running condition.

When saved, the VM Guest is paused, its current memory state is saved to disk, and then the guest is stopped. The operation does not make a copy of any portion of the VM Guest’s virtual disk. The amount of time taken to save the virtual machine depends on the amount of memory allocated. When saved, a VM Guest’s memory is returned to the pool of memory available on the VM Host Server.

The restore operation loads a VM Guest’s previously saved memory state file and starts it. The guest is not booted but instead resumed at the point where it was previously saved. The operation is similar to coming out of hibernation.

The VM Guest is saved to a state file. Make sure there is enough space on the partition you are going to save to. For an estimation of the file size in megabytes to be expected, issue the following command on the guest:

tux > free -mh | awk '/^Mem:/ {print $3}'Warning: Always Restore Saved Guests

After using the save operation, do not boot or start the saved VM Guest. Doing so would cause the machine's virtual disk and the saved memory state to get out of synchronization. This can result in critical errors when restoring the guest.

To be able to work with a saved VM Guest again, use the restore operation.

If you used virsh to save a VM Guest, you cannot

restore it using Virtual Machine Manager. In this case, make sure to restore using

virsh.

Important: Only for VM Guests with Disk Types raw, qcow2

Saving and restoring VM Guests is only possible if the VM Guest is using

a virtual disk of the type raw

(.img), or qcow2.

8.4.1 Saving/Restoring with Virtual Machine Manager #Edit source

Procedure 8.3: Saving a VM Guest #

Open a VNC connection window to a VM Guest. Make sure the guest is running.

Choose › › .

Procedure 8.4: Restoring a VM Guest #

Open a VNC connection window to a VM Guest. Make sure the guest is not running.

Choose › .

If the VM Guest was previously saved using Virtual Machine Manager, you will not be offered an option to the guest. However, note the caveats on machines saved with

virshoutlined in Warning: Always Restore Saved Guests.

8.4.2 Saving and Restoring with virsh #Edit source

Save a running VM Guest with the command virsh

save and specify the file which it is saved to.

- Save the guest named

opensuse13 tux >virsh save opensuse13 /virtual/saves/opensuse13.vmsav- Save the guest with the ID

37 tux >virsh save 37 /virtual/saves/opensuse13.vmsave

To restore a VM Guest, use virsh

restore:

tux > virsh restore /virtual/saves/opensuse13.vmsave8.5 Creating and Managing Snapshots #Edit source

VM Guest snapshots are snapshots of the complete virtual machine including the state of CPU, RAM, devices, and the content of all writable disks. To use virtual machine snapshots, all the attached hard disks need to use the qcow2 disk image format, and at least one of them needs to be writable.

Snapshots let you restore the state of the machine at a particular point in time. This is useful when undoing a faulty configuration or the installation of a lot of packages. After starting a snapshot that was created while the VM Guest was shut off, you will need to boot it. Any changes written to the disk after that point in time will be lost when starting the snapshot.

Note

Snapshots are supported on KVM VM Host Servers only.

8.5.1 Terminology #Edit source

There are several specific terms used to describe the types of snapshots:

- Internal snapshots

Snapshots that are saved into the qcow2 file of the original VM Guest. The file holds both the saved state of the snapshot and the changes made since the snapshot was taken. The main advantage of internal snapshots is that they are all stored in one file and therefore it is easy to copy or move them across multiple machines.

- External snapshots

When creating an external snapshot, the original qcow2 file is saved and made read-only, while a new qcow2 file is created to hold the changes. The original file is sometimes called a 'backing' or 'base' file, while the new file with all the changes is called an 'overlay' or 'derived' file. External snapshots are useful when performing backups of VM Guests. However, external snapshots are not supported by Virtual Machine Manager, and cannot be deleted by

virshdirectly. For more information on external snapshots in QEMU, refer to Section 29.2.4, “Manipulate Disk Images Effectively”.- Live snapshots

Snapshots created when the original VM Guest is running. Internal live snapshots support saving the devices, and memory and disk states, while external live snapshots with

virshsupport saving either the memory state, or the disk state, or both.- Offline snapshots

Snapshots created from a VM Guest that is shut off. This ensures data integrity as all the guest's processes are stopped and no memory is in use.

8.5.2 Creating and Managing Snapshots with Virtual Machine Manager #Edit source

Important: Internal Snapshots Only

Virtual Machine Manager supports only internal snapshots, either live or offline.

To open the snapshot management view in Virtual Machine Manager, open the VNC window as described in Section 8.2.1.1, “Opening a Graphical Console with Virtual Machine Manager”. Now either choose › or click in the toolbar.

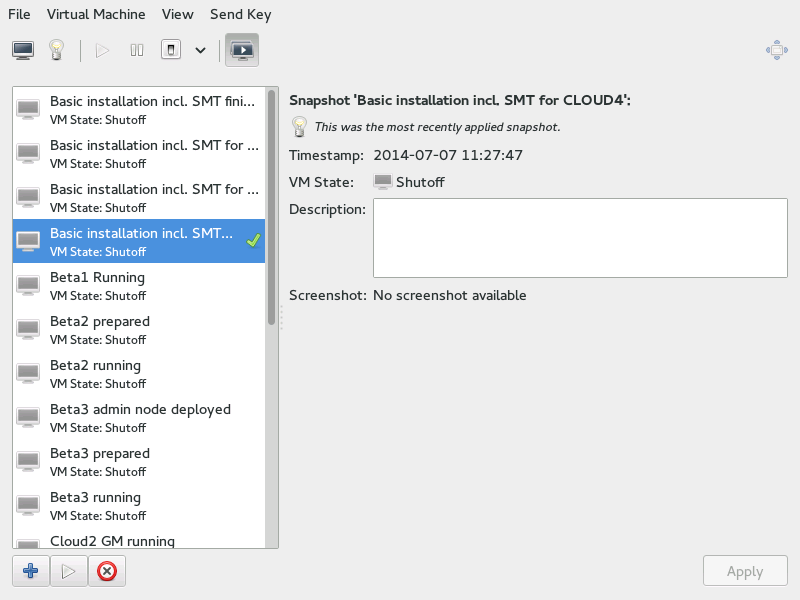

The list of existing snapshots for the chosen VM Guest is displayed in the left-hand part of the window. The snapshot that was last started is marked with a green tick. The right-hand part of the window shows details of the snapshot currently marked in the list. These details include the snapshot's title and time stamp, the state of the VM Guest at the time the snapshot was taken and a description. Snapshots of running guests also include a screenshot. The can be changed directly from this view. Other snapshot data cannot be changed.

8.5.2.1 Creating a Snapshot #Edit source

To take a new snapshot of a VM Guest, proceed as follows:

Optionally, shut down the VM Guest if you want to create an offline snapshot.

Click in the bottom left corner of the VNC window.

The window opens.

Provide a and, optionally, a description. The name cannot be changed after the snapshot has been taken. To be able to identify the snapshot later easily, use a “speaking name”.

Confirm with .

8.5.2.2 Deleting a Snapshot #Edit source

To delete a snapshot of a VM Guest, proceed as follows:

Click in the bottom left corner of the VNC window.

Confirm the deletion with .

8.5.2.3 Starting a Snapshot #Edit source

To start a snapshot, proceed as follows:

Click in the bottom left corner of the VNC window.

Confirm the start with .

8.5.3 Creating and Managing Snapshots with virsh #Edit source

To list all existing snapshots for a domain

(admin_server in the following), run the

snapshot-list command:

tux > virsh snapshot-list --domain sle-ha-node1

Name Creation Time State

------------------------------------------------------------

sleha_12_sp2_b2_two_node_cluster 2016-06-06 15:04:31 +0200 shutoff

sleha_12_sp2_b3_two_node_cluster 2016-07-04 14:01:41 +0200 shutoff

sleha_12_sp2_b4_two_node_cluster 2016-07-14 10:44:51 +0200 shutoff

sleha_12_sp2_rc3_two_node_cluster 2016-10-10 09:40:12 +0200 shutoff

sleha_12_sp2_gmc_two_node_cluster 2016-10-24 17:00:14 +0200 shutoff

sleha_12_sp3_gm_two_node_cluster 2017-08-02 12:19:37 +0200 shutoff

sleha_12_sp3_rc1_two_node_cluster 2017-06-13 13:34:19 +0200 shutoff

sleha_12_sp3_rc2_two_node_cluster 2017-06-30 11:51:24 +0200 shutoff

sleha_15_b6_two_node_cluster 2018-02-07 15:08:09 +0100 shutoff

sleha_15_rc1_one-node 2018-03-09 16:32:38 +0100 shutoff

The snapshot that was last started is shown with the

snapshot-current command:

tux > virsh snapshot-current --domain admin_server

Basic installation incl. SMT for CLOUD4

Details about a particular snapshot can be obtained by running the

snapshot-info command:

tux > virsh snapshot-info --domain admin_server \

-name "Basic installation incl. SMT for CLOUD4"

Name: Basic installation incl. SMT for CLOUD4

Domain: admin_server

Current: yes

State: shutoff

Location: internal

Parent: Basic installation incl. SMT for CLOUD3-HA

Children: 0

Descendants: 0

Metadata: yes8.5.3.1 Creating Internal Snapshots #Edit source

To take an internal snapshot of a VM Guest, either a live or offline, use

the snapshot-create-as command as follows:

tux > virsh snapshot-create-as --domain admin_server1 --name "Snapshot 1"2 \

--description "First snapshot"38.5.3.2 Creating External Snapshots #Edit source

With virsh, you can take external snapshots of the

guest's memory state, disk state, or both.

To take both live and offline external snapshots of the guest's disk,

specify the --disk-only option:

tux > virsh snapshot-create-as --domain admin_server --name \

"Offline external snapshot" --disk-only

You can specify the --diskspec option to control how the

external files are created:

tux > virsh snapshot-create-as --domain admin_server --name \

"Offline external snapshot" \

--disk-only --diskspec vda,snapshot=external,file=/path/to/snapshot_file

To take a live external snapshot of the guest's memory, specify the

--live and --memspec options:

tux > virsh snapshot-create-as --domain admin_server --name \

"Offline external snapshot" --live \

--memspec snapshot=external,file=/path/to/snapshot_file

To take a live external snapshot of both the guest's disk and memory

states, combine the --live, --diskspec,

and --memspec options:

tux > virsh snapshot-create-as --domain admin_server --name \

"Offline external snapshot" --live \

--memspec snapshot=external,file=/path/to/snapshot_file

--diskspec vda,snapshot=external,file=/path/to/snapshot_file

Refer to the SNAPSHOT COMMANDS section in

man 1 virsh for more details.

8.5.3.3 Deleting a Snapshot #Edit source

External snapshots cannot be deleted with virsh.

To delete an internal snapshot of a VM Guest and restore the disk space

it occupies, use the snapshot-delete command:

tux > virsh snapshot-delete --domain admin_server --snapshotname "Snapshot 2"8.5.3.4 Starting a Snapshot #Edit source

To start a snapshot, use the snapshot-revert command:

tux > virsh snapshot-revert --domain admin_server --snapshotname "Snapshot 1"