Security and Hardening Guide

6 LDAP with 389 Directory Server

Abstract

The Lightweight Directory Access Protocol (LDAP) is a protocol designed to access and maintain information directories. LDAP can be used for tasks such as user and group management, system configuration management, and address management. In openSUSE Leap 15.3 the LDAP service is provided by the 389 Directory Server, replacing OpenLDAP.

- 6.1 Structure of an LDAP directory tree

- 6.2 Installing 389 Directory Server

- 6.3 Firewall configuration

- 6.4 Backing up and restoring 389 Directory Server

- 6.5 Managing LDAP users and groups

- 6.6 Using SSSD to manage LDAP authentication

- 6.7 Managing modules

- 6.8 Migrating to 389 Directory Server from OpenLDAP

- 6.9 Importing TLS server certificates and keys

- 6.10 Setting up replication

- 6.11 Synchronizing with Microsoft Active Directory

- 6.12 More information

Ideally, a central server stores the data in a directory and distributes it to all clients using a well-defined protocol. The structured data allow a wide range of applications to access them. A central repository reduces the necessary administrative effort. The use of an open and standardized protocol such as LDAP ensures that as many client applications as possible can access such information.

A directory in this context is a type of database optimized for quick and effective reading and searching. The type of data stored in a directory tends to be long lived and changes infrequently. This allows the LDAP service to be optimized for high performance concurrent reads, whereas conventional databases are optimized for accepting many writes to data in a short time.

6.1 Structure of an LDAP directory tree

This section introduces the layout of an LDAP directory tree, and provides the basic terminology used with regard to LDAP. If you are familiar with LDAP, read on at Section 6.2.1, “Setting up a new 389 Directory Server instance”.

An LDAP directory has a tree structure. All entries (called objects) of the directory have a defined position within this hierarchy. This hierarchy is called the directory information tree (DIT). The complete path to the desired entry, which unambiguously identifies it, is called the distinguished name or DN. An object in the tree is identified by its relative distinguished name (RDN). The distinguished name is built from the RDNs of all entries on the path to the entry.

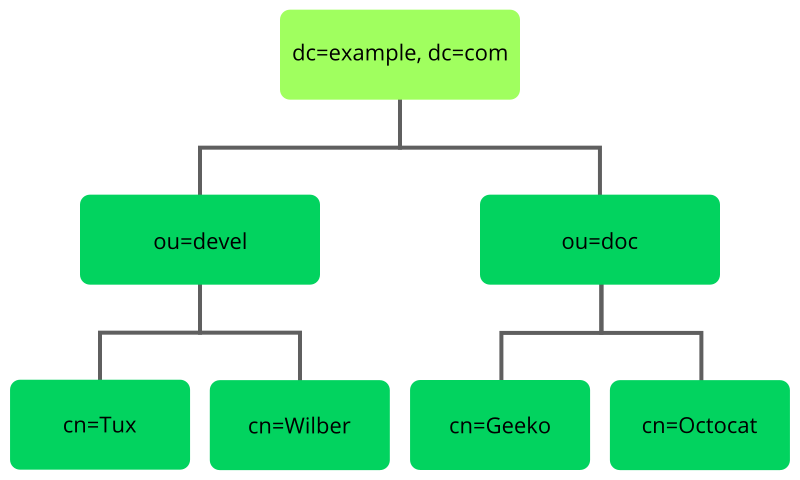

The relations within an LDAP directory tree become more evident in the following example, shown in Figure 6.1, “Structure of an LDAP directory”.

Figure 6.1: Structure of an LDAP directory

The complete diagram is a fictional directory information tree. The entries on three levels are depicted. Each entry corresponds to one box in the image. The complete, valid distinguished name for the fictional employee Geeko Linux, in this case, is cn=Geeko Linux,ou=doc,dc=example,dc=com. It is composed by adding the RDN cn=Geeko Linux to the DN of the preceding entry ou=doc,dc=example,dc=com.
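To make the composition rule concrete, the following shell sketch joins RDNs into a DN and derives the DN of the preceding entry. The function names build_dn and parent_dn are hypothetical helpers introduced only for illustration, and the sketch assumes that no RDN value contains an escaped comma.

```shell
# Hypothetical helpers for illustration; not part of 389 Directory Server.

# build_dn RDN...: join RDNs (leaf entry first) with commas to form a DN.
build_dn() (
    dn=""
    for rdn in "$@"; do
        if [ -z "$dn" ]; then
            dn=$rdn
        else
            dn="$dn,$rdn"
        fi
    done
    printf '%s\n' "$dn"
)

# parent_dn DN: drop the leading RDN to get the DN of the preceding entry.
# Naive: assumes the leading RDN contains no escaped comma.
parent_dn() (
    printf '%s\n' "${1#*,}"
)
```

Calling build_dn "cn=Geeko Linux" ou=doc dc=example dc=com prints the distinguished name from Figure 6.1, and passing that result to parent_dn prints ou=doc,dc=example,dc=com.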

The types of objects that can be stored in the DIT are globally determined by a Schema. The type of an object is determined by the object class. The object class determines which attributes the relevant object must or may be assigned. The Schema contains all object classes and attributes which can be used by the LDAP server. Attributes are a structured data type. Their syntax, ordering, and other behavior are defined by the Schema. LDAP servers supply a core set of Schemas which can work in a broad variety of environments. If a custom Schema is required, you can upload it to an LDAP server.

Table 6.1, “Commonly used object classes and attributes” offers a small overview of the object classes from 00core.ldif and 06inetorgperson.ldif used in the example, including required attributes (Req. Attr.) and valid attribute values. After installing 389 Directory Server, these can be found in /usr/share/dirsrv/schema.

Table 6.1: Commonly used object classes and attributes

| Object Class | Meaning | Example Entry | Req. Attr. |
|---|---|---|---|
| | name components of the domain | example | displayName |
| | organizational unit | | |
| | person-related data for the intranet or Internet | | |

Example 6.1, “Excerpt from CN=schema” shows an excerpt from a Schema directive with explanations.

Example 6.1: Excerpt from CN=schema

    attributetype (1.2.840.113556.1.2.102 NAME 'memberOf'            (1)
           DESC 'Group that the entry belongs to'                    (2)
           SYNTAX 1.3.6.1.4.1.1466.115.121.1.12                      (3)
           X-ORIGIN 'Netscape Delegated Administrator')              (4)

    objectclass (2.16.840.1.113730.3.2.333 NAME 'nsPerson'           (5)
           DESC 'A representation of a person in a directory server' (6)
           SUP top STRUCTURAL                                        (7)
           MUST ( displayName $ cn )                                 (8)
           MAY ( userPassword $ seeAlso $ description $ legalName $ mail \
                 $ preferredLanguage )                               (9)
           X-ORIGIN '389 Directory Server Project'
    ...

(1) The name of the attribute, its unique object identifier (OID, numerical), and the abbreviation of the attribute.

(2) A brief description of the attribute with DESC.

(3) The type of data that can be held in the attribute. In this case, the OID 1.3.6.1.4.1.1466.115.121.1.12 denotes the distinguished name (DN) syntax, so each value is the DN of a group.

(4) The source of the schema element (for example, the name of the project).

(5) The definition of the object class nsPerson begins with an OID and the name of the object class, like the definition of the attribute.

(6) A brief description of the object class.

(7) The SUP top entry names top as the superior object class, and STRUCTURAL marks this as a structural object class.

(8) With MUST, list all attribute types that an entry of this object class must contain.

(9) With MAY, list all attribute types that are optional for this object class.

6.2 Installing 389 Directory Server

Install 389 Directory Server with the following command:

    > sudo zypper install 389-ds

After installation, set up the server as described in Section 6.2.1, “Setting up a new 389 Directory Server instance”.

6.2.1 Setting up a new 389 Directory Server instance

You will use the dscreate command to create new 389 Directory Server instances, and the dsctl command to cleanly remove them.

There are two ways to configure and create a new instance: from a custom configuration file, and from an auto-generated template file. You can use the auto-generated template without changes for a test instance, though for a production system you must carefully review it and make any necessary changes.

Then you will set up administration credentials, manage users and groups, and configure identity services.

The 389 Directory Server is controlled by three primary commands:

- dsctl: Manages a local instance and requires root permissions. Requires you to be connected to a terminal which is running the directory server instance. Used for starting, stopping, backing up the database, and more.

- dsconf: The primary tool used for administration and configuration of the server. Manages an instance's configuration via its external interfaces. This allows you to make configuration changes remotely on the instance.

- dsidm: Used for identity management (managing users, groups, passwords, etc.). The permissions are granted by access controls, so, for example, users can reset their own password or change details of their own account.

Follow these steps to set up a simple instance for testing and development, populated with a small set of sample entries.

6.2.2 Creating a 389 Directory Server instance with a custom configuration file

You can create a new 389 Directory Server instance from a simple custom configuration file. This file must be in the INF format, and you can name it anything you like.

The default instance name is localhost. The instance name cannot be changed after it has been created. It is better to create your own instance name, rather than using the default, to avoid confusion and to enable a better understanding of how it all works. The following examples use the LDAP1 instance name, and a suffix of dc=LDAP1,dc=COM.

Example 6.2 shows an example configuration file that you can use to create a new 389 Directory Server instance. You can copy and use this file without changes.

1. Copy the following example file, LDAP1.inf, to your home directory:

   Example 6.2: Minimal 389 Directory Server instance configuration file

       # LDAP1.inf

       [general]
       config_version = 2

       [slapd]
       root_password = PASSWORD
       self_sign_cert = True
       instance_name = LDAP1

       [backend-userroot]
       sample_entries = yes
       suffix = dc=LDAP1,dc=COM

   - config_version: This line is required, indicating that this is a version 2 setup INF file.

   - root_password: Create a strong root_password for the LDAP user cn=Directory Manager. This user is for connecting (binding) to the directory.

   - self_sign_cert: Create self-signed server certificates in /etc/dirsrv/slapd-LDAP1.

   - sample_entries: Populate the new instance with sample user and group entries.

2. To create the 389 Directory Server instance from Example 6.2, run the following command:

       > sudo dscreate -v from-file LDAP1.inf | tee LDAP1-OUTPUT.txt

   This shows all activity during the instance creation, stores all the messages in LDAP1-OUTPUT.txt, and creates a working LDAP server in about a minute. The verbose output contains a lot of useful information. If you do not want to save it, then delete the | tee LDAP1-OUTPUT.txt portion of the command.

3. If the dscreate command should fail, the messages will tell you why. After correcting any issues, remove the instance (see Step 5) and create a new instance.

4. A successful installation reports "Completed installation for LDAP1". Check the status of your new server:

       > sudo dsctl LDAP1 status
       Instance "LDAP1" is running

5. The following commands are for cleanly removing the instance. The first command performs a dry run and does not remove the instance. When you are sure you want to remove it, use the second command with the --do-it option:

       > sudo dsctl LDAP1 remove
       Not removing: if you are sure, add --do-it
       > sudo dsctl LDAP1 remove --do-it

   This command also removes partially installed or corrupted instances. You can reliably create and remove instances as often as you want.
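If you create instances repeatedly, a small script can generate the INF file for you. The following is a minimal sketch that writes the same keys as Example 6.2; the function name write_instance_inf and its argument order are hypothetical, and it only produces the file, leaving the dscreate call to you.

```shell
# write_instance_inf NAME SUFFIX PASSWORD FILE
# Hypothetical helper: writes an INF file with the keys from Example 6.2.
write_instance_inf() (
    name=$1 suffix=$2 password=$3 outfile=$4
    umask 077   # the file holds a password, so create it owner-only
    cat > "$outfile" <<EOF
[general]
config_version = 2

[slapd]
root_password = $password
self_sign_cert = True
instance_name = $name

[backend-userroot]
sample_entries = yes
suffix = $suffix
EOF
)
```

For example, write_instance_inf LDAP1 dc=LDAP1,dc=COM PASSWORD LDAP1.inf produces a file you can pass to dscreate from-file as in Step 2. The function runs in a subshell, so the umask change does not leak into the calling shell.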

If you forget the name of your instance, use dsctl to list all instances:

    > dsctl -l
    slapd-LDAP1

6.2.3 Creating a 389 Directory Server instance from a template

You can auto-create a template for a new 389 Directory Server instance with the dscreate command. This creates a template that you can use without making any changes, for testing. For production systems, review and change it to suit your own requirements. All of the defaults are documented in the template file, and commented out. To make changes, uncomment the default and enter your own value.

The following example prints the template to stdout:

    > dscreate create-template

This is good for a quick review of the template, but you must create a file to use in creating your new 389 Directory Server instance. You can name this file anything you want:

    > dscreate create-template TEMPLATE.txt

This is a snippet from the new file:

    # full_machine_name (str)
    # Description: Sets the fully qualified hostname (FQDN) of this system. When
    # installing this instance with GSSAPI authentication behind a load balancer, set
    # this parameter to the FQDN of the load balancer and, additionally, set
    # "strict_host_checking" to "false".
    # Default value: ldapserver1.test.net
    ;full_machine_name = ldapserver1.test.net

    # selinux (bool)
    # Description: Enables SELinux detection and integration during the installation
    # of this instance. If set to "True", dscreate auto-detects whether SELinux is
    # enabled. Set this parameter only to "False" in a development environment.
    # Default value: True
    ;selinux = True

The template automatically configures some options from your existing environment, for example, the system's fully-qualified domain name, which is called full_machine_name in the template. Use this file with no changes to create a new instance:

    > sudo dscreate from-file TEMPLATE.txt

This creates a new instance named localhost, and automatically starts it after creation:

    > sudo dsctl localhost status
    Instance "localhost" is running

The default values create a fully operational instance, but there are some values you might want to change.

The instance name cannot be changed after it has been created. It is better to create your own instance name, rather than using the default, to avoid confusion and to enable a better understanding of how it all works. To do this, uncomment the ;instance_name = localhost line and change localhost to your chosen name. In the following examples, the instance name is LDAP1.

Another useful change is to populate your new instance with sample users and groups. Uncomment ;sample_entries = no and change no to yes. This creates the demo_user and demo_group.

Set your own password by uncommenting ;root_password, and replacing the default password with your own.

The template does not create a default suffix, so you should configure your own on the suffix line, like the following example:

    suffix = dc=LDAP1,dc=COM

You can cleanly remove any instance and start over with dsctl:

    > sudo dsctl LDAP1 remove --do-it
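The uncomment-and-change edits described in this section can also be scripted. The following sketch assumes the template uses the ";key = value" form shown in the snippet; set_inf_option is a hypothetical helper, and it assumes the new value contains no "|", "&", or backslash because the value is pasted into a sed replacement.

```shell
# set_inf_option FILE KEY VALUE
# Hypothetical helper: turn ";KEY = default" (or an active "KEY = old" line)
# into "KEY = VALUE". Lines starting with "#" are left untouched.
set_inf_option() (
    file=$1 key=$2 value=$3
    tmp=$(mktemp) || exit 1
    # An optional leading ";" is matched so commented defaults get enabled.
    sed "s|^;\{0,1\}${key} *=.*|${key} = ${value}|" "$file" > "$tmp" &&
        cat "$tmp" > "$file"    # write back in place, keeping file permissions
    rc=$?
    rm -f "$tmp"
    exit "$rc"
)
```

For example, set_inf_option TEMPLATE.txt instance_name LDAP1 followed by set_inf_option TEMPLATE.txt sample_entries yes applies the first two changes described above. Review the resulting file before passing it to dscreate.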

6.2.4 Stopping and starting 389 Directory Server

The following examples use LDAP1 as the instance name.

Use systemd to manage your 389 Directory Server instance. Get the status of your instance:

    > systemctl status --no-pager --full dirsrv@LDAP1.service
    ● dirsrv@LDAP1.service - 389 Directory Server LDAP1.
       Loaded: loaded (/usr/lib/systemd/system/dirsrv@.service; enabled; vendor preset: disabled)
       Active: active (running) since Thu 2021-03-11 08:55:28 PST; 2h 7min ago
      Process: 4451 ExecStartPre=/usr/lib/dirsrv/ds_systemd_ask_password_acl /etc/dirsrv/slapd-LDAP1/dse.ldif (code=exited, status=0/SUCCESS)
     Main PID: 4456 (ns-slapd)
       Status: "slapd started: Ready to process requests"
        Tasks: 26
       CGroup: /system.slice/system-dirsrv.slice/dirsrv@LDAP1.service
               └─4456 /usr/sbin/ns-slapd -D /etc/dirsrv/slapd-LDAP1 -i /run/dirsrv/slapd-LDAP1.pid

Start, stop, and restart your LDAP server:

    > sudo systemctl start dirsrv@LDAP1.service
    > sudo systemctl stop dirsrv@LDAP1.service
    > sudo systemctl restart dirsrv@LDAP1.service

See Book “Reference”, Chapter 10 “The systemd daemon” for more information on using systemctl.

The dsctl command also starts and stops your server:

    > sudo dsctl LDAP1 status
    > sudo dsctl LDAP1 stop
    > sudo dsctl LDAP1 restart
    > sudo dsctl LDAP1 start

6.2.5 Configuring admin credentials for local administration

For local administration of the 389 Directory Server, you can create a .dsrc configuration file in the /root directory, allowing root and sudo users to administer the server without typing connection details with every command. Example 6.3 shows an example for local administration on the server, using LDAP1 and com for the suffix.

After creating your /root/.dsrc file, try a few administration commands, such as creating new users (see Section 6.5, “Managing LDAP users and groups”).

Example 6.3: A .dsrc file for local administration

    # /root/.dsrc file for administering the LDAP1 instance

    [LDAP1]
    uri = ldapi://%%2fvar%%2frun%%2fslapd-LDAP1.socket
    basedn = dc=LDAP1,dc=COM
    binddn = cn=Directory Manager

- [LDAP1]: This must specify your exact instance name.

- uri: In the URI, the slashes are replaced with %%2f.
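The slash replacement in the uri line is easy to get wrong by hand. The following sketch derives the URI from a socket path; ldapi_uri is a hypothetical helper, and the doubled percent sign simply follows Example 6.3 (a single % is the usual URL encoding; that the doubling is an escape required by the file's parser is an assumption).

```shell
# ldapi_uri SOCKET_PATH
# Hypothetical helper: print the ldapi:// URI for a socket path, writing
# each "/" as "%%2f" in the same way as Example 6.3.
ldapi_uri() (
    encoded=$(printf '%s' "$1" | sed 's|/|%%2f|g')
    printf 'ldapi://%s\n' "$encoded"
)
```

Calling ldapi_uri /var/run/slapd-LDAP1.socket prints the uri value used in Example 6.3.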

Important: New negation feature in sudoers.ldap

In sudo versions older than 1.9.9, negation in sudoers.ldap does not work for the sudoUser, sudoRunAsUser, or sudoRunAsGroup attributes. For example:

    # does not match all but joe
    # instead, it does not match anyone
    sudoUser: !joe

    # does not match all but joe
    # instead, it matches everyone including Joe
    sudoUser: ALL
    sudoUser: !joe

In sudo version 1.9.9 and higher, negation is enabled for the sudoUser attribute. See man 5 sudoers.ldap for more information.

6.3 Firewall configuration

The default TCP ports for 389 Directory Server are 389 and 636. TCP 389 is for unencrypted connections and STARTTLS. TCP 636 is for encrypted connections over TLS.

firewalld is the default firewall manager for SUSE Linux Enterprise. The following rules activate the ldap and ldaps firewall services:

    > sudo firewall-cmd --add-service=ldap --zone=internal
    > sudo firewall-cmd --add-service=ldaps --zone=internal
    > sudo firewall-cmd --runtime-to-permanent

Replace the zone with the appropriate zone for your server. See Section 6.9, “Importing TLS server certificates and keys” for information on securing your connections with TLS, and Section 24.3, “Firewalling basics” to learn about firewalld.

6.4 Backing up and restoring 389 Directory Server

389 Directory Server supports making offline and online backups. The dsctl command makes offline database backups, and the dsconf command makes online database backups. Back up the LDAP server configuration directory, to enable complete restoration in case of a major failure.

6.4.1 Backing up the LDAP server configuration

Your LDAP server configuration is in the directory /etc/dirsrv/slapd-INSTANCE_NAME. This directory contains certificates, keys, and the dse.ldif file. Make a compressed backup of this directory with the tar command:

    > sudo tar caf \
      config_slapd-INSTANCE_NAME-$(date +%Y-%m-%d_%H-%M-%S).tar.gz \
      /etc/dirsrv/slapd-INSTANCE_NAME/

When running tar, you may see the harmless informational message tar: Removing leading `/' from member names.

To restore a previous configuration, unpack it to the same directory:

1. (Optional) To avoid overwriting an existing configuration, move it:

       > sudo old /etc/dirsrv/slapd-INSTANCE_NAME/

2. Unpack the backup archive:

       > sudo tar -xvzf \
         config_slapd-INSTANCE_NAME-DATE.tar.gz

3. Copy it to /etc/dirsrv/slapd-INSTANCE_NAME:

       > sudo cp -r etc/dirsrv/slapd-INSTANCE_NAME \
         /etc/dirsrv/slapd-INSTANCE_NAME

6.4.2 Creating an offline backup of the LDAP database and restoring from it

The dsctl command makes offline backups. Stop the server:

    > sudo dsctl INSTANCE_NAME stop
    Instance "INSTANCE_NAME" has been stopped

Then make the backup using your instance name. The following example creates a backup archive at /var/lib/dirsrv/slapd-INSTANCE_NAME/bak/INSTANCE_NAME-DATE:

    > sudo dsctl INSTANCE_NAME db2bak
    db2bak successful

For example, on a test instance named ldap1 it looks like this:

    /var/lib/dirsrv/slapd-ldap1/bak/ldap1-2021_10_25_13_03_17

Restore from this backup, naming the directory containing the backup archive:

    > sudo dsctl INSTANCE_NAME bak2db \
      /var/lib/dirsrv/slapd-INSTANCE_NAME/bak/INSTANCE_NAME-DATE/
    bak2db successful

Then start the server:

    > sudo dsctl INSTANCE_NAME start
    Instance "INSTANCE_NAME" has been started
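The stop, back up, and start sequence can be wrapped so that the server is started again even when the backup step fails. The following is a sketch only: run and offline_backup are hypothetical helpers, DRY_RUN is a variable introduced here to print the commands instead of executing them, and the script is assumed to run as root.

```shell
# run CMD...: execute the command, or only print it when DRY_RUN is set.
run() {
    if [ -n "${DRY_RUN:-}" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# offline_backup INSTANCE: stop the server, make the backup, and always
# try to start the server again. Exits non-zero if any step failed.
offline_backup() (
    instance=$1
    run dsctl "$instance" stop && run dsctl "$instance" db2bak
    rc=$?
    run dsctl "$instance" start || rc=$?
    exit "$rc"
)
```

Setting DRY_RUN=1 before calling offline_backup LDAP1 lets you check the three dsctl commands and their order before running them for real.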

You can also create LDIF backups:

    > sudo dsctl INSTANCE_NAME db2ldif --replication userRoot
    ldiffile: /var/lib/dirsrv/slapd-INSTANCE_NAME/ldif/INSTANCE_NAME-userRoot-DATE.ldif
    db2ldif successful

Restore an LDIF backup with the name of the archive, then start the server:

    > sudo dsctl INSTANCE_NAME ldif2db userRoot \
      /var/lib/dirsrv/slapd-INSTANCE_NAME/ldif/INSTANCE_NAME-userRoot-DATE.ldif
    > sudo dsctl INSTANCE_NAME start

6.4.3 Creating an online backup of the LDAP database and restoring from it

Use the dsconf command to make an online backup of your LDAP database:

    > sudo dsconf INSTANCE_NAME backup create
    The backup create task has finished successfully

This creates /var/lib/dirsrv/slapd-INSTANCE_NAME/bak/INSTANCE_NAME-DATE.

Restore it:

    > sudo dsconf INSTANCE_NAME backup restore \
      /var/lib/dirsrv/slapd-INSTANCE_NAME/bak/INSTANCE_NAME-DATE

6.5 Managing LDAP users and groups

Use the dsidm command to create, remove, and manage users and groups.

6.5.1 Querying existing LDAP users and groups

The following examples show how to list your existing users and groups. The examples use the instance name LDAP1. Replace this with your instance name:

    > sudo dsidm LDAP1 user list
    > sudo dsidm LDAP1 group list

List all information on a single user:

    > sudo dsidm LDAP1 user get USER

List all information on a single group:

    > sudo dsidm LDAP1 group get GROUP

List members of a group:

    > sudo dsidm LDAP1 group members GROUP

6.5.2 Creating users and managing passwords

In the following example we create one user, wilber. The example server instance is named LDAP1, and the instance's suffix is dc=LDAP1,dc=COM.

Procedure 6.1: Creating LDAP users

1. The following example creates the user Wilber Fox on your 389 DS instance:

       > sudo dsidm LDAP1 user create --uid wilber \
         --cn wilber --displayName 'Wilber Fox' --uidNumber 1001 --gidNumber 101 \
         --homeDirectory /home/wilber

2. Verify by looking up your new user's distinguished name (fully qualified name to the directory object, which is guaranteed unique):

       > sudo dsidm LDAP1 user get wilber
       dn: uid=wilber,ou=people,dc=LDAP1,dc=COM
       [...]

   You need the distinguished name for actions such as changing the password for a user.

3. Create a password for new user wilber:

       > sudo dsidm LDAP1 account reset_password \
         uid=wilber,ou=people,dc=LDAP1,dc=COM

   Enter the new password for wilber twice.

   If the action was successful, you get the following message:

       reset password for uid=wilber,ou=people,dc=LDAP1,dc=COM

   Use the same command to change an existing password.

4. Verify that the user's password works:

       > ldapwhoami -D uid=wilber,ou=people,dc=LDAP1,dc=COM -W
       Enter LDAP Password: PASSWORD
       dn: uid=wilber,ou=people,dc=LDAP1,dc=COM
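When several users need to be created, the dsidm user create call from Step 1 can be generated from a list. The following sketch reads colon-separated lines in a hypothetical input format (uid:display name:uidNumber:gidNumber) and prints one command per user for review instead of running it; emit_user_commands is an illustrative name, and display names containing a single quote are not handled.

```shell
# emit_user_commands INSTANCE
# Hypothetical helper: read "uid:display name:uidNumber:gidNumber" lines
# from stdin and print the matching "dsidm ... user create" commands.
emit_user_commands() (
    instance=$1
    while IFS=: read -r uid display uidnum gidnum; do
        # Skip blank lines and lines starting with "#".
        case $uid in ''|'#'*) continue ;; esac
        printf "dsidm %s user create --uid %s --cn %s --displayName '%s' --uidNumber %s --gidNumber %s --homeDirectory /home/%s\n" \
            "$instance" "$uid" "$uid" "$display" "$uidnum" "$gidnum" "$uid"
    done
)
```

For the input line wilber:Wilber Fox:1001:101 and the instance LDAP1, this prints the command from Step 1 on a single line. After checking the output, you can run the printed commands with sudo.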

6.5.3 Creating and managing groups

After creating users, you can create groups, and then assign users to them. In the following examples, we create a group, SERVER_ADMINS, and assign the user wilber to this group. The example server instance is named LDAP1, and the instance's suffix is dc=LDAP1,dc=COM.

Procedure 6.2: Creating LDAP groups and assigning users to them

1. Create the group:

       > sudo dsidm LDAP1 group create

   You will be prompted for a group name. Enter your chosen group name, which in the following example is SERVER_ADMINS:

       Enter value for cn : SERVER_ADMINS

2. Add the user wilber (created in Procedure 6.1, “Creating LDAP users”) to the group:

       > sudo dsidm LDAP1 group add_member SERVER_ADMINS \
         uid=wilber,ou=people,dc=LDAP1,dc=COM
       added member: uid=wilber,ou=people,dc=LDAP1,dc=COM

6.5.4 Deleting users, groups, and removing users from groups

Use the dsidm command to delete users, remove users from groups, and delete groups. The following example removes our example user wilber from the SERVER_ADMINS group:

    > sudo dsidm LDAP1 group remove_member SERVER_ADMINS \
      uid=wilber,ou=people,dc=LDAP1,dc=COM

Delete a user, naming the user by distinguished name:

    > sudo dsidm LDAP1 user delete \
      uid=wilber,ou=people,dc=LDAP1,dc=COM

Delete a group:

    > sudo dsidm LDAP1 group delete SERVER_ADMINS

6.6 Using SSSD to manage LDAP authentication

The System Security Services Daemon (SSSD) manages authentication, identification, and access controls for remote users. This section describes how to use SSSD to manage authentication and identification for your 389 Directory Server.

SSSD mediates between your LDAP server and clients. It supports several provider back-ends, such as LDAP, Active Directory, and Kerberos. SSSD supports services, including SSH, PAM, NSS, and sudo. SSSD provides performance benefits and resilience through caching user IDs and credentials. Caching reduces the number of requests to your 389 DS server, and provides authentication and identity services when the back-ends are unavailable.

If the Name Service Cache Daemon (nscd) is running on your network, you should disable or remove it. nscd caches only the common name service requests, such as passwd, group, hosts, services, and netgroup, and will conflict with SSSD.

Your LDAP server is the provider, and your SSSD instance is the client of the provider. You may install SSSD on your 389 DS server, but installing it on a separate machine provides some resilience in case the 389 DS server becomes unavailable. Use the following procedure to install and configure an SSSD client. The example 389 DS instance name is LDAP1:

1. Install the sssd and sssd-ldap packages:

       > sudo zypper in sssd sssd-ldap

2. Back up the /etc/sssd/sssd.conf file, if it exists:

       > sudo old /etc/sssd/sssd.conf

3. Create your new SSSD configuration template. The allowed output file names are sssd.conf and ldap.conf. display sends the output to stdout. The following example creates a client configuration in /etc/sssd/sssd.conf. Because cd is a shell built-in and cannot be run through sudo directly, both commands run in one root shell:

       > sudo sh -c 'cd /etc/sssd && dsidm LDAP1 client_config sssd.conf'

4. Review the output and make any necessary changes to suit your environment. The following /etc/sssd/sssd.conf file demonstrates a working example:

       [sssd]
       services = nss, pam, ssh, sudo
       config_file_version = 2
       domains = default

       [nss]
       homedir_substring = /home

       [domain/default]
       # If you have large groups (for example, 50+ members),
       # you should set this to True
       ignore_group_members = False
       debug_level=3
       cache_credentials = True
       id_provider = ldap
       auth_provider = ldap
       access_provider = ldap
       chpass_provider = ldap
       ldap_schema = rfc2307bis
       ldap_search_base = dc=example,dc=com
       # We strongly recommend ldaps
       ldap_uri = ldaps://ldap.example.com
       ldap_tls_reqcert = demand
       ldap_tls_cacert = /etc/openldap/ldap.crt
       ldap_access_filter = (|(memberof=cn=<login group>,ou=Groups,dc=example,dc=com))
       enumerate = false
       ldap_user_member_of = memberof
       ldap_user_gecos = cn
       ldap_user_uuid = nsUniqueId
       ldap_group_uuid = nsUniqueId
       ldap_account_expire_policy = rhds
       ldap_access_order = filter, expire
       # add these lines to /etc/ssh/sshd_config
       # AuthorizedKeysCommand /usr/bin/sss_ssh_authorizedkeys
       # AuthorizedKeysCommandUser nobody
       ldap_user_ssh_public_key = nsSshPublicKey

5. Set file ownership to root, and restrict read-write permissions to root:

       > sudo chown root:root /etc/sssd/sssd.conf
       > sudo chmod 600 /etc/sssd/sssd.conf

6. Edit the /etc/nsswitch.conf configuration file on the SSSD server to include the following lines:

       passwd: compat sss
       group:  compat sss
       shadow: compat sss

7. Edit the PAM configuration on the SSSD server, modifying common-account-pc, common-auth-pc, common-password-pc, and common-session-pc. SUSE Linux Enterprise provides a command to modify all of these files at once, pam-config:

       > sudo pam-config -a --sss

8. Verify the modified configuration:

       > sudo pam-config -q --sss
       auth:
       account:
       password:
       session:

9. Copy /etc/dirsrv/slapd-LDAP1/ca.crt from the 389 DS server to /etc/openldap/certs on your SSSD server, then rehash it:

       > sudo c_rehash /etc/openldap/certs

10. Enable and start SSSD:

        > sudo systemctl enable --now sssd
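Before starting SSSD, it can be worth confirming that the permission change from the procedure took effect. The following sketch checks only the mode bits, not the ownership; conf_is_private is a hypothetical helper that relies on the permission string printed by ls -ld.

```shell
# conf_is_private FILE
# Hypothetical helper: succeed only if FILE is a regular file with mode 600,
# that is, readable and writable by its owner and by nobody else.
conf_is_private() {
    # The first ten characters of "ls -ld" output are the type and mode bits.
    [ "$(ls -ld "$1" 2>/dev/null | cut -c1-10)" = "-rw-------" ]
}
```

For example, sudo sh -c '. ./check.sh && conf_is_private /etc/sssd/sssd.conf && echo ok' prints ok when the mode is 600, assuming you saved the function in a file named check.sh.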

See Chapter 5, Setting up authentication clients using YaST for information on managing the sssd.service with systemctl.

6.7 Managing modules

Use the following command to list all available modules, enabled and disabled. Use your server's hostname rather than the instance name of your 389 Directory Server, like the following example hostname of LDAPSERVER1:

    > sudo dsconf -D "cn=Directory Manager" ldap://LDAPSERVER1 plugin list
    Enter password for cn=Directory Manager on ldap://LDAPSERVER1: PASSWORD

    7-bit check
    Account Policy Plugin
    Account Usability Plugin
    ACL Plugin
    ACL preoperation
    [...]

The following command enables the MemberOf plugin referenced in Section 6.6, “Using SSSD to manage LDAP authentication”:

    > sudo dsconf -D "cn=Directory Manager" ldap://LDAPSERVER1 plugin memberof enable

Note that the plugin names used in commands are lowercase, so they are different from how they appear when you list them. If you make a mistake with a plugin name, you will see a helpful error message:

dsconf instance plugin: error: invalid choice: 'MemberOf' (choose from 'memberof', 'automember', 'referential-integrity', 'root-dn', 'usn', 'account-policy', 'attr-uniq', 'dna', 'linked-attr', 'managed-entries', 'pass-through-auth', 'retro-changelog', 'posix-winsync', 'contentsync', 'list', 'show', 'set')
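In scripts, you can check a plugin name before calling dsconf. The following sketch hard-codes the plugin names from the error message above and leaves out list, show, and set, which read as subcommands rather than plugins. The function name is_plugin_command is hypothetical, and the list may differ between 389 Directory Server versions.

```shell
# is_plugin_command NAME
# Hypothetical helper: succeed if NAME is one of the plugin names that the
# dsconf error message lists. Matching is case-sensitive, like dsconf.
is_plugin_command() {
    case $1 in
        memberof|automember|referential-integrity|root-dn|usn) return 0 ;;
        account-policy|attr-uniq|dna|linked-attr|managed-entries) return 0 ;;
        pass-through-auth|retro-changelog|posix-winsync|contentsync) return 0 ;;
        *) return 1 ;;
    esac
}
```

With this check, is_plugin_command memberof succeeds, while is_plugin_command MemberOf fails, mirroring the error shown above.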

After enabling a plugin, it is necessary to restart the server. The systemd unit is named after the instance (LDAP1 in the earlier examples), not the hostname:

    > sudo systemctl restart dirsrv@LDAP1.service

To avoid having to restart the server, set the nsslapd-dynamic-plugins parameter to on:

    > sudo dsconf -D "cn=Directory Manager" ldap://LDAPSERVER1 config replace \
      nsslapd-dynamic-plugins=on
    Enter password for cn=Directory Manager on ldap://LDAPSERVER1: PASSWORD

    Successfully replaced "nsslapd-dynamic-plugins"

6.8 Migrating to 389 Directory Server from OpenLDAP

OpenLDAP is deprecated. It has been replaced by 389 Directory Server. SUSE provides the openldap_to_ds utility to assist with migration, included in the 389-ds package.

The openldap_to_ds utility is designed to automate as much of the migration as possible. However, every LDAP deployment is different, and it is not possible to write a tool that satisfies all situations. It is likely there will be some manual steps to perform, and you should test your migration procedure thoroughly before attempting a production migration.

6.8.1 Testing migration from OpenLDAP

There are enough differences between OpenLDAP and 389 Directory Server that migration will probably involve repeated testing and adjustments. It can be helpful to do a quick migration test to get an idea of what steps will be necessary for a successful migration.

Prerequisites:

- A running 389 Directory Server instance.

- An OpenLDAP slapd configuration file or directory in dynamic ldif format.

- An ldif file backup of your OpenLDAP database.

If your slapd configuration is not in dynamic ldif format, create a dynamic copy with slaptest. Create a slapd.d directory, for example /root/slapd.d/, then run the following command:

    > sudo slaptest -f /etc/openldap/slapd.conf -F /root/slapd.d

This results in several files similar to the following example:

    > sudo ls /root/slapd.d/*
    /root/slapd.d/cn=config.ldif

    /root/slapd.d/cn=config:
    cn=module{0}.ldif  cn=schema.ldif                 olcDatabase={0}config.ldif
    cn=schema          olcDatabase={-1}frontend.ldif  olcDatabase={1}mdb.ldif

Create one ldif file per suffix. In the following examples, the suffix is dc=LDAP1,dc=COM. If you are using the /etc/openldap/slapd.conf format, use the following command to create the ldif backup file:

    > sudo slapcat -f /etc/openldap/slapd.conf -b dc=LDAP1,dc=COM \
      -l /root/LDAP1-COM.ldif
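If you have several suffixes, a consistent file name per suffix helps keep the backups apart. The following sketch derives a name in the style of the example above; ldif_name_for_suffix is a hypothetical helper, and it assumes the suffix consists only of dc= components.

```shell
# ldif_name_for_suffix SUFFIX
# Hypothetical naming helper, for example dc=LDAP1,dc=COM -> LDAP1-COM.ldif.
# Assumes the suffix is made up only of dc= RDNs.
ldif_name_for_suffix() (
    base=$(printf '%s' "$1" | sed -e 's/dc=//g' -e 's/,/-/g')
    printf '%s.ldif\n' "$base"
)
```

You could then pass "/root/$(ldif_name_for_suffix dc=LDAP1,dc=COM)" as the -l argument of slapcat for each suffix in turn.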

Use openldap_to_ds to analyze the configuration and files, and show a migration plan without changing anything:

    > sudo openldap_to_ds LDAP1 \
      /root/slapd.d /root/LDAP1-COM.ldif

This performs a dry run and does not change anything. The output looks like this:

Examining OpenLDAP Configuration ...

Completed OpenLDAP Configuration Parsing.

Examining Ldifs ...

Completed Ldif Metadata Parsing.

The following migration steps will be performed:

* Schema Skip Unsupported Attribute -> otherMailbox (0.9.2342.19200300.100.1.22)

* Schema Skip Unsupported Attribute -> dSAQuality (0.9.2342.19200300.100.1.49)

* Schema Skip Unsupported Attribute -> singleLevelQuality (0.9.2342.19200300.100.1.50)

* Schema Skip Unsupported Attribute -> subtreeMinimumQuality (0.9.2342.19200300.100.1.51)

* Schema Skip Unsupported Attribute -> subtreeMaximumQuality (0.9.2342.19200300.100.1.52)

* Schema Create Attribute -> suseDefaultBase (SUSE.YaST.ModuleConfig.Attr:2)

* Schema Create Attribute -> suseNextUniqueId (SUSE.YaST.ModuleConfig.Attr:3)

[...]

* Schema Create ObjectClass -> suseDhcpConfiguration (SUSE.YaST.ModuleConfig.OC:10)

* Schema Create ObjectClass -> suseMailConfiguration (SUSE.YaST.ModuleConfig.OC:11)

* Database Reindex -> dc=example,dc=com

* Database Import Ldif -> dc=example,dc=com from example.ldif -

excluding entry attributes = [{'structuralobjectclass', 'entrycsn'}]

No actions taken. To apply migration plan, use '--confirm'

The following example performs the migration, and the output looks different from the dry run:

> sudo openldap_to_ds LDAP1 /root/slapd.d /root/LDAP1-COM.ldif --confirm
Starting Migration ... This may take some time ...
migration: 1 / 40 complete ...
migration: 2 / 40 complete ...
migration: 3 / 40 complete ...
[...]
Index task index_all_05252021_120216 completed successfully
post: 39 / 40 complete ...
post: 40 / 40 complete ...
🎉 Migration complete!
----------------------
You should now review your instance configuration and data:
 * [ ] - Create/Migrate Database Access Controls (ACI)
 * [ ] - Enable and Verify TLS (LDAPS) Operation
 * [ ] - Schedule Automatic Backups
 * [ ] - Verify Accounts Can Bind Correctly
 * [ ] - Review Schema Inconistent ObjectClass -> pilotOrganization (0.9.2342.19200300.100.4.20)
 * [ ] - Review Database Imported Content is Correct -> dc=ldap1,dc=com

When the migration is complete, openldap_to_ds creates a checklist of post-migration tasks that must be completed. It is a best practice to document all of your post-migration steps, so that you can reproduce them in your post-production procedures. Then test clients and application integrations to the migrated 389 Directory Server instance.
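The dry run followed by a confirmed run can be wrapped in a small script, so that the plan is always displayed before it is applied. The following is a minimal sketch, not a supported tool: the function name migrate_with_review and the MIGRATE_CMD variable are hypothetical, and only the openldap_to_ds arguments shown in this section are used.

```shell
#!/bin/sh
# Hypothetical helper: show the openldap_to_ds migration plan, then apply it
# only after explicit confirmation. MIGRATE_CMD can be overridden for testing.
MIGRATE_CMD="${MIGRATE_CMD:-openldap_to_ds}"

migrate_with_review() (
    instance="$1"; slapd_d="$2"; ldif="$3"

    # Dry run: prints the plan and changes nothing.
    $MIGRATE_CMD "$instance" "$slapd_d" "$ldif" || exit 1

    printf 'Apply this migration plan? [y/N] '
    read -r answer
    case "$answer" in
        y|Y) $MIGRATE_CMD "$instance" "$slapd_d" "$ldif" --confirm ;;
        *)   echo "Aborted; nothing was changed." ;;
    esac
)
```

Run as root, a call such as migrate_with_review LDAP1 /root/slapd.d /root/LDAP1-COM.ldif prints the plan and waits for an answer. Any answer other than y leaves the instance untouched.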

Important: Develop a rollback plan

It is essential to develop a rollback plan in case of any failures. This plan should define a successful migration, the tests to determine what worked and what needs to be fixed, which steps are critical, what can be deferred until later, how to decide when to undo any changes, how to undo them with minimal disruption, and which other teams need to be involved.

Due to the variability of deployments, it is difficult to provide a recipe for a successful production migration. Once you have thoroughly tested the migration process and verified that you will get good results, there are some general steps that will help:

48 hours before the change, lower all hostname/DNS TTLs to 5 minutes, to allow a fast rollback to your existing OpenLDAP deployment.

Pause all data synchronization and incoming data processes, so that data in the OpenLDAP environment does not change during the migration process.

Have all 389 Directory Server hosts ready for deployment before the migration.

Have your test migration documentation readily available.
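Before the change window, it can be useful to confirm that the lowered TTLs are actually being served. The following sketch assumes the usual answer format of dig +noall +answer (name, TTL, class, type, data); the function name ttl_at_most and the host name in the usage note are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `dig +noall +answer` output on stdin and succeed
# only if at least one record was seen and every TTL (second field) is at or
# below the given limit in seconds.
ttl_at_most() (
    limit="$1"
    awk -v limit="$limit" '
        NF >= 5 { seen = 1; if ($2 + 0 > limit) bad = 1 }
        END     { exit (seen && !bad) ? 0 : 1 }
    '
)
```

For example, dig +noall +answer ldap.example.com | ttl_at_most 300 succeeds only when every returned record has a TTL of five minutes or less. Query the authoritative name server if you want to avoid seeing a cached, partially expired TTL.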

6.8.2 Planning your migration

As OpenLDAP is a “box of parts” and highly customizable, it is not possible to prescribe a “one size fits all” migration. It is necessary to assess your current environment and configuration with OpenLDAP and other integrations. This includes, but is not limited to:

Replication topology

High availability and load balancer configurations

External data flows (IGA, HR, AD, etc.)

Configured overlays (plug-ins in 389 Directory Server)

Client configuration and expected server features

Customized schema

TLS configuration

Plan what your 389 Directory Server deployment will look like in the end. This includes the same list, except that overlays are replaced with plug-ins. Once you have assessed your current environment, and planned what your 389 Directory Server environment will look like, you can then form a migration plan. We recommend building the 389 Directory Server environment in parallel to your OpenLDAP environment to allow switching between them.

Migrating from OpenLDAP to 389 Directory Server is a one-way migration. There are enough differences between the two that they cannot interoperate, and there is no migration path from 389 Directory Server to OpenLDAP. The following table highlights the major similarities and differences.

| Feature | OpenLDAP | 389 Directory Server | Compatible |
|---|---|---|---|
| Two-way replication | SyncREPL | 389 DS-specific system | No |
| MemberOf | Overlay | Plug-in | Yes, simple configurations only |
| External Auth | Proxy | - | No |
| Active Directory Synchronization | - | Winsync Plug-in | No |
| Inbuilt Schema | OLDAP Schemas | 389 Schemas | Yes, supported by migration tool |
| Custom Schema | OLDAP Schemas | 389 Schemas | Yes, supported by migration tool |
| Database Import | LDIF | LDIF | Yes, supported by migration tool |
| Password hashes | Varies | Varies | Yes, all formats supported excluding Argon2 |
| OpenLDAP to 389 DS replication | - | - | No mechanism to replicate to 389 DS is possible |
| Time-based one-time password (TOTP) | TOTP overlay | - | No, currently not supported |
| entryUUID | Part of OpenLDAP | Plug-in | Yes |

6.9 Importing TLS server certificates and keys

You can manage your CA certificates and keys for 389 Directory Server with the following command line tools: certutil, openssl, and pk12util.

For testing purposes, you can use the self-signed certificate that dscreate creates when you create a new 389 DS instance. Find the certificate at /etc/dirsrv/slapd-INSTANCE-NAME/ca.crt.

For production environments, it is a best practice to use a third-party certificate authority, such as Let's Encrypt, CAcert.org, SSL.com, or another CA of your choice. Request a server certificate, a client certificate, and a root certificate.

Before you can import an existing private key and certificate into the NSS database, you need to create a bundle of the private key and the server certificate. This results in a *.p12 file.

Important: *.p12 file and friendly name

When creating the PKCS12 bundle, you must encode Server-Cert as the friendly name in the *.p12 file. Otherwise the TLS connection will fail, because the 389 Directory Server searches for this exact string.

The friendly name cannot be changed after you import the *.p12 file into the NSS database.

Use the following command to create the PKCS12 bundle with the required friendly name:

> sudo openssl pkcs12 -export -in SERVER.crt \
  -inkey SERVER.key \
  -out SERVER.p12 -name Server-Cert

Replace SERVER.crt with the server certificate and SERVER.key with the private key to be bundled. Use -out to specify the name of the *.p12 file. Use -name to set the friendly name, which must be Server-Cert.

Before you can import the file into the NSS database, you need to obtain its password. The password is stored in the pwdfile.txt file in the /etc/dirsrv/slapd-INSTANCE-NAME/ directory.

Now import the SERVER.p12 file into your 389 DS NSS database:

> sudo dsctl INSTANCE_NAME tls remove-cert Self-Signed-CA
> sudo pk12util -i SERVER.p12 \
  -d /etc/dirsrv/slapd-INSTANCE-NAME/cert9.db
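Because the friendly name cannot be corrected after the import, it is worth checking the bundle first. The following sketch assumes that openssl pkcs12 prints each certificate's bag attributes, including a friendlyName line, on standard output; the function name has_server_cert_name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `openssl pkcs12 -in FILE -nokeys` output on stdin
# and succeed only if a bag carries exactly the friendly name Server-Cert.
has_server_cert_name() {
    grep -Eq '^[[:space:]]*friendlyName:[[:space:]]*Server-Cert[[:space:]]*$'
}
```

For example, openssl pkcs12 -in SERVER.p12 -nokeys | has_server_cert_name && echo "friendly name OK" prompts for the bundle password and prints the message only when the name matches. The match is case-sensitive, as the server looks for the exact string.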

6.10 Setting up replication

389 Directory Server supports replicating its database content between multiple servers. Depending on the type of replication, this provides:

Faster performance and response times

Fault tolerance and failover

Load balancing

High availability

A database is the smallest unit of a directory that can be replicated. You can replicate an entire database, but not a subtree within a database. One database must correspond to one suffix. You cannot replicate a suffix that is distributed over two or more databases.

A replica that sends data to another replica is a supplier. A replica that receives data from a supplier is a consumer. Replication is always initiated by the supplier, and a single supplier can send data to multiple consumers. Usually the supplier is a read-write replica, and the consumer is read-only, except in the case of multi-supplier replication. In multi-supplier replication the suppliers are both suppliers and consumers of the same data.

6.10.1 Asynchronous writes

389 DS manages replication differently than other databases. Replication is asynchronous, and eventually consistent. This means:

Any write or change to a single server is immediately accepted.

There is a delay between a write finishing on one server, and then replicating and being visible on other servers.

If that write conflicts with writes on other servers, it may be rolled back at some point in the future.

Not all servers may show identical content at the same time due to replication delay.

In general, as LDAP is "low-write", these factors mean that all servers are at least up to a common baseline of a known consistent state. Small changes occur on top of this baseline, so many of these aspects of delayed replication are not perceived in day to day usage.

6.10.2 Designing your topology

Consider the following factors when you are designing your replication topology.

The need for replication: high availability, geo-location, read scaling, or a combination of all.

How many replicas (nodes, servers) you plan to have in your topology.

Direction of data flows, both inside of the topology, and data flowing into the topology.

How clients will balance across nodes of the topology for their requests (multiple LDAP URIs, SRV records, load balancers).

These factors all affect how you may create your topology. (See Section 6.10.3, “Example replication topologies” for some topology examples.)
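As an illustration of the "multiple LDAP URIs" option, the following sketch builds a URI list from replica host names. The function name ldaps_uri_list and the host names are hypothetical, and the usage note assumes that the OpenLDAP client tools accept a whitespace-separated URI list with -H.

```shell
#!/bin/sh
# Hypothetical helper: turn replica host names into a space-separated list of
# ldaps:// URIs that a client can try in order.
ldaps_uri_list() (
    uris=""
    for host in "$@"; do
        uris="${uris:+$uris }ldaps://$host"
    done
    printf '%s\n' "$uris"
)
```

A client could then be pointed at both suppliers, for example with ldapsearch -H "$(ldaps_uri_list rw1.example.com rw2.example.com)" -x -b dc=example,dc=com -s base. This gives simple failover on the client side; it does not distribute load evenly, which is what SRV records or a load balancer are for.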

6.10.3 Example replication topologies

The following sections provide examples of replication topologies, using two to six 389 Directory Server nodes. The maximum number of supported supplier replicas in a topology is twenty. Operational experience shows the optimal number for replication efficiency is a maximum of eight.

6.10.3.1 Two replicas

Example 6.4: Two supplier replicas

┌────┐       ┌────┐
│ S1 │◀─────▶│ S2 │
└────┘       └────┘

In Example 6.4, “Two supplier replicas” there are two replicas, S1 and S2, which replicate bi-directionally between each other, so they are both suppliers and consumers. S1 and S2 could be in separate data centers, or in the same data center. Clients can balance across the servers using LDAP URIs, a load balancer, or DNS SRV records. This is the simplest topology for high availability. Note that each server needs to be able to provide 100% of client load, in case the other server is offline for any reason. A two-node replication is generally not adequate for horizontal read scaling, as a single node will handle all read requests if the other node is offline.

Note: Default topology

The two-node topology should be considered the default topology, because it is the simplest to manage. You can expand your topology over time, as necessary.

6.10.3.2 Four supplier replicas

Example 6.5: Four supplier replicas

┌────┐       ┌────┐
│ S1 │◀─────▶│ S2 │
└────┘       └────┘
   ▲            ▲
   │            │
   ▼            ▼
┌────┐       ┌────┐
│ S3 │◀─────▶│ S4 │
└────┘       └────┘

Example 6.5, “Four supplier replicas” has four supplier replicas, which all synchronize to each other. These could be in four data centers, or two servers per data center. In the case of one node per data center, each node should be able to support 100% of client load. When there are two per data center, each one only needs to scale to 50% of the client load.

6.10.3.3 Six replicas

Example 6.6: Six replicas

                  ┌────┐       ┌────┐
                  │ S1 │◀─────▶│ S2 │
                  └────┘       └────┘
                     ▲            ▲
                     │            │
   ┌────────────┬────┴────────────┴─────┬────────────┐
   │            │                       │            │
   ▼            ▼                       ▼            ▼
┌────┐       ┌────┐                  ┌────┐       ┌────┐
│ S3 │◀─────▶│ S4 │                  │ S5 │◀─────▶│ S6 │
└────┘       └────┘                  └────┘       └────┘

In Example 6.6, “Six replicas”, each pair is in a separate location. S1 and S2 are the suppliers, and S3, S4, S5, and S6 are consumers of S1 and S2. Each pair of servers replicate to each other. S3, S4, S5, and S6 can accept writes, though most of the replication is done through S1 and S2. This setup provides geographic separation for high availability and scaling.

6.10.3.4 Six replicas with read-only consumers

Example 6.7: Six replicas with read-only consumers

             ┌────┐       ┌────┐
             │ S1 │◀─────▶│ S2 │
             └────┘       └────┘
                │            │
                │            │
   ┌────────────┼────────────┼────────────┐
   │            │            │            │
   ▼            ▼            ▼            ▼
┌────┐       ┌────┐       ┌────┐       ┌────┐
│ S3 │       │ S4 │       │ S5 │       │ S6 │
└────┘       └────┘       └────┘       └────┘

In Example 6.7, “Six replicas with read-only consumers”, S1 and S2 are the suppliers, and the other four servers are read-only consumers. All changes occur on S1 and S2, and are propagated to the four replicas. Read-only consumers can be configured to store only a subset of the database, or partial entries, to limit data exposure. You could have a fractional read-only server in a DMZ, for example, so that if data is exposed, changes cannot propagate back to the other replicas.

6.10.4 Terminology

In the example topologies we have seen that 389 DS can take on a number of roles in a topology. The following list clarifies the terminology.

- Replica

An instance of 389 DS with an attached database.

- Read-write replica

A replica with a full copy of a database, that accepts read and write operations.

- Read-only replica

A replica with a full copy of a database, that only accepts read operations.

- Fractional read-only replica

A replica with a partial copy of a database, that only accepts read operations.

- Supplier

A replica that supplies data from its database to another replica.

- Consumer

A replica that receives data from another replica to write into its database.

- Replication agreement

The configuration of a server defining its supplier and consumer relation to another replica.

- Topology

A set of replicas connected via replication agreements.

- Replica ID

A unique identifier of the 389 Directory Server instance within the replication topology.

- Replication manager

An account with replication rights in the directory.

6.10.5 Configuring replication

The first example sets up a two-node bi-directional replication with a single read-only server, as a minimal starting example. In the following examples, the host names of the two read-write nodes are RW1 and RW2, and the read-only server is RO3. (Of course you must use your own host names.)

All servers should have a backend with an identical suffix. Only one server, RW1, needs an initial copy of the database.

6.10.5.1 Configuring two-node replication

The following commands configure the read-write replicas in a two-node setup (Example 6.4, “Two supplier replicas”), with the hostnames RW1 and RW2. (Remember to use your own hostnames.)

Warning: Create a strong replication manager password

The replication manager should be considered equivalent to the directory manager, in terms of security and access, and should have a very strong password.

If you create different replication manager passwords for each server, be sure to keep track of which password belongs to which server. For example, when you configure the outbound connection in RW1's replication agreement, you need to set the replication manager password to the RW2 replication manager password.

First, configure RW1:

> sudo dsconf INSTANCE-NAME replication create-manager
> sudo dsconf INSTANCE-NAME replication enable \
  --suffix dc=example,dc=com \
  --role supplier --replica-id 1 --bind-dn "cn=replication manager,cn=config"

Configure RW2:

> sudo dsconf INSTANCE-NAME replication create-manager
> sudo dsconf INSTANCE-NAME replication enable \
  --suffix dc=example,dc=com \
  --role supplier --replica-id 2 --bind-dn "cn=replication manager,cn=config"

This will create the replication metadata required on RW1 and RW2. Note the difference in the replica-id between the two servers. This also creates the replication manager account, which is an account with replication rights for authenticating between the two nodes.
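When more suppliers are added later, each one needs its own replica ID. The following sketch only prints the enable command for each supplier, numbering the IDs from 1, so that the result can be reviewed before anything is run. The function name print_enable_cmds is hypothetical; the dsconf options are the ones used in the commands above.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) one "replication enable" command per
# supplier host, numbering replica IDs 1, 2, 3, ... so that none is reused.
print_enable_cmds() (
    suffix="$1"; shift
    id=1
    for host in "$@"; do
        printf '# on %s\n' "$host"
        printf 'dsconf INSTANCE-NAME replication enable --suffix %s --role supplier --replica-id %d --bind-dn "cn=replication manager,cn=config"\n' "$suffix" "$id"
        id=$((id + 1))
    done
)
```

Calling print_enable_cmds dc=example,dc=com RW1 RW2 prints a comment line and a command for each host. Keeping this list in your deployment notes also documents which ID belongs to which server, which is needed again if a replica is removed.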

RW1 and RW2 are now both configured to have replication metadata. The next step is to create the first agreement for outbound data from RW1 to RW2.

> sudo dsconf INSTANCE-NAME repl-agmt create \
  --suffix dc=example,dc=com \
  --host=RW2 --port=636 --conn-protocol LDAPS --bind-dn "cn=replication manager,cn=config" \
  --bind-passwd PASSWORD --bind-method SIMPLE RW1_to_RW2

Data will not flow from RW1 to RW2 until after a full synchronization of the database, which is called an initialization or reinit. This will reset all database content on RW2 to match the content of RW1. Run the following command to trigger a reinit of the data:

> sudo dsconf INSTANCE-NAME repl-agmt init \
  --suffix dc=example,dc=com RW1_to_RW2

Check the status by running this command on RW1:

> sudo dsconf INSTANCE-NAME repl-agmt init-status \
  --suffix dc=example,dc=com RW1_to_RW2

When it is finished, you should see an "Agreement successfully initialized" message. If you get an error message, check the errors log. Otherwise, you should see the identical content from RW1 on RW2.
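For large databases the initialization can take a while, so the status check may need to be repeated. The following sketch polls init-status until the success message quoted above appears. The function name wait_for_init, the DSCONF variable, and the default of 30 attempts at 10-second intervals are assumptions for illustration.

```shell
#!/bin/sh
# Hypothetical helper: poll "repl-agmt init-status" until the success message
# appears or the attempts run out. DSCONF can be overridden for testing.
DSCONF="${DSCONF:-dsconf}"

wait_for_init() (
    instance="$1"; suffix="$2"; agmt="$3"
    tries="${4:-30}"; delay="${5:-10}"
    while [ "$tries" -gt 0 ]; do
        if $DSCONF "$instance" repl-agmt init-status --suffix "$suffix" "$agmt" \
            | grep -q 'Agreement successfully initialized'; then
            exit 0
        fi
        tries=$((tries - 1))
        sleep "$delay"
    done
    exit 1
)
```

Run as root on RW1, wait_for_init INSTANCE-NAME dc=example,dc=com RW1_to_RW2 returns success once the agreement is initialized. A non-zero result only means the message was not seen in time; check the errors log to find out why.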

Finally, to make this bi-directional, configure a replication agreement from RW2 outbound to RW1:

> sudo dsconf INSTANCE-NAME repl-agmt create \
  --suffix dc=example,dc=com \
  --host=RW1 --port=636 --conn-protocol LDAPS \
  --bind-dn "cn=replication manager,cn=config" --bind-passwd PASSWORD \
  --bind-method SIMPLE RW2_to_RW1

Changes made on either RW1 or RW2 will now be replicated to the other. Check replication status on either server with the following command:

> sudo dsconf INSTANCE-NAME repl-agmt status \
  --suffix dc=example,dc=com \
  --bind-dn "cn=replication manager,cn=config" \
  --bind-passwd PASSWORD RW2_to_RW1

6.10.5.2 Configuring a read-only node

To create a read-only node, start by creating the replication manager account and metadata. The hostname of the example server is RO3:

Warning: Create a strong replication manager password

The replication manager should be considered equivalent to the directory manager, in terms of security and access, and should have a very strong password.

If you create different replication manager passwords for each server, be sure to keep track of which password belongs to which server. For example, when you configure the outbound connection in RW1's replication agreement, you need to set the replication manager password to the RW2 replication manager password.

> sudo dsconf INSTANCE_NAME replication create-manager
> sudo dsconf INSTANCE_NAME \
  replication enable --suffix dc=EXAMPLE,dc=COM \
  --role consumer --bind-dn "cn=replication manager,cn=config"

Note that for a read-only replica you do not provide a replica-id, and the role is set to consumer. This allocates a special read-only replica-id for all read-only replicas. After the read-only replica is created, add the replication agreements from RW1 and RW2 to the read-only instance. The following example is on RW1:

> sudo dsconf INSTANCE_NAME \
  repl-agmt create --suffix dc=EXAMPLE,dc=COM \
  --host=RO3 --port=636 --conn-protocol LDAPS \
  --bind-dn "cn=replication manager,cn=config" --bind-passwd PASSWORD \
  --bind-method SIMPLE RW1_to_RO3

The following example, on RW2, configures the replication agreement between RW2 and RO3:

> sudo dsconf INSTANCE_NAME repl-agmt create \
  --suffix dc=EXAMPLE,dc=COM \
  --host=RO3 --port=636 --conn-protocol LDAPS \
  --bind-dn "cn=replication manager,cn=config" --bind-passwd PASSWORD \
  --bind-method SIMPLE RW2_to_RO3

After these steps are completed, you can use either RW1 or RW2 to perform the initialization of the database on RO3. The following example initializes RO3 from RW2:

> sudo dsconf INSTANCE_NAME repl-agmt init \
  --suffix dc=EXAMPLE,dc=COM RW2_to_RO3

6.10.6 Monitoring and healthcheck

The dsconf command includes a monitoring option. You can check the status of each replica directly on the replicas, or from other hosts. The following example commands are run on RW1, checking the status on two remote replicas, and then on itself:

> sudo dsconf -D "cn=Directory Manager" ldap://RW2 replication monitor
> sudo dsconf -D "cn=Directory Manager" ldap://RO3 replication monitor
> sudo dsconf -D "cn=Directory Manager" ldap://RW1 replication monitor

The dsctl command has a healthcheck option. The following example runs a replication healthcheck on the local 389 DS instance:

> sudo dsctl INSTANCE_NAME healthcheck --check replication

Use the -v option for verbosity, to see what the healthcheck examines:

> sudo dsctl -v INSTANCE_NAME healthcheck --check replication

Run dsctl INSTANCE_NAME healthcheck with no options for a general health check.

Run the following command to see a list of the checks that healthcheck performs:

> sudo dsctl INSTANCE_NAME healthcheck --list-checks
config:hr_timestamp
config:passwordscheme
backends:userroot:cl_trimming
backends:userroot:mappingtree
backends:userroot:search
backends:userroot:virt_attrs
encryption:check_tls_version
fschecks:file_perms
[...]

You can run one or more of the individual checks:

> sudo dsctl INSTANCE_NAME healthcheck \
  --check monitor-disk-space:disk_space tls:certificate_expiration
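To run a whole group of checks without typing each name, the list printed by --list-checks can be filtered by prefix. The following is a minimal sketch; the function name checks_with_prefix is hypothetical, and it assumes the check names are separated by whitespace or newlines as in the listing above.

```shell
#!/bin/sh
# Hypothetical helper: read "healthcheck --list-checks" output on stdin and
# print, on one line, the check names that start with the given prefix.
checks_with_prefix() {
    awk -v p="$1" '
        { for (i = 1; i <= NF; i++)
              if (index($i, p) == 1) out = out (out ? " " : "") $i }
        END { print out }
    '
}
```

For example, the output of sudo dsctl INSTANCE_NAME healthcheck --list-checks | checks_with_prefix backends: can be passed, unquoted, as the arguments of --check to run only the back-end checks.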

6.10.7 Making backups

When replication is enabled you need to adjust your 389 Directory Server backup strategy (see Section 6.4, “Backing up and restoring 389 Directory Server” to learn about making backups). If you are using db2ldif you must add the --replication flag to ensure that replication metadata is backed up. You should back up all servers in the topology. When restoring from backup, start by restoring a single node of the topology, then reinitialize all other nodes as new instances.

6.10.8 Pausing and resuming replication

You can pause replication during maintenance windows, or anytime you need to stop it. A node of the topology can only be offline for as long as the changelog retains its entries, which is limited by the changelog max-age setting (see Section 6.10.9, “Changelog max-age”).

Use the repl-agmt command to pause replication. The following example is on RW2:

> sudo dsconf INSTANCE_NAME repl-agmt disable \
  --suffix dc=EXAMPLE,dc=COM RW2_to_RW1

The following example re-enables replication:

> sudo dsconf INSTANCE_NAME repl-agmt enable \
  --suffix dc=EXAMPLE,dc=COM RW2_to_RW1
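A paused agreement that is never resumed is easy to overlook. The following sketch disables an agreement, runs a maintenance command, and enables the agreement again whether or not the command succeeded. The function name with_agmt_paused and the DSCONF variable are hypothetical; the dsconf subcommands are the two shown above.

```shell
#!/bin/sh
# Hypothetical helper: disable an agreement, run a maintenance command, and
# re-enable the agreement afterwards even if the command fails.
DSCONF="${DSCONF:-dsconf}"

with_agmt_paused() (
    instance="$1"; suffix="$2"; agmt="$3"; shift 3
    $DSCONF "$instance" repl-agmt disable --suffix "$suffix" "$agmt" || exit 1
    "$@"
    status=$?
    $DSCONF "$instance" repl-agmt enable --suffix "$suffix" "$agmt" || exit 1
    # Report the result of the maintenance command itself.
    exit "$status"
)
```

For example, with_agmt_paused INSTANCE_NAME dc=EXAMPLE,dc=COM RW2_to_RW1 ./maintenance.sh runs the (hypothetical) script maintenance.sh while the agreement is paused. The sketch does not handle interruption by a signal, so check the agreement state manually if the run is aborted.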

6.10.9 Changelog max-age

A replica can be offline for up to the length of time defined by the changelog max-age option. max-age defines the maximum age of any entry in the changelog. Any items older than the max-age value are automatically removed.

After the replica comes back online it will synchronize with the other replicas. If it is offline for longer than the max-age value, the replica will need to be re-initialised, and will refuse to accept or provide changes to other nodes, as they may be inconsistent. The following example sets the max-age to seven days:

> sudo dsconf INSTANCE_NAME \
  replication set-changelog --max-age 7d \
  --suffix dc=EXAMPLE,dc=COM
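When planning an outage, compare its expected length with the configured max-age. The following sketch does the arithmetic; the function names are hypothetical, and the unit suffixes s, m, h, d, and w are an assumption modeled on the 7d value used above.

```shell
#!/bin/sh
# Hypothetical helper: convert a duration such as 7d, 36h, or 90m to seconds.
# Assumed suffixes: s, m, h, d, w; a bare number is taken as seconds.
to_seconds() (
    value="${1%[smhdw]}"
    unit="${1#"$value"}"
    case "$unit" in
        s|"") echo "$value" ;;
        m) echo $((value * 60)) ;;
        h) echo $((value * 3600)) ;;
        d) echo $((value * 86400)) ;;
        w) echo $((value * 604800)) ;;
    esac
)

# Succeed if a planned outage is shorter than the changelog max-age.
outage_fits_changelog() {
    [ "$(to_seconds "$1")" -lt "$(to_seconds "$2")" ]
}
```

For example, outage_fits_changelog 36h 7d succeeds, because 36 hours (129600 seconds) is less than seven days (604800 seconds), whereas outage_fits_changelog 8d 7d fails, which means the replica would have to be re-initialized.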

6.10.10 Removing a replica

To remove a replica, first fence the node to prevent any incoming changes or reads. Then find all servers that have replication agreements pointing to the node you are removing, and delete those agreements. The following example removes RW2. Start by deleting the outbound replication agreement on RW1:

> sudo dsconf INSTANCE_NAME repl-agmt delete \
  --suffix dc=EXAMPLE,dc=COM RW1_to_RW2

On the replica you are removing, which in the following example is RW2, remove all outbound agreements:

> sudo dsconf INSTANCE_NAME repl-agmt delete \
  --suffix dc=EXAMPLE,dc=COM RW2_to_RW1
> sudo dsconf INSTANCE_NAME repl-agmt delete \
  --suffix dc=EXAMPLE,dc=COM RW2_to_RO3

Stop the instance on RW2:

> sudo systemctl stop dirsrv@INSTANCE_NAME.service

Then run the cleanallruv command to remove the replica ID from the topology. The following example is run on RW1:

> sudo dsconf INSTANCE_NAME repl-tasks cleanallruv \
  --suffix dc=EXAMPLE,dc=COM --replica-id 2
> sudo dsconf INSTANCE_NAME repl-tasks list-cleanruv-tasks

6.11 Synchronizing with Microsoft Active Directory

389 Directory Server supports synchronizing some user and group content from Microsoft's Active Directory, so that Linux clients can use 389 DS for their identity information without the normally required domain join process. This also allows 389 DS to extend and use its other features with the data synchronised from Active Directory.

6.11.1 Planning your synchronization topology

Due to how the synchronization works, only a single 389 Directory Server instance and a single Active Directory server are involved. The Active Directory server must be a full Domain Controller, and not a Read Only Domain Controller (RODC). The Global Catalog is not required on the DC that is synchronized, as 389 DS only replicates the content of a single domain in a forest.

You must first choose the direction of your data flow. There are three options: from AD to 389 DS, from 389 DS to AD, or bi-directional.

Note: No password synchronization

Passwords cannot be synchronised between 389 DS and Active Directory. This may change in the future, to support Active Directory to 389 DS password flow.

Your topology will look like the following diagram. The 389 Directory Server and Active Directory topologies may differ, but the most important factor is to have only a single connection between 389 DS and Active Directory. It is very important to account for this in your disaster recovery and backup plans for both 389 DS and AD, to ensure that you correctly restore only a single replication connection between these topologies.

┌────────┐     ┌────────┐         ┌────────┐     ┌────────┐
│        │     │        │         │        │     │        │
│ 389-ds │◀───▶│ 389-ds │◀ ─ ─ ─ ▶│   AD   │◀───▶│   AD   │
│        │     │        │         │        │     │        │
└────────┘     └────────┘         └────────┘     └────────┘
    ▲              ▲                  ▲              ▲
    │              │                  │              │
    ▼              ▼                  ▼              ▼
┌────────┐     ┌────────┐         ┌────────┐     ┌────────┐
│        │     │        │         │        │     │        │
│ 389-ds │◀───▶│ 389-ds │         │   AD   │◀───▶│   AD   │
│        │     │        │         │        │     │        │
└────────┘     └────────┘         └────────┘     └────────┘

6.11.2 Prerequisites for Active Directory

A security group that is granted the "Replicating Directory Changes" permission is required. For example, create a group named "Directory Server Sync". Follow the steps in "How to grant the 'Replicating Directory Changes' permission for the Microsoft Metadirectory Services ADMA service account" (https://docs.microsoft.com/en-US/troubleshoot/windows-server/windows-security/grant-replicating-directory-changes-permission-adma-service) to set this up.

Warning: Strong security needed

You should consider members of this group to be of equivalent security importance to Domain Administrators. Members of this group have the ability to read sensitive content from the Active Directory environment, so you should use strong, randomly-generated service account passwords for these accounts, and carefully audit membership to this group.

You should also create a service account that is a member of this group.

Your Active Directory environment must have certificates configured for LDAPS to ensure that authentication between 389 DS and AD is secure. Authentication with Generic Security Services API/Kerberos (GSSAPI/KRB) cannot be used.

6.11.3 Prerequisites for 389 Directory Server

The 389 Directory Server instance must have a backend database already configured with Organizational Units (OUs) for entries to be synchronised into.

The 389 Directory Server instance must have a replica ID configured as though the server is a read-write replica. (For details about setting up replication, see Section 6.10, “Setting up replication”.)

6.11.4 Creating an agreement from Active Directory to 389 Directory Server

The following example command, which is run on the 389 Directory Server server, creates a replication agreement from Active Directory to 389 Directory Server:

> sudo dsconf INSTANCE-NAME repl-winsync-agmt create --suffix dc=example,dc=com \
  --host AD-HOSTNAME --port 636 --conn-protocol LDAPS \
  --bind-dn "cn=SERVICE-ACCOUNT,cn=USERS,dc=AD,dc=EXAMPLE,dc=COM" \
  --bind-passwd "PASSWORD" --win-subtree "cn=USERS,dc=AD,dc=EXAMPLE,dc=COM" \
  --ds-subtree ou=AD,dc=EXAMPLE,dc=COM --one-way-sync fromWindows \
  --sync-users=on --sync-groups=on --move-action delete \
  --win-domain AD-DOMAIN adsync_agreement

Once the agreement has been created, you must perform an initial resynchronization:

> sudo dsconf INSTANCE-NAME repl-winsync-agmt init --suffix dc=example,dc=com adsync_agreement

Use the following command to check the status of the initialization:

> sudo dsconf INSTANCE-NAME repl-winsync-agmt init-status --suffix dc=example,dc=com adsync_agreement

Note: Some entries are not synchronized

In some cases, an entry may not be synchronized, even if the init status reports success. Check your 389 DS log files in /var/log/dirsrv/slapd-INSTANCE-NAME/errors.

Check the status of the agreement with the following command:

> sudo dsconf INSTANCE-NAME repl-winsync-agmt status --suffix dc=example,dc=com adsync_agreement

When you are performing maintenance on the Active Directory or 389 Directory Server topology, you can pause the agreement with the following command:

> sudo dsconf INSTANCE-NAME repl-winsync-agmt disable --suffix dc=example,dc=com adsync_agreement

Resume the agreement with the following command:

> sudo dsconf INSTANCE-NAME repl-winsync-agmt enable --suffix dc=example,dc=com adsync_agreement

6.12 More information

For more information about 389 Directory Server, see:

The upstream documentation at https://www.port389.org/docs/389ds/documentation.html.

man dsconf

man dsctl

man dsidm

man dscreate