Virtualization Guide


8 Preparing the VM Host Server

Before you can install guest virtual machines, you need to prepare the VM Host Server to provide the guests with the resources that they need for their operation. Specifically, you need to configure:

- Networking, so that guests can use the network connection provided by the host.

- A storage pool reachable from the host, so that the guests can store their disk images.

8.1 Configuring networks

There are two common network configurations to provide a VM Guest with a network connection:

- A network bridge. This is the default and recommended way of providing the guests with a network connection.

- A virtual network with forwarding enabled.

8.1.1 Network bridge

The network bridge configuration provides a Layer 2 switch for VM Guests, switching Layer 2 Ethernet frames between ports on the bridge based on the MAC addresses associated with the ports. This gives the VM Guest Layer 2 access to the VM Host Server's network. This configuration is analogous to plugging the VM Guest's virtual Ethernet cable into a hub that is shared with the host and other VM Guests running on the host. The configuration is often referred to as shared physical device.

The network bridge configuration is the default configuration of openSUSE Leap when configured as a KVM or Xen hypervisor. It is the preferred configuration when you simply want to connect VM Guests to the VM Host Server's LAN.

Which tool to use to create the network bridge depends on the service you use to manage the network connection on the VM Host Server:

- If the network connection is managed by wicked, use either YaST or the command line to create the network bridge. wicked is the default on server hosts.

- If the network connection is managed by NetworkManager, use the NetworkManager command line tool nmcli to create the network bridge. NetworkManager is the default on desktops and laptops.

8.1.1.1 Managing network bridges with YaST

This section includes procedures to add or remove network bridges with YaST.

8.1.1.1.1 Adding a network bridge

To add a network bridge on VM Host Server, follow these steps:

Start YaST › System › Network Settings.

Activate the Overview tab and click Add.

Select Bridge from the Device Type list and enter the bridge device interface name in the Configuration Name entry. Click the Next button to proceed.

In the Address tab, specify networking details such as DHCP/static IP address, subnet mask or host name.

Using Dynamic Address is only useful when also assigning a device to a bridge that is connected to a DHCP server.

If you intend to create a virtual bridge that has no connection to a real network device, use Statically assigned IP Address. In this case, it is a good idea to use addresses from the private IP address ranges, for example, 192.168.0.0/16, 172.16.0.0/12, or 10.0.0.0/8.

To create a bridge that should only serve as a connection between the different guests without connection to the host system, set the IP address to 0.0.0.0 and the subnet mask to 255.255.255.255. The network scripts handle this special address as an unset IP address.

Activate the Bridged Devices tab and activate the network devices you want to include in the network bridge.

Click Next to return to the Overview tab and confirm with OK. The new network bridge should now be active on VM Host Server.

8.1.1.1.2 Deleting a network bridge

To delete an existing network bridge, follow these steps:

Start YaST › System › Network Settings.

Select the bridge device you want to delete from the list in the Overview tab.

Delete the bridge with Delete and confirm with OK.

8.1.1.2 Managing network bridges from the command line

This section includes procedures to add or remove network bridges using the command line.

8.1.1.2.1 Adding a network bridge

To add a new network bridge device on VM Host Server, follow these steps:

Log in as root on the VM Host Server where you want to create a new network bridge.

Choose a name for the new bridge (virbr_test in our example) and run:

# ip link add name virbr_test type bridge

Check if the bridge was created on VM Host Server:

# bridge vlan
[...]
virbr_test  1 PVID Egress Untagged

virbr_test is present, but is not associated with any physical network interface.

Bring the network bridge up and add a network interface to the bridge:

# ip link set virbr_test up
# ip link set eth1 master virbr_test

Important: Network interface must be unused

You can only assign a network interface that is not yet used by another network bridge.

Optionally, enable STP (see Spanning Tree Protocol):

# ip link set dev virbr_test type bridge stp_state 1
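The command-line steps above can be collected into a small script. The following is a minimal sketch, assuming a POSIX shell and iproute2. The function names create_bridge and run and the DRY_RUN variable are hypothetical conveniences introduced here; they are not part of any SUSE tool. With DRY_RUN=1 the script only prints the commands, so you can review them before running them as root. A bridge created with ip is not persistent across reboots.

```shell
#!/bin/sh
# Print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Hypothetical helper: create_bridge BRIDGE IFACE [stp]
create_bridge() {
  local bridge="$1" iface="$2" stp="${3:-}"
  run ip link add name "$bridge" type bridge || return 1
  run ip link set "$bridge" up || return 1
  # IFACE must not already be a port of another bridge.
  run ip link set "$iface" master "$bridge" || return 1
  if [ "$stp" = stp ]; then
    run ip link set dev "$bridge" type bridge stp_state 1
  fi
}

# Example: review first, then run as root.
#   DRY_RUN=1 create_bridge virbr_test eth1 stp
#   create_bridge virbr_test eth1 stp
```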

8.1.1.2.2 Deleting a network bridge

To delete an existing network bridge device on VM Host Server from the command line, follow these steps:

Log in as root on the VM Host Server where you want to delete an existing network bridge.

List existing network bridges to identify the name of the bridge to remove:

# bridge vlan
[...]
virbr_test  1 PVID Egress Untagged

Delete the bridge:

# ip link delete dev virbr_test

8.1.1.3 Adding a network bridge with nmcli

This section includes procedures to add a network bridge with NetworkManager's command line tool nmcli.

List active network connections:

> sudo nmcli connection show --active
NAME                   UUID                                  TYPE      DEVICE
Ethernet connection 1  84ba4c22-0cfe-46b6-87bb-909be6cb1214  ethernet  eth0

Add a new bridge device named br0 and verify its creation:

> sudo nmcli connection add type bridge ifname br0
Connection 'bridge-br0' (36e11b95-8d5d-4a8f-9ca3-ff4180eb89f7) successfully added.
> sudo nmcli connection show --active
NAME                   UUID                                  TYPE      DEVICE
bridge-br0             36e11b95-8d5d-4a8f-9ca3-ff4180eb89f7  bridge    br0
Ethernet connection 1  84ba4c22-0cfe-46b6-87bb-909be6cb1214  ethernet  eth0

Optionally, you can view the bridge settings:

> sudo nmcli -f bridge connection show bridge-br0
bridge.mac-address:                     --
bridge.stp:                             yes
bridge.priority:                        32768
bridge.forward-delay:                   15
bridge.hello-time:                      2
bridge.max-age:                         20
bridge.ageing-time:                     300
bridge.group-forward-mask:              0
bridge.multicast-snooping:              yes
bridge.vlan-filtering:                  no
bridge.vlan-default-pvid:               1
bridge.vlans:                           --

Link the bridge device to the physical Ethernet device eth0:

> sudo nmcli connection add type bridge-slave ifname eth0 master br0

Disable the eth0 interface and enable the new bridge:

> sudo nmcli connection down "Ethernet connection 1"
> sudo nmcli connection up bridge-br0
Connection successfully activated (master waiting for slaves) (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/9)
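The nmcli steps can be scripted as well. This is a sketch under stated assumptions. The function name bridge_with_nmcli is hypothetical. The connection that currently owns the interface is looked up from nmcli's terse output (-t -f NAME,DEVICE), and connection names that contain a colon are not handled. If you are logged in to the host over the interface you are bridging, expect a short interruption when the old connection goes down.

```shell
#!/bin/sh
# Hypothetical helper: move the active connection of IFACE onto a new bridge.
# Usage (as root): bridge_with_nmcli br0 eth0
bridge_with_nmcli() {
  local bridge="$1" iface="$2" old_con
  # Terse output prints one "NAME:DEVICE" pair per line.
  # Assumption: the connection name itself contains no colon.
  old_con="$(nmcli -t -f NAME,DEVICE connection show --active |
    awk -F: -v dev="$iface" '$2 == dev { print $1; exit }')"
  if [ -z "$old_con" ]; then
    echo "no active connection found on $iface" >&2
    return 1
  fi
  # Create the bridge and attach the physical interface as a port.
  nmcli connection add type bridge ifname "$bridge" con-name "bridge-$bridge" || return 1
  nmcli connection add type bridge-slave ifname "$iface" master "$bridge" || return 1
  # Switch over: deactivate the old connection, then activate the bridge.
  nmcli connection down "$old_con" || return 1
  nmcli connection up "bridge-$bridge"
}
```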

8.1.1.4 Using VLAN interfaces

Sometimes it is necessary to create a private connection either between two VM Host Servers or between VM Guest systems, for example, to migrate a VM Guest to hosts in a different network segment, or to create a private bridge that only VM Guest systems may connect to (even when running on different VM Host Server systems). An easy way to build such connections is to set up VLAN networks.

VLAN interfaces are commonly set up on the VM Host Server. They either interconnect the different VM Host Server systems, or they may be set up as a physical interface to an otherwise virtual-only bridge. It is even possible to create a bridge with a VLAN as a physical interface that has no IP address in the VM Host Server. That way, the guest systems cannot access the host over this network.

Run the YaST module System › Network Settings. Follow this procedure to set up the VLAN device:

Procedure 8.1: Setting up VLAN interfaces with YaST

Click Add to create a new network interface.

In the Hardware Dialog, select Device Type VLAN.

Change the value of Configuration Name to the ID of your VLAN. Be aware that VLAN ID 1 is commonly used for management purposes.

Click Next.

Select the interface that the VLAN device should connect to below Real Interface for VLAN. If the desired interface does not appear in the list, first set up this interface without an IP address.

Select the desired method for assigning an IP address to the VLAN device.

Click Next to finish the configuration.

It is also possible to use the VLAN interface as a physical interface of a bridge. This makes it possible to connect several VM Host Server-only networks and allows live migration of VM Guest systems that are connected to such a network.

YaST does not always allow setting no IP address. However, this may be a desired feature, especially if VM Host Server-only networks should be connected. In this case, use the special address 0.0.0.0 with netmask 255.255.255.255. The system scripts handle this address as no IP address set.
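If you manage interfaces from the command line rather than with YaST, you can build the same VLAN-backed bridge with iproute2. This is a minimal sketch. The parent interface eth0, VLAN ID 100 and bridge br100 are example values, and the helper names are hypothetical. The bridge gets no IP address on the host, so guests on it cannot reach the host over this network. As with any ip configuration, the result does not persist across reboots.

```shell
#!/bin/sh
# Print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

# Hypothetical helper: vlan_bridge PARENT VLAN_ID BRIDGE
# Creates PARENT.VLAN_ID and makes it the only port of BRIDGE (no host IP).
vlan_bridge() {
  local parent="$1" vlan_id="$2" bridge="$3"
  local vlan_if="$parent.$vlan_id"   # interface names are limited to 15 characters
  run ip link add link "$parent" name "$vlan_if" type vlan id "$vlan_id" || return 1
  run ip link add name "$bridge" type bridge || return 1
  run ip link set "$vlan_if" master "$bridge" || return 1
  run ip link set "$vlan_if" up || return 1
  run ip link set "$bridge" up
}

# Example (as root): DRY_RUN=1 vlan_bridge eth0 100 br100
```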

8.1.2 Virtual networks

libvirt-managed virtual networks are similar to bridged networks, but typically have no Layer 2 connection to the VM Host Server. Connectivity to the VM Host Server's physical network is accomplished with Layer 3 forwarding, which introduces additional packet processing on the VM Host Server as compared to a Layer 2 bridged network. Virtual networks also provide DHCP and DNS services for VM Guests. For more information on libvirt virtual networks, see the Network XML format documentation at https://libvirt.org/formatnetwork.html.

A standard libvirt installation on openSUSE Leap already comes with a predefined virtual network named default. It provides DHCP and DNS services for the network, along with connectivity to the VM Host Server's physical network using the network address translation (NAT) forwarding mode. Although it is predefined, the default virtual network needs to be explicitly enabled by the administrator. For more information on the forwarding modes supported by libvirt, see the Connectivity section of the Network XML format documentation at https://libvirt.org/formatnetwork.html#elementsConnect.

libvirt-managed virtual networks can be used to satisfy a wide range of use cases, but are commonly used on VM Host Servers that have a wireless connection or dynamic/sporadic network connectivity, such as laptops. Virtual networks are also useful when the VM Host Server's network has limited IP addresses, allowing forwarding of packets between the virtual network and the VM Host Server's network. However, most server use cases are better suited for the network bridge configuration, where VM Guests are connected to the VM Host Server's LAN.

Warning: Enabling forwarding mode

Enabling forwarding mode in a libvirt virtual network enables forwarding in the VM Host Server by setting /proc/sys/net/ipv4/ip_forward and /proc/sys/net/ipv6/conf/all/forwarding to 1, which turns the VM Host Server into a router. Restarting the VM Host Server's network may reset the values and disable forwarding. To avoid this behavior, explicitly enable forwarding in the VM Host Server by editing the /etc/sysctl.conf file and adding:

net.ipv4.ip_forward = 1
net.ipv6.conf.all.forwarding = 1
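You can make the edit described in the warning idempotent, so that repeated runs do not add duplicate lines. This is a minimal sketch, assuming a POSIX shell. The function name ensure_forwarding_conf is hypothetical, and the optional file path argument lets you try it on a scratch copy first. Running sysctl -p afterward loads /etc/sysctl.conf without a reboot.

```shell
#!/bin/sh
# Hypothetical helper: make sure both forwarding settings are present in a
# sysctl configuration file (default: /etc/sysctl.conf) without duplicating them.
ensure_forwarding_conf() {
  local conf="${1:-/etc/sysctl.conf}" setting
  touch "$conf" || return 1
  for setting in "net.ipv4.ip_forward = 1" "net.ipv6.conf.all.forwarding = 1"; do
    # Only exact line matches count: "net.ipv4.ip_forward=1" without spaces
    # would not be recognized, and an equivalent line would be appended.
    grep -qxF "$setting" "$conf" || printf '%s\n' "$setting" >> "$conf" || return 1
  done
}

# Example (as root): apply the settings now instead of waiting for a reboot.
#   ensure_forwarding_conf && sysctl -p
```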

8.1.2.1 Managing virtual networks with Virtual Machine Manager #Edit source

You can define, configure and operate virtual networks with Virtual Machine Manager.

8.1.2.1.1 Defining virtual networks #Edit source

Start Virtual Machine Manager. In the list of available connections, right-click the name of the connection for which you need to configure the virtual network, and then select .

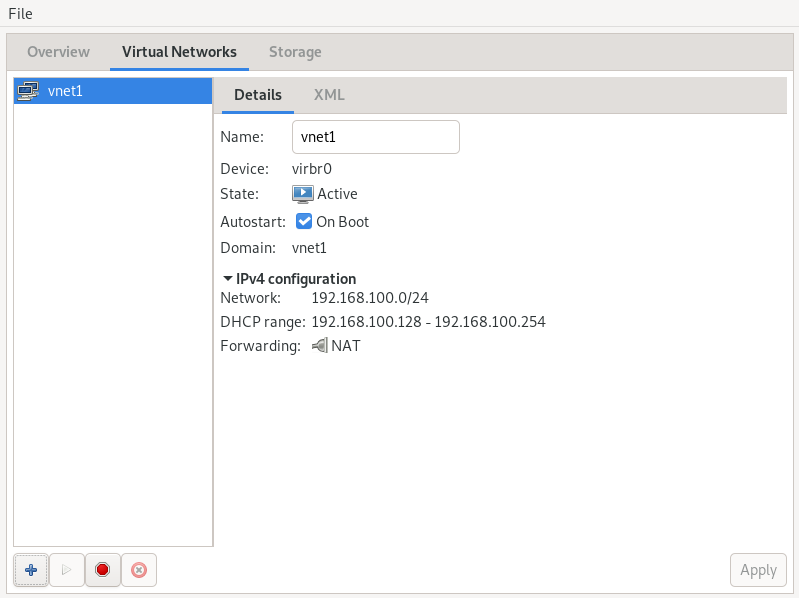

In the window, click the tab. You can see the list of all virtual networks available for the current connection. On the right, there are details of the selected virtual network.

Figure 8.1: Connection details #

To add a new virtual network, click .

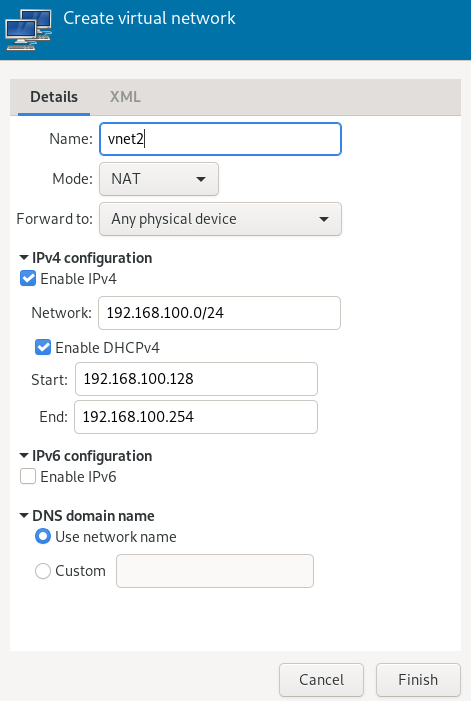

Specify a name for the new virtual network.

Figure 8.2: Create virtual network #

Specify the networking mode. For the and types, you can specify to which device to forward network communications. While (network address translation) remaps the virtual network address space and allows sharing a single IP address, forwards packets from the virtual network to the VM Host Server's physical network with no translation.

If you need IPv4 networking, activate and specify the IPv4 network address. If you need a DHCP server, activate and specify the assignable IP address range.

If you need IPv6 networking, activate and specify the IPv6 network address. If you need a DHCP server, activate and specify the assignable IP address range.

To specify a different domain name than the name of the virtual network, select under and enter it here.

Click to create the new virtual network. On the VM Host Server, a new virtual network bridge

virbrXis available, which corresponds to the newly created virtual network. You can check withbridge link.libvirtautomatically adds iptables rules to allow traffic to/from guests attached to the new virbrX device.

8.1.2.1.2 Starting virtual networks

To start a virtual network that is temporarily stopped, follow these steps:

Start Virtual Machine Manager. In the list of available connections, right-click the name of the connection for which you need to configure the virtual network, and then select Details.

In the Connection Details window, click the Virtual Networks tab. You can see the list of all virtual networks available for the current connection.

To start the virtual network, click Start.

8.1.2.1.3 Stopping virtual networks

To stop an active virtual network, follow these steps:

Start Virtual Machine Manager. In the list of available connections, right-click the name of the connection for which you need to configure the virtual network, and then select Details.

In the Connection Details window, click the Virtual Networks tab. You can see the list of all virtual networks available for the current connection.

Select the virtual network to be stopped, then click Stop.

8.1.2.1.4 Deleting virtual networks

To delete a virtual network from VM Host Server, follow these steps:

Start Virtual Machine Manager. In the list of available connections, right-click the name of the connection for which you need to configure the virtual network, and then select Details.

In the Connection Details window, click the Virtual Networks tab. You can see the list of all virtual networks available for the current connection.

Select the virtual network to be deleted, then click Delete.

8.1.2.1.5 Obtaining IP addresses with nsswitch for NAT networks (in KVM)

On VM Host Server, install libvirt-nss, which provides NSS support for libvirt:

> sudo zypper in libvirt-nss

Add libvirt to /etc/nsswitch.conf:

...
hosts:  files libvirt mdns_minimal [NOTFOUND=return] dns
...

If NSCD is running, restart it:

> sudo systemctl restart nscd

Now you can reach the guest system by name from the host.

The NSS module has limited functionality. It reads /var/lib/libvirt/dnsmasq/*.status files to find the host name and corresponding IP addresses in a JSON record describing each lease provided by dnsmasq. Host name translation can only be done on those VM Host Servers using a libvirt-managed bridged network backed by dnsmasq.
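You can also script the /etc/nsswitch.conf change. This sketch assumes GNU sed (for -i and -E) and a hosts line that starts with files, as in the example above. The function name add_libvirt_nss is hypothetical. It inserts libvirt directly after files, does nothing if the module is already listed, and returns a non-zero status if it could not add the module. Back up the file before running it against /etc/nsswitch.conf.

```shell
#!/bin/sh
# Hypothetical helper: add the libvirt NSS module to the hosts line.
add_libvirt_nss() {
  local conf="${1:-/etc/nsswitch.conf}"
  # Nothing to do if the hosts line already lists the libvirt module.
  if grep -Eq '^hosts:.*[[:space:]]libvirt([[:space:]]|$)' "$conf"; then
    return 0
  fi
  # Insert "libvirt" right after "files" on the hosts line.
  sed -i -E 's/^(hosts:[[:space:]]+files)([[:space:]]|$)/\1 libvirt\2/' "$conf"
  # Fail if the hosts line did not start with "files" and nothing changed.
  grep -Eq '^hosts:.*[[:space:]]libvirt([[:space:]]|$)' "$conf"
}

# Example (as root): cp /etc/nsswitch.conf /etc/nsswitch.conf.bak && add_libvirt_nss
```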

8.1.2.2 Managing virtual networks with virsh

You can manage libvirt-provided virtual networks with the virsh command line tool. To view all network related virsh commands, run:

> sudo virsh help network
 Networking (help keyword 'network'):
    net-autostart                  autostart a network
    net-create                     create a network from an XML file
    net-define                     define (but don't start) a network from an XML file
    net-destroy                    destroy (stop) a network
    net-dumpxml                    network information in XML
    net-edit                       edit XML configuration for a network
    net-event                      Network Events
    net-info                       network information
    net-list                       list networks
    net-name                       convert a network UUID to network name
    net-start                      start a (previously defined) inactive network
    net-undefine                   undefine an inactive network
    net-update                     update parts of an existing network's configuration
    net-uuid                       convert a network name to network UUID

To view brief help information for a specific virsh command, run virsh help VIRSH_COMMAND:

> sudo virsh help net-create
  NAME
    net-create - create a network from an XML file

  SYNOPSIS
    net-create <file>

  DESCRIPTION
    Create a network.

  OPTIONS
    [--file] <string>  file containing an XML network description

8.1.2.2.1 Creating a network

To create and start a new transient virtual network (one that is removed when it is stopped), run:

> sudo virsh net-create VNET_DEFINITION.xml

The VNET_DEFINITION.xml XML file includes the definition of the virtual network that libvirt accepts.

To define a new persistent virtual network without activating it, run:

> sudo virsh net-define VNET_DEFINITION.xml

The following examples illustrate definitions of different types of virtual networks.

Example 8.1: NAT-based network

The following configuration allows VM Guests outgoing connectivity if it is available on the VM Host Server. Without VM Host Server networking, it allows guests to talk directly to each other.

<network>
  <name>vnet_nated</name>
  <bridge name="virbr1"/>
  <forward mode="nat"/>
  <ip address="192.168.122.1" netmask="255.255.255.0">
    <dhcp>
      <range start="192.168.122.2" end="192.168.122.254"/>
      <host mac="52:54:00:c7:92:da" name="host1.testing.com" ip="192.168.122.101"/>
      <host mac="52:54:00:c7:92:db" name="host2.testing.com" ip="192.168.122.102"/>
      <host mac="52:54:00:c7:92:dc" name="host3.testing.com" ip="192.168.122.103"/>
    </dhcp>
  </ip>
</network>

- <name>: The name of the new virtual network.

- <bridge name>: The name of the bridge device used to construct the virtual network. When defining a new network with a <forward> mode of nat or route (or an isolated network with no <forward> element), libvirt automatically generates a unique name for the bridge device if none is given.

- <forward>: Inclusion of the <forward> element indicates that the virtual network is connected to the physical LAN. The mode attribute specifies the forwarding method, for example nat, route, or bridge. If the <forward> element is omitted, the virtual network is isolated from other networks.

- <ip>: The IP address and netmask for the network bridge.

- <dhcp> and <range>: Enable DHCP server for the virtual network, offering IP addresses ranging from the specified start and end attributes.

- <host>: The optional <host> elements specify hosts that are given names and predefined IP addresses by the built-in DHCP server. The addresses must lie within the network defined by the <ip> element. Any IPv4 host element must specify the following: the MAC address of the host to be assigned a given name, the IP to be assigned to that host, and the name to be given to that host by the DHCP server. An IPv6 host element differs slightly from that for IPv4: there is no mac attribute, because a MAC address has no defined meaning in IPv6. Instead, the name attribute identifies the host to be assigned the IPv6 address.
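Writing the XML by hand is error-prone when you need several similar networks. The following sketch prints a NAT network definition in the style of Example 8.1 for a /24 subnet. The function name and its three arguments are hypothetical, and DHCP host entries are left out for brevity. If virt-xml-validate is installed, you can check the generated file against libvirt's schema before defining it.

```shell
#!/bin/sh
# Hypothetical helper: print a NAT-mode libvirt network definition.
# Arguments: NAME BRIDGE PREFIX, where PREFIX holds the first three octets
# of a /24 subnet (for example 192.168.50); the host side gets PREFIX.1.
nat_network_xml() {
  local name="$1" bridge="$2" prefix="$3"
  cat <<EOF
<network>
  <name>${name}</name>
  <bridge name="${bridge}"/>
  <forward mode="nat"/>
  <ip address="${prefix}.1" netmask="255.255.255.0">
    <dhcp>
      <range start="${prefix}.2" end="${prefix}.254"/>
    </dhcp>
  </ip>
</network>
EOF
}

# Example usage (as root):
#   nat_network_xml vnet_test virbr10 192.168.50 > vnet_test.xml
#   virt-xml-validate vnet_test.xml network
#   virsh net-define vnet_test.xml
#   virsh net-start vnet_test
#   virsh net-autostart vnet_test
```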

Example 8.2: Routed network

The following configuration routes traffic from the virtual network to the LAN without applying any NAT. The IP address range must be preconfigured in the routing tables of the router on the VM Host Server network.

<network>
  <name>vnet_routed</name>
  <bridge name="virbr1"/>
  <forward mode="route" dev="eth1"/>
  <ip address="192.168.122.1" netmask="255.255.255.0">
    <dhcp>
      <range start="192.168.122.2" end="192.168.122.254"/>
    </dhcp>
  </ip>
</network>

- <forward mode="route" dev="eth1"/>: The guest traffic may only go out via the eth1 network device on the VM Host Server.

Example 8.3: Isolated network

This configuration provides an isolated private network. The guests can talk to each other, and to VM Host Server, but cannot reach any other machines on the LAN, as the <forward> element is missing in the XML description.

<network>
  <name>vnet_isolated</name>
  <bridge name="virbr3"/>
  <ip address="192.168.152.1" netmask="255.255.255.0">
    <dhcp>
      <range start="192.168.152.2" end="192.168.152.254"/>
    </dhcp>
  </ip>
</network>

Example 8.4: Using an existing bridge on VM Host Server

This configuration shows how to use an existing VM Host Server's network bridge br0. VM Guests are directly connected to the physical network. Their IP addresses are all on the subnet of the physical network, and there are no restrictions on incoming or outgoing connections.

<network>
  <name>host-bridge</name>
  <forward mode="bridge"/>
  <bridge name="br0"/>
</network>

8.1.2.2.2 Listing networks

To list all virtual networks available to libvirt, run:

> sudo virsh net-list --all
 Name            State      Autostart   Persistent
----------------------------------------------------------
 crowbar         active     yes         yes
 vnet_nated      active     yes         yes
 vnet_routed     active     yes         yes
 vnet_isolated   inactive   yes         yes

To list running domains, run:

> sudo virsh list
 Id    Name              State
----------------------------------------------------
 1     nated_sles12sp3   running
 ...

To get a list of interfaces of a running domain, run virsh domifaddr DOMAIN, or optionally specify the interface to limit the output to this interface. The output also includes the MAC and IP addresses of each interface:

> sudo virsh domifaddr nated_sles12sp3 --interface vnet0 --source lease
 Name       MAC address          Protocol     Address
-------------------------------------------------------------------------------
 vnet0      52:54:00:9e:0d:2b    ipv6         fd00:dead:beef:55::140/64
 -          -                    ipv4         192.168.100.168/24
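When scripting against guests, you often need only the IPv4 address from this output, for example to open an SSH session. This is a small sketch, assuming the column layout shown above, with the protocol in the third column and the address with its prefix length in the fourth. The function name guest_ipv4 is hypothetical. As in the example, --source lease relies on the libvirt-managed DHCP server.

```shell
#!/bin/sh
# Hypothetical helper: print the IPv4 address(es) of a domain without the prefix length.
# Assumes the domifaddr column layout shown above.
guest_ipv4() {
  virsh domifaddr "$1" --source lease |
    awk '$3 == "ipv4" { sub(/\/.*/, "", $4); print $4 }'
}

# Example: ssh root@"$(guest_ipv4 nated_sles12sp3)"
```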

To print brief information of all virtual interfaces associated with the specified domain, run:

> sudo virsh domiflist nated_sles12sp3
 Interface   Type      Source       Model    MAC
---------------------------------------------------------
 vnet0       network   vnet_nated   virtio   52:54:00:9e:0d:2b

8.1.2.2.3 Getting details about a network

To get detailed information about a network, run:

> sudo virsh net-info vnet_routed
Name:           vnet_routed
UUID:           756b48ff-d0c6-4c0a-804c-86c4c832a498
Active:         yes
Persistent:     yes
Autostart:      yes
Bridge:         virbr5

8.1.2.2.4 Starting a network

To start an inactive network that was already defined, find its name (or unique identifier, UUID) with:

> sudo virsh net-list --inactive
 Name            State      Autostart   Persistent
----------------------------------------------------------
 vnet_isolated   inactive   yes         yes

Then run:

> sudo virsh net-start vnet_isolated
Network vnet_isolated started
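To start all defined but inactive networks at once, you can combine the two steps in a loop. This sketch relies on the --name option of virsh net-list, which prints only network names, one per line. The function name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: start every defined network that is not running.
start_inactive_networks() {
  virsh net-list --inactive --name | while IFS= read -r net; do
    # virsh ends the name list with an empty line.
    [ -n "$net" ] || continue
    virsh net-start "$net" < /dev/null
  done
}

# Example (as root): start_inactive_networks
```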

8.1.2.2.5 Stopping a network

To stop an active network, find its name (or unique identifier, UUID) with:

> sudo virsh net-list
 Name            State    Autostart   Persistent
----------------------------------------------------------
 vnet_isolated   active   yes         yes

Then run:

> sudo virsh net-destroy vnet_isolated
Network vnet_isolated destroyed

8.1.2.2.6 Removing a network

To remove the definition of an inactive network from VM Host Server permanently, run:

> sudo virsh net-undefine vnet_isolated
Network vnet_isolated has been undefined

8.2 Configuring a storage pool

When managing a VM Guest on the VM Host Server itself, you can access the complete file system of the VM Host Server to attach or create virtual hard disks or to attach existing images to the VM Guest. However, this is not possible when managing VM Guests from a remote host. For this reason, libvirt supports so-called “Storage Pools”, which can be accessed from remote machines.

Tip: CD/DVD ISO images

To be able to access CD/DVD ISO images on the VM Host Server from remote clients, they also need to be placed in a storage pool.

libvirt knows two different types of storage: volumes and pools.

- Storage volume

A storage volume is a storage device that can be assigned to a guest—a virtual disk or a CD/DVD/floppy image. Physically, it can be a block device—for example, a partition or a logical volume—or a file on the VM Host Server.

- Storage pool

A storage pool is a storage resource on the VM Host Server that can be used for storing volumes, similar to network storage for a desktop machine. Physically it can be one of the following types:

- File system directory (dir)

A directory for hosting image files. The files can be either one of the supported disk formats (raw or qcow2), or ISO images.

- Physical disk device (disk)

Use a complete physical disk as storage. A partition is created for each volume that is added to the pool.

- Pre-formatted block device (fs)

Specify a partition to be used in the same way as a file system directory pool (a directory for hosting image files). The only difference to using a file system directory is that libvirt takes care of mounting the device.

- iSCSI target (iscsi)

Set up a pool on an iSCSI target. You need to have been logged in to the volume once before to use it with libvirt. Use the YaST iSCSI Initiator to detect and log in to a volume. Volume creation on iSCSI pools is not supported; instead, each existing Logical Unit Number (LUN) represents a volume. Each volume/LUN also needs a valid (empty) partition table or disk label before you can use it. If missing, use fdisk to add it:

> sudo fdisk -cu /dev/disk/by-path/ip-192.168.2.100:3260-iscsi-iqn.2010-10.com.example:[...]-lun-2
Device contains neither a valid DOS partition table, nor Sun, SGI
or OSF disklabel
Building a new DOS disklabel with disk identifier 0xc15cdc4e.
Changes will remain in memory only, until you decide to write them.
After that, of course, the previous content won't be recoverable.

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): w
The partition table has been altered!

Calling ioctl() to re-read partition table.
Syncing disks.

- LVM volume group (logical)

Use an LVM volume group as a pool. You can either use a predefined volume group, or create a group by specifying the devices to use. Storage volumes are created as logical volumes in the volume group.

Warning: Deleting the LVM-based pool

When the LVM-based pool is deleted in the Storage Manager, the volume group is deleted as well. This results in a non-recoverable loss of all data stored on the pool.

- Multipath devices (mpath)

At the moment, multipathing support is limited to assigning existing devices to the guests. Volume creation or configuring multipathing from within libvirt is not supported.

- Network exported directory (netfs)

Specify a network directory to be used in the same way as a file system directory pool (a directory for hosting image files). The only difference to using a file system directory is that libvirt takes care of mounting the directory. The supported protocol is NFS.

- SCSI host adapter (scsi)

Use a SCSI host adapter in almost the same way as an iSCSI target. We recommend using a device name from /dev/disk/by-* rather than /dev/sdX. The latter can change (for example, when adding or removing hard disks). Volume creation on SCSI pools is not supported. Instead, each existing LUN (Logical Unit Number) represents a volume.

Warning: Security considerations

To avoid data loss or data corruption, do not attempt to use resources such as LVM volume groups, iSCSI targets, etc., that are also used to build storage pools on the VM Host Server. There is no need to connect to these resources from the VM Host Server or to mount them on the VM Host Server; libvirt takes care of this.

Do not mount partitions on the VM Host Server by label. Under certain circumstances it is possible that a partition is labeled from within a VM Guest with a name existing on the VM Host Server.

8.2.1 Managing storage with virsh

Managing storage from the command line is also possible by using virsh. However, creating storage pools is currently not supported by SUSE. Therefore, this section is restricted to documenting functions such as starting, stopping and deleting pools, and volume management.

A list of all virsh subcommands for managing pools and volumes is available by running virsh help pool and virsh help volume, respectively.

8.2.1.1 Listing pools and volumes

List all pools currently active by executing the following command. To also list inactive pools, add the option --all:

> virsh pool-list --details

Details about a specific pool can be obtained with the pool-info subcommand:

> virsh pool-info POOL

By default, volumes can only be listed per pool. To list all volumes from a pool, enter the following command:

> virsh vol-list --details POOL
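Because volumes are listed per pool, listing every volume on the host takes a loop over the pools. This sketch covers only active pools, because vol-list fails for an inactive pool. It relies on the --name option of virsh pool-list, which prints pool names only. The function name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: list the volumes of every active pool.
list_all_volumes() {
  virsh pool-list --name | while IFS= read -r pool; do
    # virsh ends the name list with an empty line.
    [ -n "$pool" ] || continue
    echo "== $pool"
    virsh vol-list --pool "$pool" --details < /dev/null
  done
}
```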

At the moment virsh offers no tools to show whether a volume is used by a guest or not. The following procedure describes a way to list volumes from all pools that are currently used by a VM Guest.

Procedure 8.2: Listing all storage volumes currently used on a VM Host Server

Create an XSLT stylesheet by saving the following content to a file, for example, ~/libvirt/guest_storage_list.xsl:

<?xml version="1.0" encoding="UTF-8"?>
<xsl:stylesheet version="1.0"
  xmlns:xsl="http://www.w3.org/1999/XSL/Transform">
  <xsl:output method="text"/>
  <xsl:template match="text()"/>
  <xsl:strip-space elements="*"/>
  <!-- Print the source of each disk, indented, one per line -->
  <xsl:template match="disk">
    <xsl:text>  </xsl:text>
    <xsl:value-of select="(source/@file|source/@dev|source/@dir)[1]"/>
    <xsl:text>&#10;</xsl:text>
  </xsl:template>
</xsl:stylesheet>

Run the following commands in a shell. It is assumed that the guest's XML definitions are all stored in the default location (/etc/libvirt/qemu). xsltproc is provided by the package libxslt.

SSHEET="$HOME/libvirt/guest_storage_list.xsl"
cd /etc/libvirt/qemu
for FILE in *.xml; do
  # Print the guest name, then the disks it uses
  basename "$FILE" .xml
  xsltproc "$SSHEET" "$FILE"
done
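The XSLT approach reads the XML files directly. As an alternative, you can ask libvirt for each domain's block devices with virsh domblklist. This sketch uses a hypothetical function name. It prints every domain, running or not, followed by the source of each of its disks. It assumes image paths without spaces and skips entries shown as - (for example, an empty CD-ROM drive).

```shell
#!/bin/sh
# Hypothetical helper: list the disk sources of all domains (running or not).
list_domain_disks() {
  virsh list --all --name | while IFS= read -r dom; do
    [ -n "$dom" ] || continue
    echo "$dom"
    # Skip the two header lines of domblklist; "-" means no source (empty drive).
    virsh domblklist "$dom" < /dev/null |
      awk 'NR > 2 && NF >= 2 && $2 != "-" { print "  " $2 }'
  done
}
```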

8.2.1.2 Starting, stopping, and deleting pools

Use the virsh pool subcommands to start, stop or delete a pool. Replace POOL with the pool's name or its UUID in the following examples:

- Stopping a pool

> virsh pool-destroy POOL

Note: A pool's state does not affect attached volumes

Volumes from a pool attached to VM Guests are always available, regardless of the pool's state (inactive, that is, stopped, or active, that is, started). The state of the pool solely affects the ability to attach volumes to a VM Guest via remote management.

- Deleting a pool

> virsh pool-delete POOL

- Starting a pool

> virsh pool-start POOL

- Enable autostarting a pool

> virsh pool-autostart POOL

Only pools that are marked to autostart are automatically started if the VM Host Server reboots.

- Disable autostarting a pool

> virsh pool-autostart POOL --disable

8.2.1.3 Adding volumes to a storage pool

virsh offers two ways to add volumes to storage pools: either from an XML definition with vol-create and vol-create-from or via command line arguments with vol-create-as. The first two methods are currently not supported by SUSE, therefore this section focuses on the subcommand vol-create-as.

To add a volume to an existing pool, enter the following command:

> virsh vol-create-as POOL NAME 12G --format raw|qcow2 --allocation 4G

- POOL: Name of the pool to which the volume should be added.

- NAME: Name of the volume.

- 12G: Size of the image, in this example 12 gigabytes. Use the suffixes k, M, G, T for kilobyte, megabyte, gigabyte, and terabyte, respectively.

- --format raw|qcow2: Format of the volume (specify one of the two). SUSE currently supports raw and qcow2.

- --allocation 4G: Optional parameter. When not specifying this parameter, a sparse image file with no allocation is generated. To create a non-sparse volume, specify the whole image size with this parameter (12G in this example).
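The choices above (format, sparse or fully allocated) can be wrapped in a helper that rejects unsupported formats before virsh is called. This is a sketch with a hypothetical function name and argument order. The "full" mode simply passes the whole size to --allocation, as described above.

```shell
#!/bin/sh
# Hypothetical wrapper around virsh vol-create-as.
# Usage: create_volume POOL NAME SIZE FORMAT [sparse|full]
create_volume() {
  local pool="$1" name="$2" size="$3" format="$4" mode="${5:-sparse}"
  # Only the formats named above are accepted.
  case "$format" in
    raw|qcow2) ;;
    *) echo "unsupported format: $format" >&2; return 1 ;;
  esac
  if [ "$mode" = full ]; then
    # Allocate the whole size up front: a non-sparse volume.
    virsh vol-create-as "$pool" "$name" "$size" --format "$format" --allocation "$size"
  else
    # Without --allocation, a sparse volume is created.
    virsh vol-create-as "$pool" "$name" "$size" --format "$format"
  fi
}

# Example: create_volume default data.qcow2 12G qcow2 full
```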

8.2.1.3.1 Cloning existing volumes

Another way to add volumes to a pool is to clone an existing volume. The new instance is always created in the same pool as the original.

> virsh vol-clone NAME_EXISTING_VOLUME NAME_NEW_VOLUME --pool POOL

8.2.1.4 Deleting volumes from a storage pool

To permanently delete a volume from a pool, use the subcommand vol-delete:

> virsh vol-delete NAME --pool POOL

--pool is optional. libvirt tries to locate the volume automatically. If that fails, specify this parameter.

Warning: No checks upon volume deletion

A volume is deleted in any case, regardless of whether it is currently used in an active or inactive VM Guest. There is no way to recover a deleted volume.

Whether a volume is used by a VM Guest can only be detected by using the method described in Procedure 8.2, “Listing all storage volumes currently used on a VM Host Server”.

8.2.1.5 Attaching volumes to a VM Guest #Edit source

After you create a volume as described in Section 8.2.1.3, “Adding volumes to a storage pool”, you can attach it to a virtual machine and use it as a hard disk:

> virsh attach-disk DOMAIN SOURCE_IMAGE_FILE TARGET_DISK_DEVICEFor example:

> virsh attach-disk sles12sp3 /virt/images/example_disk.qcow2 sda2

To check if the new disk is attached, inspect the result of the virsh dumpxml command:

# virsh dumpxml sles12sp3
[...]
<disk type='file' device='disk'>
  <driver name='qemu' type='raw'/>
  <source file='/virt/images/example_disk.qcow2'/>
  <backingStore/>
  <target dev='sda2' bus='scsi'/>
  <alias name='scsi0-0-0'/>
  <address type='drive' controller='0' bus='0' target='0' unit='0'/>
</disk>
[...]

8.2.1.5.1 Hotplug or persistent change

You can attach disks to both active and inactive domains. The attachment is controlled by the --live and --config options:

- --live: Hotplugs the disk to an active domain. The attachment is not saved in the domain configuration. Using --live on an inactive domain is an error.
- --config: Changes the domain configuration persistently. The attached disk is then available after the next domain start.
- --live --config: Hotplugs the disk and adds it to the persistent domain configuration.
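The following sketch combines these options: it always updates the persistent configuration and adds --live only when the domain appears in virsh list --name, which lists active domains. The function name is hypothetical. It also passes --subdriver qcow2 for .qcow2 files. This is an assumption for QEMU/KVM hosts, prompted by the sample dumpxml output above, which reports type='raw' for a .qcow2 image attached without an explicit format.

```shell
#!/usr/bin/env bash
# Hypothetical helper: persist the attachment (--config) and also hotplug
# it (--live) when the domain is currently active.
attach_disk_persistent() {
    local dom=$1 src=$2 target=$3
    local -a flags=(--config)
    # "virsh list --name" prints only active domains, one per line
    if virsh list --name | grep -Fxq "$dom"; then
        flags+=(--live)
    fi
    # Assumption (QEMU/KVM): state the qcow2 format explicitly
    case "$src" in
        *.qcow2) flags+=(--subdriver qcow2) ;;
    esac
    virsh attach-disk "$dom" "$src" "$target" "${flags[@]}"
}
```

This avoids the error that --live causes on an inactive domain while keeping the disk in the configuration for the next start.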

Tip: virsh attach-device

virsh attach-device is the more generic form of virsh attach-disk. You can use it to attach other types of devices to a domain.
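As a sketch of the generic form, the hypothetical function below writes a <disk> element modeled on the dumpxml output above to a temporary file and passes it to virsh attach-device with --config. The qcow2 driver type and bus='virtio' are assumptions; adjust them to the image format and the bus your guest uses.

```shell
#!/usr/bin/env bash
# Hypothetical helper: attach a disk through attach-device and an XML file.
attach_disk_xml() {
    local dom=$1 src=$2 target=$3 xml rc
    xml=$(mktemp) || return 1
    # Assumptions: qcow2 image, virtio bus
    cat > "$xml" <<EOF
<disk type='file' device='disk'>
  <driver name='qemu' type='qcow2'/>
  <source file='$src'/>
  <target dev='$target' bus='virtio'/>
</disk>
EOF
    # --config makes the change persistent; add --live to also hotplug it
    virsh attach-device "$dom" "$xml" --config
    rc=$?
    rm -f "$xml"
    return "$rc"
}
```

The same pattern works for other device types by writing a different XML element to the file.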

8.2.1.6 Detaching volumes from a VM Guest

To detach a disk from a domain, use virsh detach-disk:

# virsh detach-disk DOMAIN TARGET_DISK_DEVICE

For example:

# virsh detach-disk sles12sp3 sda2

You can control the detachment with the --live and --config options as described in Section 8.2.1.5, “Attaching volumes to a VM Guest”.
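detach-disk expects the target device name (such as sda2), not the image path. The hypothetical helper below looks up the target in the Target/Source table printed by virsh domblklist and then calls virsh detach-disk, forwarding options such as --live and --config. It assumes file-backed disks and paths without whitespace.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: detach a disk by its source image path.

# Print the target device that DOMAIN uses for SOURCE.
target_for_source() {
    local dom=$1 src=$2
    # Skip the two header lines and match the Source column exactly
    virsh domblklist "$dom" | awk -v s="$src" 'NR > 2 && $2 == s { print $1; exit }'
}

# Detach SOURCE from DOMAIN; remaining arguments are passed to detach-disk.
detach_by_source() {
    local dom=$1 src=$2 target
    shift 2
    target=$(target_for_source "$dom" "$src")
    if [ -z "$target" ]; then
        echo "$src is not attached to $dom" >&2
        return 1
    fi
    virsh detach-disk "$dom" "$target" "$@"
}
```

For example, detach_by_source sles12sp3 /virt/images/example_disk.qcow2 --live --config resolves the target sda2 and removes the disk from the running domain and its configuration.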

8.2.2 Managing storage with Virtual Machine Manager

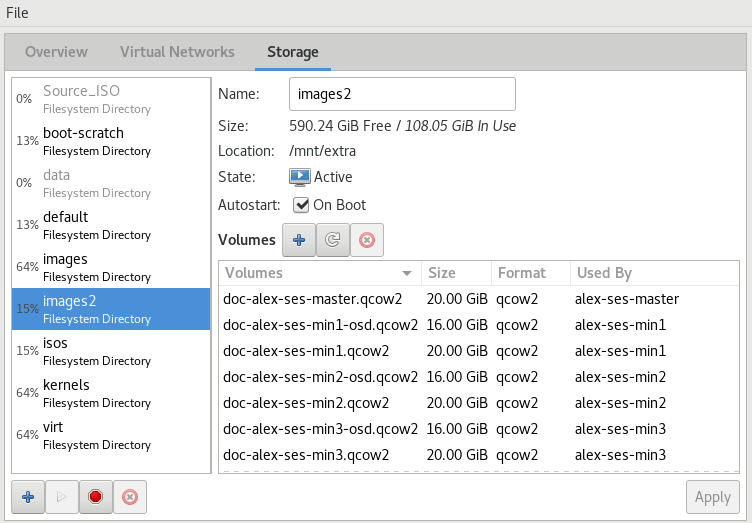

The Virtual Machine Manager provides a graphical interface—the Storage Manager—to manage storage volumes and pools. To access it, either right-click a connection and choose , or highlight a connection and choose › . Select the tab.

8.2.2.1 Adding a storage pool

To add a storage pool, proceed as follows:

Click in the bottom left corner. The dialog appears.

Provide a for the pool (consisting of only alphanumeric characters and _, -, or .) and select a .

Specify the required details below. They depend on the type of pool you are creating.

Important

ZFS pools are not supported.

- Type

: specify an existing directory.

- Type

: format of the device's partition table. Using should normally work. If not, get the required format by running the command parted -l on the VM Host Server.

: path to the device. It is recommended to use a device name from /dev/disk/by-* rather than the simple /dev/sdX, since the latter can change (for example, when adding or removing hard disks). You need to specify the path that refers to the whole disk, not a partition on the disk (if existing).

- Type

: mount point on the VM Host Server file system.

: file system format of the device. The default value auto should work.

: path to the device file. It is recommended to use a device name from /dev/disk/by-* rather than /dev/sdX, because the latter can change (for example, when adding or removing hard disks).

- Type

Get the necessary data by running the following command on the VM Host Server:

> sudo iscsiadm --mode node

It returns a list of iSCSI volumes in the following format. The elements in bold text are required:

IP_ADDRESS:PORT,TPGT TARGET_NAME_(IQN)

: the directory containing the device file. Use /dev/disk/by-path (default) or /dev/disk/by-id.

: host name or IP address of the iSCSI server.

: the iSCSI target name (iSCSI Qualified Name).

: the iSCSI initiator name.

- Type

: specify the device path of an existing volume group.

- Type

: support for multipathing is currently limited to making all multipath devices available. Therefore, specify an arbitrary string here. The path is required, otherwise the XML parser fails.

- Type

: mount point on the VM Host Server file system.

: IP address or host name of the server exporting the network file system.

: directory on the server that is being exported.

- Type

: host name of the server with an exported RADOS block device.

: name of the RADOS block device on the server.

- Type

: directory containing the device file. Use /dev/disk/by-path (default) or /dev/disk/by-id.

: name of the SCSI adapter.

Note: File browsing

Using the file browser by clicking is not possible when operating remotely.

Click to add the storage pool.
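Several values asked for in this dialog are easy to get wrong. The following hypothetical helpers, a sketch assuming bash and GNU coreutils, check a pool name against the character rule above, look up a stable /dev/disk/by-id name for a device such as /dev/sdX, and split one line of iscsiadm --mode node output into the server address and the target name (IQN).

```shell
#!/usr/bin/env bash
# Hypothetical helpers for preparing storage pool input.
# Assumes bash and GNU coreutils (readlink -f).

# Succeed if NAME consists only of alphanumeric characters, "_", "-" or ".".
valid_pool_name() {
    [[ -n $1 && $1 =~ ^[A-Za-z0-9_.-]+$ ]]
}

# Print the first link in DIR (default /dev/disk/by-id) that resolves to DEVICE.
stable_device_name() {
    local dev dir link
    dev=$(readlink -f "$1") || return 1
    dir=${2:-/dev/disk/by-id}
    for link in "$dir"/*; do
        if [ "$(readlink -f "$link")" = "$dev" ]; then
            echo "$link"
            return 0
        fi
    done
    return 1
}

# Turn "IP_ADDRESS:PORT,TPGT TARGET_NAME" into "IP_ADDRESS TARGET_NAME".
# Assumption: IPv4 address or host name (no bracketed IPv6 portal).
parse_iscsi_node() {
    local portal=${1%% *} target=${1#* }
    echo "${portal%%:*} $target"
}
```

For example, stable_device_name /dev/sdb prints the matching /dev/disk/by-id link, which stays valid when disks are added or removed.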

8.2.2.2 Managing storage pools

Virtual Machine Manager's Storage Manager lets you create or delete volumes in a pool. You may also temporarily deactivate or permanently delete existing storage pools. Changing the basic configuration of a pool is currently not supported by SUSE.

8.2.2.2.1 Starting, stopping, and deleting pools

The purpose of storage pools is to provide block devices located on the VM Host Server that can be added to a VM Guest when managing it remotely. To make a pool temporarily inaccessible remotely, click in the bottom left corner of the Storage Manager. Stopped pools are marked with and are grayed out in the list pane. By default, a newly created pool is automatically started when the VM Host Server boots.

To start an inactive pool and make it available remotely again, click in the bottom left corner of the Storage Manager.

Note: A pool's state does not affect attached volumes

Volumes from a pool attached to VM Guests are always available, regardless of the pool's state (stopped or started). The state of the pool solely affects the ability to attach volumes to a VM Guest via remote management.
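On the command line, the corresponding actions are virsh pool-destroy POOL (stops the pool without changing its data), virsh pool-start POOL, and virsh pool-autostart POOL (or --disable to turn autostart off). The hypothetical helper below reads the State and Autostart lines of virsh pool-info to check the result; it assumes the "Key: value" layout that pool-info prints.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "STATE AUTOSTART" for a pool, for example
# "running yes" or "inactive no", based on the output of virsh pool-info.
pool_status() {
    virsh pool-info "$1" | awk -F':[[:space:]]*' '
        $1 == "State"     { state = $2 }
        $1 == "Autostart" { autostart = $2 }
        END { print state, autostart }'
}
```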

To permanently make a pool inaccessible, click in the bottom left corner of the Storage Manager. You can only delete inactive pools. Deleting a pool does not physically erase its contents on the VM Host Server; it only deletes the pool configuration. However, you need to be extra careful when deleting pools, especially when deleting LVM volume group-based pools:

Warning: Deleting storage pools

Deleting storage pools based on local file system directories, local partitions or disks has no effect on the availability of volumes from these pools currently attached to VM Guests.

Volumes located in pools of type iSCSI, SCSI, LVM group or Network Exported Directory become inaccessible from the VM Guest if the pool is deleted. Although the volumes themselves are not deleted, the VM Host Server can no longer access the resources.

Volumes on iSCSI/SCSI targets or Network Exported Directory become accessible again when creating a matching new pool or when mounting or accessing these resources directly from the host system.

When deleting an LVM group-based storage pool, the LVM group definition is erased and the LVM group no longer exists on the host system. The configuration is not recoverable and all volumes from this pool are lost.
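Since the consequences depend on the pool type, a check before deleting can help. The hypothetical helper below reads the type attribute from the first line of virsh pool-dumpxml and maps libvirt's pool type names to the cases in the warning above. The mapping (dir, fs, and disk for local directories, partitions, and disks; iscsi, scsi, and netfs for the network and SCSI cases; logical for LVM groups) is an interpretation of the warning, not an official table.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: summarize what deleting a pool means for its volumes.

# Print the pool type from the first line, for example <pool type='logical'>.
pool_type() {
    virsh pool-dumpxml "$1" | sed -n "s/^<pool type='\([^']*\)'.*/\1/p"
}

pool_delete_impact() {
    case "$(pool_type "$1")" in
        dir|fs|disk)      echo "attached volumes stay available" ;;
        iscsi|scsi|netfs) echo "attached volumes become inaccessible until the pool is re-created" ;;
        logical)          echo "volume group is erased; all volumes are lost" ;;
        *)                echo "unknown; check the documentation before deleting" ;;
    esac
}
```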

8.2.2.2.2 Adding volumes to a storage pool

Virtual Machine Manager lets you create volumes in all storage pools, except in pools of types Multipath, iSCSI or SCSI. A volume in these pools is equivalent to a LUN and cannot be changed from within libvirt.

A new volume can either be created using the Storage Manager or while adding a new storage device to a VM Guest. In either case, select a storage pool from the left panel, then click .

Specify a for the image and choose an image format.

SUSE currently only supports raw or qcow2 images. The latter option is not available on LVM group-based pools.

Next to , specify the maximum size that the disk image is allowed to reach. Unless you are working with a qcow2 image, you can also set an amount for that should be allocated initially. If the two values differ, a sparse image file is created, which grows on demand.

For qcow2 images, you can use a (also called “backing file”), which constitutes a base image. The newly created qcow2 image then only records the changes that are made to the base image.

Start the volume creation by clicking .
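The command-line counterpart of a volume with a backing file is virsh vol-create-as with --backing-vol and --backing-vol-format. The sketch below, with a hypothetical function name, creates a qcow2 overlay; it assumes the pool is not LVM group-based (qcow2 is unavailable there) and that the base image is a qcow2 volume in the same pool.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create a qcow2 volume that records only the changes
# made on top of BASE. Assumes a non-LVM pool and a qcow2 base volume.
create_overlay() {
    local pool=$1 name=$2 size=$3 base=$4
    virsh vol-create-as "$pool" "$name" "$size" \
        --format qcow2 \
        --backing-vol "$base" \
        --backing-vol-format qcow2
}
```

For example, create_overlay POOL web01.qcow2 20G base.qcow2 (hypothetical volume names) creates web01.qcow2, which initially contains only references to base.qcow2.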

8.2.2.2.3 Deleting volumes from a storage pool

Deleting a volume can only be done from the Storage Manager, by selecting a volume and clicking . Confirm with .

Warning: Volumes can be deleted even while in use

Volumes can be deleted even if they are currently used in an active or inactive VM Guest. There is no way to recover a deleted volume.

Whether a volume is used by a VM Guest is indicated in the column in the Storage Manager.